Emulating a Global Test Network with Netropy Cloud Edition

Tom Fenton tries out a new tool to introduce latency, jitter and other common network disturbances into an AWS environment.

I recently wrote an article on NetropyVE, a virtual machine (VM) that is used to introduce latency and other network anomalies into a vSphere environment. I found NetropyVE to be easy to work with, and it did a great job making VMs appear to be thousands of miles apart or behave as though they had a very bad network connection -- overall, a good way to test how a vSphere environment would operate under certain network conditions.

Apposite Technologies, the company behind NetropyVE, just announced Netropy Cloud Edition (NetropyCE), which is now available in the Amazon Web Services (AWS) Marketplace and can introduce latency, jitter and other common network disturbances into an AWS environment. In this article, I will give an overview of NetropyCE, show how it is used in AWS, and then give my final thoughts on the emulator.

Introduction

It may seem counter-intuitive to want to slow down the network traffic between AWS-based VMs, but, just as with NetropyVE, there are some very good reasons to do so. Sure, by placing VMs in different regions you could see how distance affects the performance of your instances, but this might not replicate the end user's experience, and paying for network traffic between the different AWS locations where your instances run could get rather expensive.

AWS is very good at keeping network traffic between its different hosting centers optimal, and that can actually be a problem when you are trying to see how an application would behave in the real world, where the connection between an AWS-hosted VM and an end user will suffer other network anomalies. There is also the problem of coordinating the test instances so that they really are in disparate locations. AWS charges a fee for network traffic between its different locations, and although it is just a few cents per GB, this cost can and will add up. With NetropyCE, by contrast, all traffic stays in one datacenter, so you will not incur AWS ingress and egress network traffic charges. In short, NetropyCE makes it easier to evaluate how applications will behave under suboptimal network connections, and it is reasonably priced at $1.49 per hour.
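To put the cost trade-off in concrete terms, the sketch below compares a flat per-GB transfer charge against NetropyCE's hourly price. The $1.49 hourly figure comes from this article; the $0.02/GB inter-region rate and the 500 GB test volume are assumptions for illustration only, so substitute current AWS pricing for your regions. The `instance_hourly` parameter is there to add the cost of the EC2 instance that hosts NetropyCE, if that is billed separately.

```python
# Back-of-the-envelope comparison of inter-region transfer charges versus
# running NetropyCE for a test window. The per-GB rate used below is an
# ASSUMED example, not a quoted AWS price.

NETROPYCE_HOURLY_USD = 1.49  # price quoted in the article


def transfer_cost(gb_transferred: float, rate_per_gb: float) -> float:
    """Cost of moving data between AWS locations at a flat per-GB rate."""
    return gb_transferred * rate_per_gb


def emulator_cost(hours: float, hourly_rate: float = NETROPYCE_HOURLY_USD,
                  instance_hourly: float = 0.0) -> float:
    """Cost of running the emulator; add the EC2 instance rate if it applies."""
    return hours * (hourly_rate + instance_hourly)


def break_even_gb_per_hour(rate_per_gb: float,
                           hourly_rate: float = NETROPYCE_HOURLY_USD) -> float:
    """Hourly traffic volume above which the emulator is the cheaper option."""
    return hourly_rate / rate_per_gb


if __name__ == "__main__":
    assumed_rate = 0.02  # hypothetical inter-region rate in USD per GB
    print(f"500 GB across regions: ${transfer_cost(500, assumed_rate):.2f}")
    print(f"8-hour NetropyCE session: ${emulator_cost(8):.2f}")
    print(f"Break-even: {break_even_gb_per_hour(assumed_rate):.1f} GB/hour")
```

With the assumed $0.02/GB rate, the emulator becomes the cheaper option once a test pushes more than about 74.5 GB per hour between regions; the endpoint instances cost the same either way, so they are left out of the comparison.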

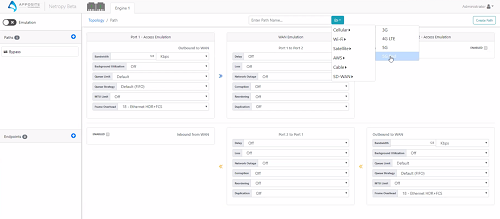

As NetropyCE is a new product, I had Joseph Zeto, CEO at Apposite, walk me through a sample scenario using the product. The first thing that I noticed is that NetropyCE has a slick new GUI (Figure 1). The NetropyCE interface is based on HTML5, whereas the version of NetropyVE that I used in the past was based on Flash. Joseph did mention, however, that Apposite will be updating NetropyVE to the same interface as NetropyCE shortly. He also said that NetropyCE (as will also be the case with the next version of NetropyVE) is an API-first product, meaning that anything that can be done from the GUI can also be accomplished via API calls. Having an API-driven product is huge, and it opens the product up to all sorts of interesting use cases. Joseph shared the Netropy 4.0 RESTful API Quick Reference Guide with me; it has some good examples that will allow a programmer or developer to quickly write code that integrates NetropyCE testing into their organization's workflows.

Figure 1: Netropy CE Interface
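Because the product is API-first, a test harness could push impairment settings from a script instead of the GUI. The sketch below only builds a JSON request with Python's standard library and does not send it. The host, path, HTTP method and JSON field names are all hypothetical placeholders, not the actual Netropy 4.0 API; the real ones should come from Apposite's quick reference guide.

```python
import json
import urllib.request

# HYPOTHETICAL placeholders for illustration only; replace with the values
# documented in the Netropy 4.0 RESTful API Quick Reference Guide.
NETROPY_HOST = "https://netropy.example.internal"
IMPAIRMENT_PATH = "/api/hypothetical/impairments"


def build_impairment_request(latency_ms: float, jitter_ms: float,
                             loss_pct: float) -> urllib.request.Request:
    """Build (but do not send) a JSON request that sets link impairments."""
    if latency_ms < 0 or jitter_ms < 0:
        raise ValueError("latency and jitter must be non-negative")
    if not 0.0 <= loss_pct <= 100.0:
        raise ValueError("loss must be between 0 and 100 percent")
    body = json.dumps({
        "latency_ms": latency_ms,  # hypothetical field name
        "jitter_ms": jitter_ms,    # hypothetical field name
        "loss_pct": loss_pct,      # hypothetical field name
    }).encode("utf-8")
    return urllib.request.Request(
        NETROPY_HOST + IMPAIRMENT_PATH,
        data=body,
        headers={"Content-Type": "application/json"},
        method="PUT",  # hypothetical; use whatever verb the guide specifies
    )


if __name__ == "__main__":
    req = build_impairment_request(latency_ms=150, jitter_ms=20, loss_pct=0.5)
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # Sending it would be: urllib.request.urlopen(req)
```

Keeping request construction separate from sending makes it easy to unit-test the payload in a CI pipeline before pointing it at a live NetropyCE instance.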

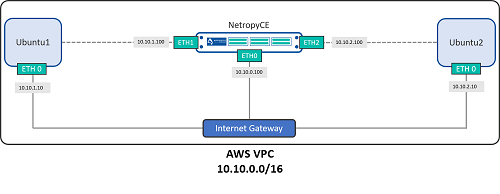

To demonstrate NetropyCE's functionality, Joseph set up an AWS environment with two Linux VMs connected through NetropyCE. Each of the Linux VMs had two NICs: one for management, and the other for connecting to NetropyCE. The NetropyCE VM had three NICs: one for management, and the other two for connecting to each of the VMs (Figure 2).

Figure 2: Netropy CE Test Environment
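The demo topology can be written down as data and sanity-checked before anything is deployed. This sketch assumes each test path gets its own subnet, shared by one Linux VM and one NetropyCE port; that layout and all the names used are illustrative assumptions, not Apposite's documented setup.

```python
from dataclasses import dataclass

# ASSUMPTION: one subnet per test path, each shared by one Linux VM and one
# NetropyCE port. All names below are hypothetical.


@dataclass(frozen=True)
class Nic:
    role: str    # "management" or "test"
    subnet: str  # hypothetical subnet label


@dataclass(frozen=True)
class Instance:
    name: str
    nics: tuple  # tuple of Nic


def roles(inst: Instance, role: str) -> list:
    """NICs on an instance that have the given role."""
    return [n for n in inst.nics if n.role == role]


def validate(emulator: Instance, vms: list) -> list:
    """Return a list of problems; an empty list means the layout is consistent."""
    problems = []
    if len(roles(emulator, "management")) != 1 or len(roles(emulator, "test")) != 2:
        problems.append("emulator needs 1 management NIC and 2 test NICs")
    port_subnets = {n.subnet for n in roles(emulator, "test")}
    used = []
    for vm in vms:
        if len(roles(vm, "management")) != 1 or len(roles(vm, "test")) != 1:
            problems.append(f"{vm.name}: needs 1 management NIC and 1 test NIC")
            continue
        subnet = roles(vm, "test")[0].subnet
        if subnet not in port_subnets:
            problems.append(f"{vm.name}: test NIC is not on an emulator port subnet")
        used.append(subnet)
    # Each VM should sit behind a different port so traffic must cross the emulator.
    if len(set(used)) != len(used):
        problems.append("VMs share one emulator port; traffic would bypass the emulator")
    return problems


if __name__ == "__main__":
    mgmt = Nic("management", "mgmt-subnet")
    emulator = Instance("netropyce", (mgmt, Nic("test", "path-a"), Nic("test", "path-b")))
    vm1 = Instance("linux-1", (mgmt, Nic("test", "path-a")))
    vm2 = Instance("linux-2", (mgmt, Nic("test", "path-b")))
    print(validate(emulator, [vm1, vm2]))  # []
```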

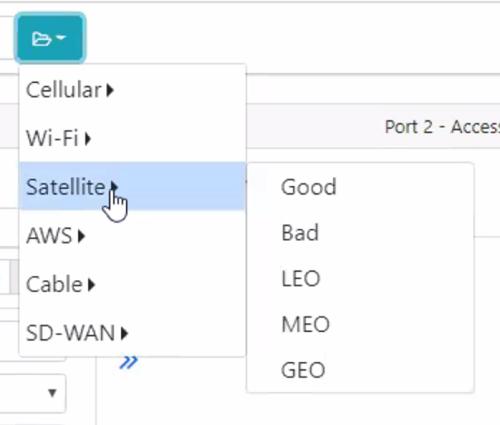

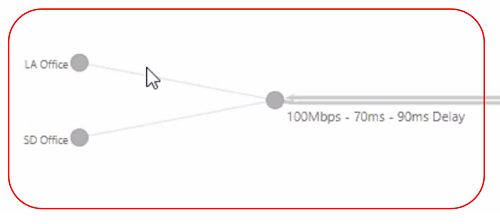

Joseph demonstrated predefined network scenarios (Figure 3), one of the new features in NetropyCE. Now, rather than setting up the parameters for, say, a satellite connection by hand, you can simply select that scenario from the NetropyCE menu. You can also create your own scenarios and save them to this menu.

Figure 3: Netropy CE Scenarios
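Conceptually, a scenario is just a named bundle of impairment settings. The sketch below stores a few presets, lets you save your own, and applies one using a generic model: a fixed base delay, uniformly distributed jitter and random packet loss. The preset values are rough illustrative assumptions, not Apposite's predefined scenarios, and the uniform jitter model is a simplification, not necessarily how Netropy implements it.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Scenario:
    latency_ms: float  # fixed one-way delay added to every packet
    jitter_ms: float   # upper bound of the random extra delay per packet
    loss_pct: float    # percentage of packets dropped (0-100)


# ILLUSTRATIVE values only; these are not Apposite's predefined scenarios.
SCENARIOS = {
    "satellite (illustrative)": Scenario(latency_ms=300, jitter_ms=20, loss_pct=0.5),
    "cross-continent (illustrative)": Scenario(latency_ms=80, jitter_ms=5, loss_pct=0.1),
}


def save_scenario(name: str, scenario: Scenario) -> None:
    """Add a user-defined scenario, refusing to overwrite an existing entry."""
    if name in SCENARIOS:
        raise KeyError(f"scenario {name!r} already exists")
    SCENARIOS[name] = scenario


def packet_delay_ms(s: Scenario, rng: random.Random) -> Optional[float]:
    """Generic model: None if the packet is dropped, else base delay plus jitter."""
    if rng.random() * 100 < s.loss_pct:
        return None
    return s.latency_ms + rng.uniform(0, s.jitter_ms)


if __name__ == "__main__":
    save_scenario("branch office (hypothetical)", Scenario(40, 10, 1.0))
    rng = random.Random(42)  # seeded so runs are repeatable
    sat = SCENARIOS["satellite (illustrative)"]
    delays = [packet_delay_ms(sat, rng) for _ in range(1000)]
    delivered = [d for d in delays if d is not None]
    print(f"{len(delivered)} of 1000 delivered, max delay {max(delivered):.1f} ms")
```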

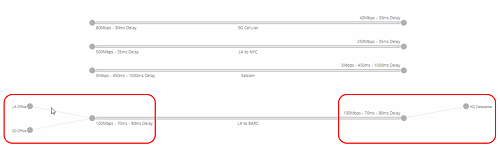

The way NetropyCE works is that you use either the GUI or the API to set up endpoints (Figure 4) and connect them to NetropyCE's two ports (Figure 5). An endpoint can be any AWS instance (Linux, Windows and so on), and NetropyCE supports multiple endpoints.

Figure 4: NEC Connections

Figure 5: NEC Endpoint
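The endpoint-and-port arrangement can be pictured as a lookup table: each endpoint sits behind one of the two ports, and a flow between endpoints on opposite ports passes through the emulator. The `EndpointTable` class, the IP addresses and the rule that same-port traffic is not impaired are assumptions for illustration, not NetropyCE's API.

```python
import ipaddress

# HYPOTHETICAL model of endpoint-to-port assignment; not the product API.
# Assumption: only traffic between endpoints on opposite ports crosses the
# emulator and is therefore impaired.


class EndpointTable:
    PORTS = (1, 2)

    def __init__(self):
        self._port_of = {}

    def add(self, ip: str, port: int) -> None:
        """Register an endpoint (any instance: Linux, Windows, etc.) on a port."""
        if port not in self.PORTS:
            raise ValueError(f"port must be one of {self.PORTS}")
        self._port_of[ipaddress.ip_address(ip)] = port

    def direction(self, src: str, dst: str):
        """Return (ingress_port, egress_port) if the flow crosses the emulator."""
        a = self._port_of.get(ipaddress.ip_address(src))
        b = self._port_of.get(ipaddress.ip_address(dst))
        if a is None or b is None or a == b:
            return None  # unknown endpoint, or both on the same side
        return (a, b)


if __name__ == "__main__":
    table = EndpointTable()
    table.add("10.0.1.10", 1)  # hypothetical Linux endpoint
    table.add("10.0.1.11", 1)  # a second endpoint on the same port
    table.add("10.0.2.20", 2)  # hypothetical Windows endpoint
    print(table.direction("10.0.1.10", "10.0.2.20"))  # (1, 2)
    print(table.direction("10.0.1.10", "10.0.1.11"))  # None
```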

Joseph suggested using a C5 Large instance for NetropyCE, not because it needs the CPU and memory (it uses very few resources), but because C5 Large instances have better network QoS specifications. If you are doing light testing, you could get away with a smaller instance size, but the instance should have at least 2 vCPUs, 8GB of RAM and support for three network interfaces.
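A quick way to screen candidate instance types against those minimums is a small checker like the one below. The thresholds come from the paragraph above; the `m5.large` and `t3.micro` figures in the usage example are examples to confirm against AWS's instance-type documentation before relying on them.

```python
from dataclasses import dataclass

# Minimums stated in the article for a NetropyCE instance.
MIN_VCPUS, MIN_MEMORY_GIB, MIN_ENIS = 2, 8, 3


@dataclass(frozen=True)
class InstanceSpec:
    name: str
    vcpus: int
    memory_gib: float
    max_enis: int  # maximum network interfaces the type supports


def shortfalls(spec: InstanceSpec) -> list:
    """Return the requirements this instance type fails to meet."""
    issues = []
    if spec.vcpus < MIN_VCPUS:
        issues.append(f"{spec.vcpus} vCPUs < {MIN_VCPUS}")
    if spec.memory_gib < MIN_MEMORY_GIB:
        issues.append(f"{spec.memory_gib} GiB RAM < {MIN_MEMORY_GIB}")
    if spec.max_enis < MIN_ENIS:
        issues.append(f"{spec.max_enis} NICs < {MIN_ENIS}")
    return issues


if __name__ == "__main__":
    # Example specs; verify against AWS's instance-type documentation.
    for spec in (InstanceSpec("m5.large", 2, 8, 3), InstanceSpec("t3.micro", 2, 1, 2)):
        print(spec.name, shortfalls(spec) or "meets the stated minimums")
```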

While the product was in beta, Apposite had customers use it in a variety of ways; the one that I found most interesting was a company using it in its demo environment so that sales engineers could show potential customers how well its application behaved under high-latency conditions.

NetropyCE can be acquired from the AWS Marketplace (Figure 6) by searching for "Netropy."

Figure 6: Get NetropyCE in the AWS Marketplace

Conclusions

During the short time that I spent with NetropyCE, I was impressed with its usability. Its GUI was straightforward and intuitive; yes, you will need to become familiar with its terminology, but after doing so, anyone with a very basic understanding of networking will be able to set up scenarios to test how well their instances will behave when they experience adverse networking conditions. Beyond the GUI, I think one of NetropyCE's most interesting features is that it is API-driven, which means you will be able to deploy testing scenarios programmatically; this is huge and very important in today's software-defined datacenter.

In sum, NetropyCE appears to be a very capable product for testing how various network conditions would affect your AWS-based applications: casual and non-technical users can drive it from its GUI, while its API supports automated testing.

About the Author

Tom Fenton has a wealth of hands-on IT experience gained over the past 30 years in a variety of technologies, with the past 20 years focusing on virtualization and storage. He previously worked as a Technical Marketing Manager for ControlUp. He also previously worked at VMware in Staff and Senior level positions. He has also worked as a Senior Validation Engineer with The Taneja Group, where he headed the Validation Service Lab and was instrumental in starting up its vSphere Virtual Volumes practice. He's on X @vDoppler.