Hyper-V Deep Dive 7: New Storage Enhancements

Storing VMs on file shares, CSV enhancements, guest Fibre Channel, and the new VHDX format: it's all about better ways to work with storage in this version of Hyper-V.

We've looked at quite a bit so far in the first six parts of this series -- scalability improvements, NUMA, VM monitoring and replication, to name a few. Let's turn our attention to a hot topic and see what's improved in Hyper-V as far as storage is concerned: storing VMs on file shares, enhancements to Cluster Shared Volumes, guest Fibre Channel, SMB Direct and Offloaded Data Transfer, plus the new VHDX format.

Let's start with file sharing. If I had to pick one foundational technology that's taken the biggest leap forward in Windows Server 2012, it'd be SMB 3.0. This stalwart of file sharing has been around since the dawn of Windows, but the version in Server 2012 (and Windows 8) is a very different beast from its predecessors. It delivers 97 to 98 percent of the performance of direct-attached storage and is optimized for hosting server application workloads, such as SQL Server 2012 and Hyper-V VM disks, in ordinary file shares, providing unprecedented flexibility.

|

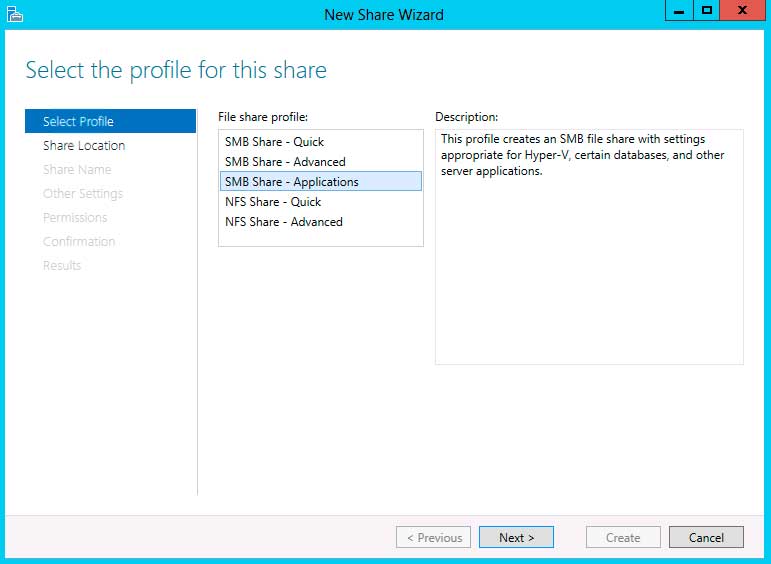

Figure 1. Make sure you create the right type of file share if you're planning to store your VMs on file shares. (Click image to view larger version.) |

VMs that have their VHD(X) files stored on SMB 3.0 file shares can be Live Migrated between Hyper-V hosts, but don't throw out your cluster setup -- Live Migration on its own doesn't provide high availability. If a Hyper-V host goes down, other hosts won't be notified (since they're not in a cluster) and thus the VMs won't automatically restart.

While Microsoft outlines designs of converged clusters where some nodes are storage nodes, providing shared storage for Hyper-V host nodes, note that it's not supported for a host to be both a file share host and a Hyper-V host simultaneously. If the link between a Hyper-V host and its storage is temporarily interrupted, Hyper-V will cache I/O at both ends for up to one minute. SMB 3.0 Multichannel will use all available network paths between a host and a file share without any additional configuration (NIC teaming, which I covered in part 4, is only necessary when you want other protocols to be teamed) and offers protection against an accidentally disconnected network cable.

Potential datacenter designs become even more interesting when you consider using SANs as the back-end storage infrastructure for SMB 3.0 file shares with CSV 2.0, the new version of Microsoft's Cluster Shared Volume file system. This combination is called a Scale Out File Server (SOFS) and is suitable for server application workloads (SQL Server, Hyper-V) but not general document file sharing (the traditional file share cluster role should be used for this). In this scenario each file share host can access the same back-end data and serve it out to Hyper-V nodes. If one file share host goes down, access transparently fails over to another file share host.

CHKDSK has been improved in Windows Server 2012 by splitting the time-consuming analysis of the disk from the fixing phase and making the analysis phase able to run while the disk is online. This means that CHKDSK can now check and fix huge volumes with only minimal downtime (minutes compared to hours). In a SOFS, however, the quick fixing phase can be performed by one node while other nodes still access the volume. The result is zero downtime when disk checking.

No discussion about storage for Hyper-V in Windows Server 2012 is complete without mentioning Storage Spaces, a feature that lets you use commodity servers and storage to build “SAN-like” scalable storage with built-in data protection. While Storage Spaces isn't a replacement for a high-end SAN, there are plenty of scenarios, both in the SMB and enterprise world, where this type of storage can be effective. Another option for HA Hyper-V storage is the built-in support for shared SAS chassis, where RAID controllers in each host synchronize their information. This is known as clustered PCI RAID.

The final piece of the puzzle of high-speed file sharing is SMB Direct, where Remote Direct Memory Access-capable NICs can provide phenomenal performance for storage access. Improvements inside Hyper-V -- such as having one I/O channel per 16 vCPUs in the VM (Windows Server 2008 R2 offered only one for the entire VM), one I/O queue per SCSI disk (limited in 2008 R2 to one per controller) and I/O interrupts scaling dynamically across vCPUs instead of using only one as in previous versions -- all contribute to high levels of performance. Microsoft has demonstrated one million IOPS from a single VM.

Volume Shadow Copy Service (VSS) has also been improved, making it possible to back up data sources on remote shares in a consistent state, just as you could with local storage in Windows Server 2008 R2.

CSV: another file system?

If you look at a shared volume in Server Manager that has CSV enabled in Windows Server 2012, it specifies the file system as CSVFS, but it still relies on NTFS underneath. There are other improvements in CSV 2.0 apart from the zero-downtime CHKDSK mentioned above; it has also been enhanced to be more compatible with backup software. The older version used custom reparse points to mount shared storage, which required backup software vendors to customize their applications to know how to back them up; Windows Server 2012 uses standard mount points, which should make things easier for ISVs.

There's also a new feature called CSV cache that uses system memory on file share hosts to cache reads, which can substantially improve performance, especially in VDI scenarios where I/O is often a bottleneck. The suggested cache size is 512 MB, but you'll need to test it in your environment with your load. There's no limit on the number of CSV volumes you can have, nor on the number of files you can have on each volume, so any limits are based on what your hardware supports.

Taking a load off

Modern SANs are powerful and offer many advanced features; Offloaded Data Transfer (ODX) gives Windows the ability to use these features natively and intelligently. An example of how ODX works for Hyper-V is creating an 80 GB fixed VHD disk: Windows merely asks the ODX SAN for a file of that size and doesn't have to send data over the network. Contrast this with a non-ODX SAN, where a host has to send 80 GB of zeros to the new file on the SAN, with the accompanying network, processor and memory load. For file copies and moves from one location to another, Windows merely handles ODX tokens and all the transfers are done directly within the SAN.
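To put the 80 GB example in perspective, here is a minimal back-of-the-envelope sketch in Python of the best-case time a non-ODX host would spend pushing those zeros across the wire. The function name and the two link speeds are illustrative assumptions, not figures from Microsoft, and the calculation ignores protocol overhead and the speed of the SAN itself.

```python
# Rough estimate of what a non-ODX host pays to zero-fill a fixed disk.
# Link speeds are illustrative assumptions, not measurements.

GIB = 1024 ** 3  # bytes per GiB (treating the article's "80 GB" as binary units)


def zero_fill_seconds(disk_bytes: int, link_bits_per_second: float) -> float:
    """Best-case seconds to send disk_bytes of zeros over the given link."""
    if link_bits_per_second <= 0:
        raise ValueError("link speed must be positive")
    return disk_bytes * 8 / link_bits_per_second


if __name__ == "__main__":
    disk = 80 * GIB
    for label, speed in (("1 Gbit/s", 1e9), ("10 Gbit/s", 10e9)):
        minutes = zero_fill_seconds(disk, speed) / 60
        print(f"{label}: at least {minutes:.1f} minutes")
```

Even under these generous assumptions the copy takes minutes on a 1 Gbit/s link, whereas an ODX request moves no disk data over the network at all.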

Windows Server 2012 implements ODX in two ways. First, when a Hyper-V host is connected to an ODX SAN, any operation such as creating or deleting VHD(X) files, or merging snapshots, is offloaded to the SAN. Second, if you have Windows Server 2012 VMs that reside on an ODX-enabled SAN, file operations inside them will also be accelerated. This reduces the time for these operations from minutes to seconds, especially for large files.

A new virtual hard disk

The new VHDX virtual hard disk format has been mentioned several times in this article series already, and the seemingly minor addition of an X offers many benefits. For one, it is more resilient to corruption if a power outage occurs during a metadata operation, as it keeps internal logs for these operations. Also, there's now the opportunity for developers and users to embed their own management data in VHDX files. But the biggest improvement is the max size of 64 TB (with a theoretical max size of 1.4 PB).

There are built-in tools for converting VHD files to VHDX (and vice versa, as long as the disk is smaller than 2 TB). The new format also correctly aligns on newer hard drives with 4K or 512e sectors, unlike the VHD format, which can have issues on 512e disks. The issue is also fixed if you create a new VHD in Windows Server 2012, and you can take an existing VHD from 2008 R2 and convert it to a VHD in Windows Server 2012, which will also fix alignment issues.

|

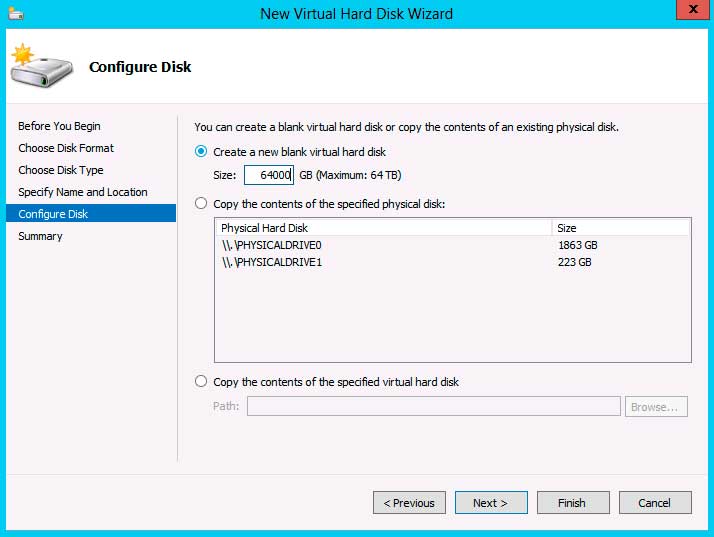

Figure 2. With disk sized increasing every year having the ability to create 64 TB virtual hard disks is future proofing the platform. (Click image to view larger version.) |

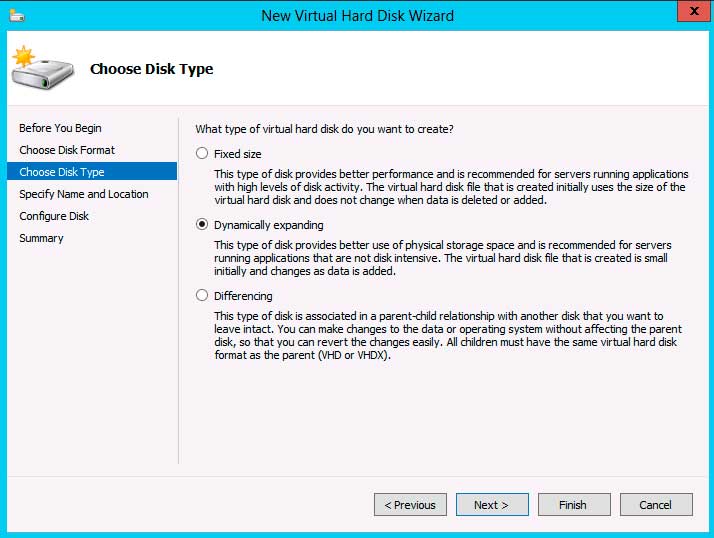

The performance difference between fixed size (thick provisioned), dynamic (thin provisioned) and pass-through disks have been steadily diminishing and in Windows Server 2012 there's no reason to use pass-through disks for performance, especially since you lose most of the benefits of virtualization when you use them. The performance gap between fixed and dynamic is also minute. So, just be aware if you do decide to use dynamic disks, make sure you have a mechanism to track and forecast your disk usage -- it's all too easy to run out of storage space.

|

Figure 3. With the performance difference between fixed and dynamic VHD(X) now minimal, using dynamic disks in production is a viable option. (Click image to view larger version.) |
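As a starting point for the tracking and forecasting that dynamic disks call for, here is a minimal Python sketch that extrapolates linear growth from periodic usage samples. The function name, the sample format and the numbers are hypothetical; a real environment would feed in measurements from its own monitoring and would probably want something smarter than a straight line.

```python
from typing import Optional, Sequence, Tuple


def days_until_full(
    samples: Sequence[Tuple[float, float]], capacity_gb: float
) -> Optional[float]:
    """Estimate days until the volume holding the dynamic disks fills up.

    samples holds (day, used_gb) pairs in chronological order.
    Returns None when usage is flat or shrinking.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    (first_day, first_used), (last_day, last_used) = samples[0], samples[-1]
    elapsed_days = last_day - first_day
    if elapsed_days <= 0:
        raise ValueError("samples must span a positive amount of time")
    growth_per_day = (last_used - first_used) / elapsed_days
    if growth_per_day <= 0:
        return None  # no growth observed, so no forecast
    return max(0.0, (capacity_gb - last_used) / growth_per_day)


if __name__ == "__main__":
    # Hypothetical readings: 400 GB used on day 0, 460 GB on day 30, 1,000 GB volume.
    print(days_until_full([(0, 400.0), (30, 460.0)], capacity_gb=1000.0))
```

With these made-up readings the volume grows 2 GB a day and has 540 GB free, so the sketch reports 270 days of headroom.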

VHDX also supports reclaiming unused space, similar to how TRIM works for SSDs and unmap works for SANs. Traditional file systems don't actually delete file data; they merely delete the pointers to it. With TRIM, the OS reclaims the space. In normal VHDX operations this is done lazily in the background, so if you're using a dynamic disk and you delete files, that space will be reused for new files without having to expand the physical VHDX file again. If you shut down the VM, the VHDX file will be physically reduced in size. Neither of these can happen with VHD files.

Virtual Fibre Channel

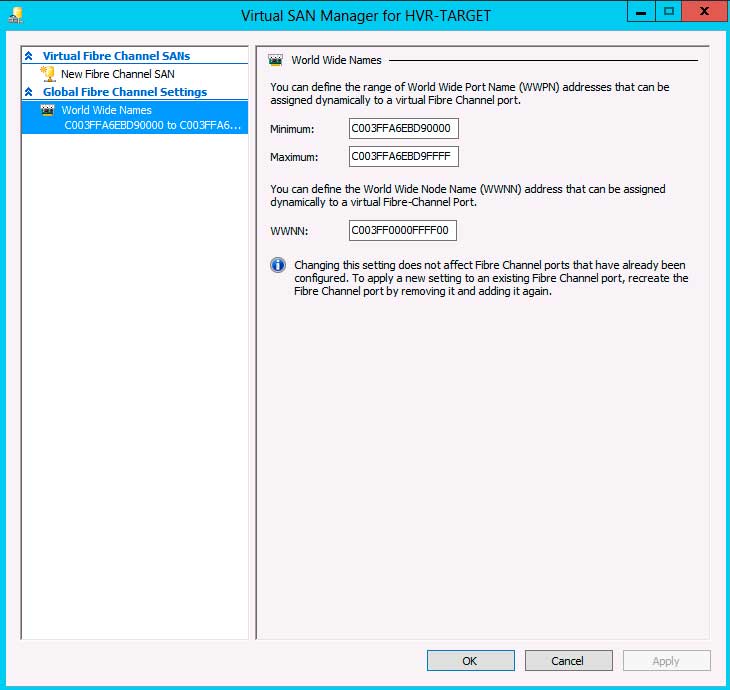

Another area where VMware was ahead was the fact that if you wanted to create a guest cluster (for application HA) in Windows Server 2008 R2 Hyper-V you had to use iSCSI, as there was no way to connect VMs to a Fibre Channel (FC) SAN. This is fixed in Windows Server 2012 and Virtual Fibre Channel (VFC) gives the ability to cluster VMs (guest clustering) with supported applications, with each VM being able to have up to four VFC HBAs. The Microsoft virtual Host Bus Adapter (HBA) directly exposes FC LUNs to VMs, so be aware that the SAN, your FC switch and your HBAs has to support N_Port ID Virtualization (NPIV). Even in a testing lab you can't get away with a direct FC cable connection -- you need a FC switch.

|

Figure 4. Setting up Virtual Fibre Channel is easy, as long as your HBAs have drivers for Windows Server 2012. (Click image to view larger version.) |

True to the theme of this release of Hyper-V, Microsoft wasn't content just to replicate VMware functionality but went a step further and made it possible to Live Migrate a VM using VFC. SANs, however, generally take between 30 and 60 seconds to unmap a LUN and expose it on a port, which clearly won't work for Live Migration. Instead, each VM uses two World Wide Port Names and two World Wide Names, with one being idle; if the VM is moved, the other port takes over, making the original one the idle one. If you run your SAN vendor's management tool inside a VM and it hasn't been updated to understand this switching, it's likely to throw alerts when the ports are switched.
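The active/idle swap described above can be pictured with a small toy model in Python. This is only a sketch of the idea, not how Hyper-V implements it: the class, its method names and the placeholder address strings are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class VirtualFcAdapter:
    """Toy model of a virtual HBA that holds two address sets (hypothetical)."""

    set_a: str
    set_b: str
    active: str = "A"  # which set is currently logged in to the fabric

    def active_address(self) -> str:
        return self.set_a if self.active == "A" else self.set_b

    def idle_address(self) -> str:
        return self.set_b if self.active == "A" else self.set_a

    def live_migrate(self) -> str:
        """The destination host uses the idle set, which then becomes active."""
        self.active = "B" if self.active == "A" else "A"
        return self.active_address()


if __name__ == "__main__":
    # Placeholder strings stand in for real World Wide Port Names.
    adapter = VirtualFcAdapter(set_a="WWPN-SET-A", set_b="WWPN-SET-B")
    print("before:", adapter.active_address())
    print("after first move:", adapter.live_migrate())
    print("after second move:", adapter.live_migrate())
```

Because the address the SAN sees alternates with every move, a management tool that expects one fixed port per VM will notice a change each time, which is why an outdated tool may raise alerts.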

Other storage improvements

Windows Server 2012 offers built-in file deduplication, but this only works for files that aren't in use, so it'll come in handy for VHD(X) libraries, where it can achieve savings of 90 percent or more because of the duplicated content across most virtual hard disks. Another cool feature is that the new Server Manager allows you to deploy roles and features directly to an offline VHD file, opening another avenue for managing your VM templates.

That was quite a bit of stuff on storage. Next time, we'll look at mobility of VMs.
