If the message was not clear enough, it is time to move away from the full install of ESX (aka ESX classic). VMware's ESXi hypervisor -- also called the vSphere Hypervisor -- is here to stay. The vSphere 4.1 release was officially the last major release that will include both hypervisors.

In the course of moving from ESX to ESXi, there are a number of changes you need to be aware of that can stump your migration, but none that cannot be overcome in my opinion. Here are my tips to make the transition easy:

1. Leverage vCenter Server for everything possible.

The core management features of ESX and ESXi are now effectively at feature parity when vCenter is used for all communication and third-party application support. Try to ensure that any dependency on host-based communication can be met with a vCenter Server connection or, better still, with an ESXi host directly. Syslog forwarding is a good example of a task aimed directly at an ESXi host; it can still be configured easily on the host itself.

2. Ensure third-party applications fully support ESXi.

There are plenty of applications that we all can use for virtualization, including backup, virtualization management, capacity planning, troubleshooting tools and more. Ensure that all vendors fully support ESXi for the products being used in the vSphere environment. This may also be a good time to look at how each of these tools supports ESXi, specifically to ensure that all of the proper VMware APIs are fully supported. These include the vStorage APIs, the vSphere Web Services, the vStorage APIs for Data Protection and more. Here is a good resource to browse the vSphere APIs to see how they can be used both by VMware technologies and by third-party applications.

3. Learn the vSphere Management Assistant.

The vMA will become an invaluable tool for troubleshooting as well as for basic administration of ESXi servers. The vMA is a virtual appliance that connects to the vCenter Server so that a number of administrative tasks can be performed on an ESXi host. Be sure to check out this video on how to set up the vMA.

4. Address security concerns now.

Many virtualization and security professionals are concerned about the lack of ability to run a software-based firewall directly on the host operating system (as can be done with ESX). If this is a requirement for your organization, the best approach is to implement physical firewalls in front of the ESXi server's vmkernel network interfaces.

5. Address other architectural issues.

If there is going to be a fundamental change in the makeup of a vSphere cluster, it may also be time to address any lingering configuration issues that have plagued the environment. While we never change our minds on how to design our virtualization clusters (or do we?), this may be a time to enumerate all of the design changes that need to be rolled in. Some frequent examples include removing local storage from ESXi hosts and supporting boot from flash media (be sure to use the supported devices and mechanisms), implementing a vNetwork Distributed Switch, re-cabling existing standard virtual switches to incorporate more separation across roles of vmkernel and guest networking interfaces, and more.

The migration to ESXi can be easy with the right tools, planning and state of mind. Be sure also to check the VMware ESXi and ESX information center for a comprehensive set of resources related to the migration to ESXi.

What tips can you share on your move to ESXi? Share your comments here.

Posted by Rick Vanover on 06/14/2011 at 12:48 PM (2 comments)

VMware has made an important feature of the latest and greatest ESXi (a.k.a. the vSphere hypervisor) -- the command-line environment or tech-support mode -- a bit complex to access. (Tech-support mode was easy to access in older versions, and I cover it in an earlier post.)

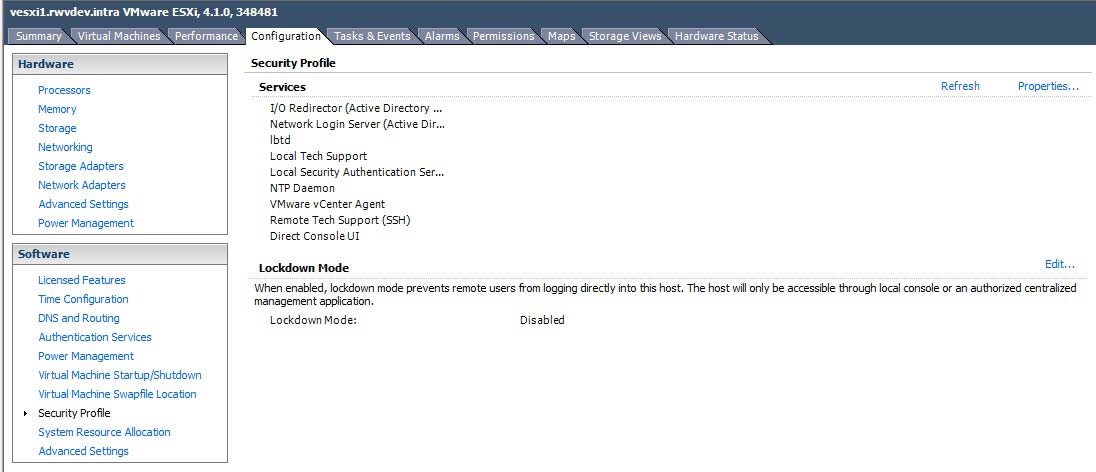

For modern versions of vSphere, tech-support mode and other network services are controlled in the Security Profile section of the vSphere Client (see Fig. 1).

Figure 1. The vSphere Client security profile allows control of critical services, including tech support mode.

Once local tech-support mode is set to run (either started one time or persistently), the command prompt can be accessed from the direct console user interface (DCUI). Within the DCUI, tech-support mode is accessed in the same manner as in previous versions, by pressing ALT+F1.

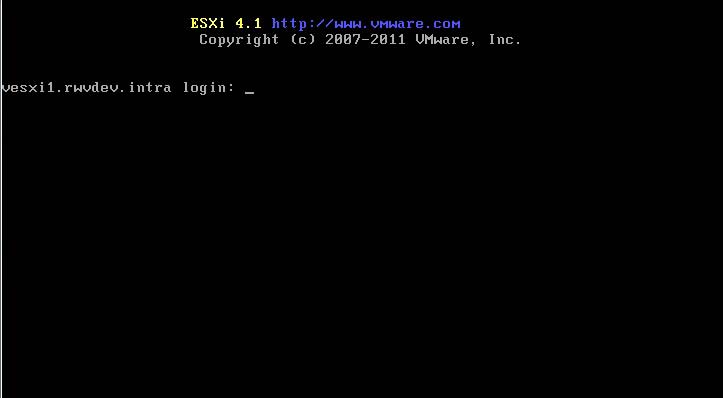

Because local tech support mode is now an official support tool for ESXi, the interface is somewhat more refined in that there is an official login screen. The tech support login screen is shown in the figure below:

Figure 2. Accessing local tech support mode is less cryptic than previous ESXi versions.

Within tech support mode, a number of command-line tasks can be performed. In my personal virtualization practice, I find myself going into tech support mode less and less. Occasionally, there are DNS issues that need to be addressed, which means reviewing the /etc/hosts file to ensure that DNS resolution is correct and that no static entries are in use. If you have been in the practice of using hosts files for resolution directly on an ESXi (or ESX, for that matter) host, now is a good time to break that habit. A better approach is to ensure that the DNS environment is entirely correct and that all zones are robust enough for the accuracy required by vSphere.
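As a rough illustration of the hosts-file review described above, here is a minimal Python sketch that lists the non-loopback entries in hosts-file text. The sample contents, host name and address are hypothetical, and the sketch assumes you run it on an admin workstation against a copy of the file rather than on the ESXi host itself.

```python
def static_host_entries(hosts_text):
    """Return (ip, names) pairs for non-loopback entries in hosts-file text."""
    entries = []
    for raw_line in hosts_text.splitlines():
        # Drop anything after a '#' comment marker, then surrounding whitespace.
        line = raw_line.split("#", 1)[0].strip()
        if not line:
            continue
        fields = line.split()
        ip, names = fields[0], fields[1:]
        # Loopback entries are expected and are not treated as static overrides.
        if ip.startswith("127.") or ip == "::1":
            continue
        if names:
            entries.append((ip, names))
    return entries


# Hypothetical file contents, for illustration only.
sample = """\
# sample hosts file
127.0.0.1     localhost.localdomain localhost
::1           localhost
192.168.1.50  esxi01.lab.local esxi01
"""

for ip, names in static_host_entries(sample):
    print(ip, ", ".join(names))
```

Every entry the sketch reports is a candidate for review; whether a given entry is intentional is still a judgment call for the administrator.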

Tech support mode is one of those things that you will need only occasionally, so give some thought to whether it should be left on persistently on an ESXi host. What strategies have you used with tech support mode on vSphere? Share your comments below.

Posted by Rick Vanover on 06/08/2011 at 12:48 PM (7 comments)

Making virtualization work for small organizations is always tough. Recently, I've been upping my Hyper-V exposure, and in the meantime I've been using direct attached storage for the virtual machines. Here are some positive factors for using DAS for virtualization:

- DAS is among the least expensive ways to add large amounts of storage to a server

- There is no storage networking to administer

- Local array controllers on modern servers are relatively powerful

- Direct-attached storage is accessed quite fast over SAS or a direct Fibre Channel connection

Before I go on about DAS, I must make it clear that every configuration has a use case in virtualization. DAS in this configuration can be a great way to make a small virtualization requirement fit into ever-shrinking budgets.

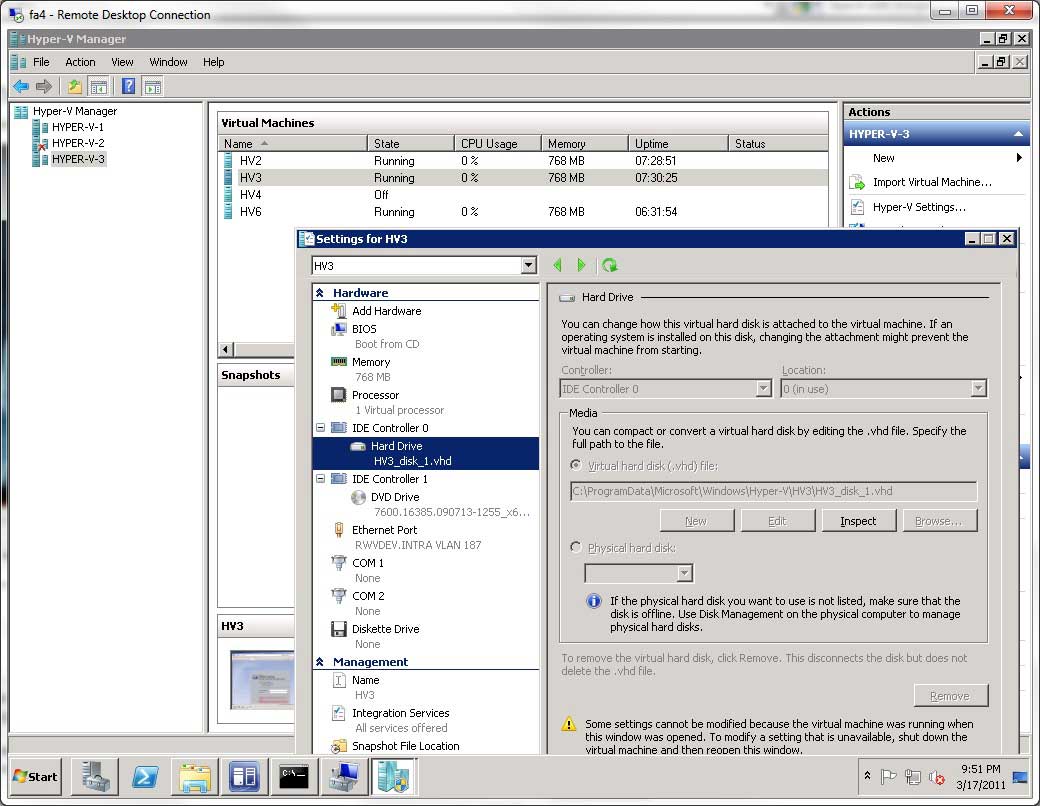

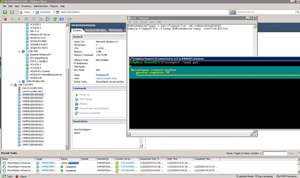

DAS can be anything from a local array controller with drives in the slots of a Hyper-V server to a drive shelf attached via a SAS interface or direct Fibre Channel (no switching). Figure 1 shows a Hyper-V server with DAS configured in Hyper-V Manager:

Figure 1. Configuring virtual machines in Hyper-V to use DAS can save costs and increase performance for small environments.

Of course there are plenty of concerns with using DAS for Hyper-V, or any virtualization platform for that matter. Failover, backups and other workload continuity issues come to mind. But for the small virtualization environment, many of those solutions come easy. Just as using DAS has the above mentioned benefits, there are downsides:

- Host maintenance is very complicated and migration is not available

- Data protection is complicated

- Expansion opportunities are limited

As with any situation, there are a number of solutions. This idea came to me in a discussion I had with someone who took up my offer to help get started with virtualization. For really small virtualization environments, a single host with DAS may be the right solution.

Have you utilized DAS for Hyper-V? Share your comments here.

Posted by Rick Vanover on 04/28/2011 at 12:48 PM (2 comments)

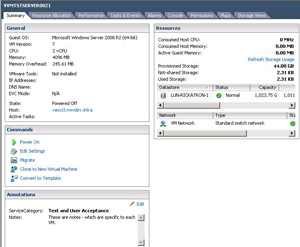

Even without a virtualization management package, vSphere administrators have long zeroed in on the attribute and annotation fields to organize virtual environments. Each virtual machine (VM) has a notes field to describe it. Notes can be as simple as when the system was built or whether the server went through a physical-to-virtual (P2V) conversion, or they can be more descriptive, as is common for virtual appliances. Fig. 1 shows examples of individual VM attribute fields and notes.

Figure 1. This virtual machine has a number of attributes defined and populated as well as the notes field providing a description of the VM.

Attributes can also be applied to hosts. Having host attributes can be very handy for something as simple as specifying the location of the ESX(i) host system. For troubleshooting vSphere environments, anything that can be organized in such a fashion that is self-documenting is a welcome step. Fig. 2 shows a host attribute applied with the rack location.

Figure 2. This attribute specifies the physical location of the ESX(i) server.

In my personal virtualization administration practice, I've found it a good idea to do a number of things up front. I'll include a revision note attribute for critical indicators, such as the revision of the template in use. While each VM can be investigated within the operating system, it can be much easier to see which VMs originated from template version 2.1.23 (see Fig. 2).

The change log can be something as unsophisticated as a text file or as complicated as a revision-controlled document. In this way, the information for each VM is quickly visible on its summary pane, and also in the view of all virtual machines, where it can be sorted quickly (see Fig. 3).

Figure 3. The vSphere Client allows sorts based on attributes, which is a quick view into the running VMs in the environment.

Regardless of the level of sophistication of the vSphere environment, simple steps with attributes and notes on hosts and VMs can give a powerful boost to the information obtained within the vSphere Client.

How do you use attributes and notes? Share your comments here.

Posted by Rick Vanover on 02/22/2011 at 12:48 PM (2 comments)

With vSphere 4.1, VMware removed the easy-to-use Windows Host Update Utility from the standard offering of ESXi. Things are made easy when VMware Update Manager is in use with vCenter, but administrators of free ESXi installations (now dubbed VMware vSphere Hypervisor) now have a harder time updating the host.

The vihostupdate Perl script (see PDF here) can perform version and hotfix updates for ESXi. But I found out while upgrading my lab that there are a few gotchas. The main catch is that certain post-update operations can only be done through the vSphere Client for the free ESXi installations. As I was updating my personal lab, I went over the commands to exit maintenance mode and reboot the host from this KB article. It turns out that none of these will work in my situation -- vCenter is not managing the ESXi host.

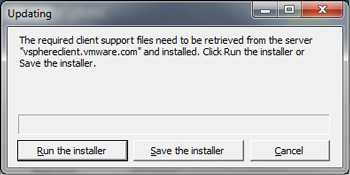

This all started when I forgot to put the new vSphere Client on my Windows system ahead of time. We've all seen the error in Fig. 1 when an old vSphere Client connects to a new ESXi server.

Figure 1. When an older vSphere Client attempts to connect to a newer ESXi server, an updated client installation is required.

This is fine enough, as we simply retrieve the new vSphere Client installation and proceed along on our merry way. Unfortunately, this was not the case for me. As it is, my lab has a firewall virtual machine that provides my Internet access. Further, the new feature with vSphere is that the new client installation file is not hosted on the ESXi Server, but online at vsphereclient.vmware.com.

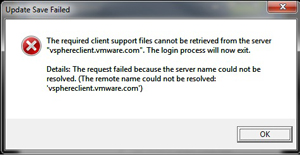

Fig. 2 shows the error message you will get if there is no Internet access to retrieve the current client.

Figure 2. The client download will fail without Internet access.

The trick is to have the newest vSphere Client readily at hand to do things like reboot the host and exit maintenance mode when updating the free ESXi hypervisor.

It's not a huge inconvenience, but it's definitely a step that will save you some time should you run into this situation where the ESXi host also provides the Internet access.

Posted by Rick Vanover on 01/27/2011 at 12:48 PM (3 comments)

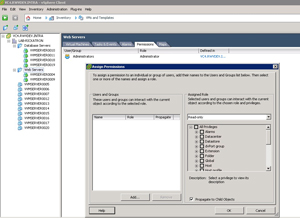

The virtual machine floppy drive is one of those things that I'd rate as somewhere in the "stupid default" configuration bucket. In my virtualization practice, I very rarely use it, and so I add it as needed. While it is not a device that is supported as a hot-add hardware component, that inconvenience can be easily accepted for the unlikely event that it will be needed. The floppy drive can be removed quite easily with PowerCLI.

To remove a floppy drive, the vSphere virtual machine needs to be powered off. This is easy enough to automate with a scheduled task in the operating system. For Windows systems, the "shutdown" command can be configured as a one-time scheduled task to get the virtual machine powered off. Once the virtual machine is powered off, the Remove-FloppyDrive Cmdlet can be utilized to remove the device.

Remove-FloppyDrive works in conjunction with Get-FloppyDrive, which retrieves the device from the virtual machine. Remove-FloppyDrive can't, by itself, remove the device from a virtual machine; the Get-FloppyDrive cmdlet needs to pass the device to it. This means a simple two-line PowerCLI script is needed to accomplish the task. Here's the script to remove the floppy drive for a virtual machine named VVMDEVSERVER0006:

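```powershell
# Retrieve the floppy device from the (powered-off) virtual machine
$VMtoRemoveFloppy = Get-FloppyDrive -VM VVMDEVSERVER0006

# Remove the device without prompting for confirmation
Remove-FloppyDrive -Floppy $VMtoRemoveFloppy -Confirm:$false
```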

This is done with the "-Confirm:$false" option to forego being prompted to confirm the task in PowerCLI. Fig. 1 shows this script being called and executed to the vCenter Server through PowerCLI.

Figure 1. The PowerCLI command reconfigures the virtual machine to remove the floppy drive.

This can be automated with PowerShell as a scheduled task, and used in conjunction with the Start-VM command to get the virtual machine back online if the tasks are all sequential in a period of scheduled downtime.

Posted by Rick Vanover on 11/22/2010 at 12:48 PM (0 comments)

In the course of an ESXi (or ESX) server's lifecycle, you may find that you need to add hardware internally to the server. Adding RAM or processors is not that big of a deal (of course you would want to run a burn-in test), but adding host bus adapters or network interface controllers comes with additional considerations.

The guiding principle is to put every controller in the same slot on each server. That way, you'll be assured that the vmhba or vmnic enumeration performs the same on each host. Here's why it's critical: If the third NIC on one host is not the same as the third NIC on another, configuration policies such as a host profile may behave unexpectedly. The same goes for storage controllers: If each vmhba is cabled to a certain storage fabric, they need to be enumerated the same.
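To make the enumeration point concrete, here is a small Python sketch that compares a device-to-slot mapping across hosts and reports any differences. The host names, slot labels and the idea of recording the mapping by hand in a dictionary are all assumptions for illustration; nothing here queries a real ESXi host.

```python
def enumeration_mismatches(hosts):
    """Compare each host's device-to-slot mapping against the first host (by name).

    Returns a list of (host, device, expected_slot, actual_slot) tuples.
    """
    names = sorted(hosts)
    reference = hosts[names[0]]
    mismatches = []
    for name in names[1:]:
        # Look at every device seen on either host, so missing devices show up too.
        for device in sorted(set(reference) | set(hosts[name])):
            expected = reference.get(device)
            actual = hosts[name].get(device)
            if expected != actual:
                mismatches.append((name, device, expected, actual))
    return mismatches


# Hypothetical, hand-recorded inventory: device name -> physical location.
inventory = {
    "esxi01": {"vmnic0": "onboard-1", "vmnic1": "onboard-2",
               "vmnic2": "slot3-port1", "vmhba1": "slot5"},
    "esxi02": {"vmnic0": "onboard-1", "vmnic1": "onboard-2",
               "vmnic2": "slot4-port1", "vmhba1": "slot5"},
}

for host, device, expected, actual in enumeration_mismatches(inventory):
    print(f"{host}: {device} is in {actual}, expected {expected}")
```

In this made-up inventory the third NIC sits in a different slot on the second host, which is exactly the kind of difference that can make a host profile behave unexpectedly.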

When I've added NICs and HBAs to hosts, I've also reinstalled ESXi. Doing so, though, has its pros and cons.

Pros:

- Ensures the complete hardware inventory is enumerated on the installation the same way.

- Cleans out any configurations that you may not want on the ESXi host.

- Is a good opportunity to update BIOS and firmware levels on the host.

- Reconfiguration is minimal with host profiles.

Cons:

- May require more reconfiguration of multipath policies, virtual switching configuration and any advanced options if host profiles are not in use.

- Additional work.

- Very low risk that the vmhba and vmnic interface enumeration is not the same as the install or the other hosts.

I've done both, but more often I've reinstalled ESXi.

What is your take on adding hardware to ESXi hosts? Reinstall or not? Share your comments here.

Posted by Rick Vanover on 11/18/2010 at 12:48 PM (3 comments)

The vSphere annotation field is one of the most versatile tools to put basic information for the virtual machine right where it is needed most. If you are like me, you may change your mind on one or more things in your environment from time to time.

Administrators can create annotations for the virtual machines, but need to be careful not to dilute the value of the annotation by creating too many values. Among the most critical attributes I like to see in the virtual machine summary is an indicator of its backup status, development or production status, or some business-specific information.

Figure 1. The annotation, in bold, is a global field for all VMs while the Notes section is per-VM.

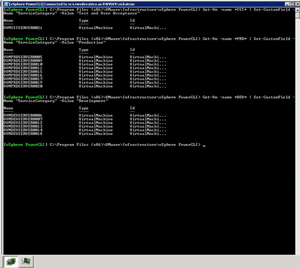

Using PowerCLI, we can make easy work of setting an annotation for all virtual machines. I'll take a relatively easy example of setting all virtual machines to have a value for the newly created ServiceCategory annotation.

For a given virtual machine inventory, let's assume that the virtual machine name indicates whether it is in production or some other state. If the string "PRD" exists in the name, it is a Production virtual machine; "TST" is Test and User Acceptance. Finally, "DEV" tells us it's a Development VM.

Using three quick one-liners, we can assign each virtual machine a value based on that logic:

```powershell
# Tag every VM whose name contains DEV, PRD or TST with the matching category
Get-VM -Name *DEV* | Set-CustomField -Name "ServiceCategory" -Value "Development"
Get-VM -Name *PRD* | Set-CustomField -Name "ServiceCategory" -Value "Production"
Get-VM -Name *TST* | Set-CustomField -Name "ServiceCategory" -Value "Test and User Acceptance"
```

Implementing this in PowerCLI is also just as quick (see Fig. 2).

Figure 2. The PowerCLI command will issue the annotation to multiple virtual machines in a quick iteration.

The Get-VM command can also take its input in other ways, such as reading a list of names from a text file, and it accepts many other parameters for selecting virtual machines.
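The naming logic behind the ServiceCategory annotation can be sanity-checked offline before anything is written to vCenter. The following Python sketch is only an illustration of that logic: the VM names other than VVMDEVSERVER0006 are made up, and the choice to leave names that match no marker, or more than one, unclassified is my own assumption.

```python
# Marker substrings and the ServiceCategory value each one maps to.
MARKERS = {
    "DEV": "Development",
    "PRD": "Production",
    "TST": "Test and User Acceptance",
}


def classify(vm_names):
    """Map each VM name to a category, or None when the name is ambiguous or unmatched."""
    result = {}
    for name in vm_names:
        upper = name.upper()  # match regardless of case
        matches = [category for marker, category in MARKERS.items() if marker in upper]
        result[name] = matches[0] if len(matches) == 1 else None
    return result


# Hypothetical inventory names for illustration.
names = ["VVMDEVSERVER0006", "WEBPRD01", "sqltst02", "FILESERVER9", "DEVPRDX"]

for name, category in classify(names).items():
    print(f"{name}: {category or 'needs manual review'}")
```

Names that come back unclassified are worth reviewing by hand, since wildcard one-liners run in sequence would either skip them or overwrite an earlier value.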

Do you deploy virtual machine annotations via PowerCLI? If so, share your comments here and some of your most frequently used fields for each virtual machine.

Posted by Rick Vanover on 11/16/2010 at 12:48 PM (1 comment)

I am not sure if I'm being increasingly brave here, but I'll say that I was inspired to weigh in with my comments on the contentious issue regarding the number of virtual machines per volume. It's good timing, too. I recently came across another great post by Scott Drummonds in his Pivot Point blog, Storage Consolidation (or: How Many VMDKs Per Volume?).

Scott probes the age-old question: how many virtual machines do we put per datastore? The catch-all answer of course is, "It depends."

One factor that I think is critical to how this question is approached and isn't entirely addressed in Scott's post is the "natural" datastore size that makes sense from the storage processor. Most storage controllers in the modular storage space have a number of options in how to determine the best size of a logical unit number (LUN) to present to the vSphere environment.

The de facto standard is often 2 TB as a common maximum, but there are times when smaller sizes make more sense. Take into account other factors such as storage tiers, hard drive sizes, RAID levels and the number of hard drives in an array, and 2 TB may not be the magic number for a datastore size. Larger storage systems can provision a LUN as a datastore across a high number of disks and can be totally abstracted from these details, making the comfortable LUN size something smaller, such as 500 GB or even less.

Scott also advises caution with a configuration command that increases the queue length for a datastore from the default value of 32. I mentioned this value in a previous post (see On the Prowl for vSphere Performance Tweaks). Like Scott, I urge caution and testing to see if it makes sense for each environment. As a simple example, if there will be a small number of datastores, this command makes perfect sense. But if the vSphere environment is highly consolidated (e.g., 60 or more virtual machines per host) across a high number of datastores (e.g., 60 or more separate datastores), the risk of the microburst phenomenon may be introduced. There is no defined threshold of virtual machines or datastores, and this example (60) isn't even that extreme anymore.

My general practice is to put 25 or fewer lightweight virtual machines on each 2 TB datastore for lower tiers of storage. These workloads are the basic applications that we all have and hate, yet they don't require much in terms of throughput or storage. I utilize all of the good stuff such as thin provisioning, and I like to always keep headroom of about 40 to 50 percent free space on the datastore.

For the larger virtual machines, I usually take the datastore-per-VM or datastore-per-few-VMs approach on higher tiers of storage. I'll still utilize thin provisioning, but with a smaller number of virtual machines per datastore the free space is kept at a minimum, as these applications usually have a more defined growth requirement.
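The lower-tier rule of thumb above implies a rough per-VM space budget, which a few lines of Python can work out. This is only back-of-the-envelope arithmetic: it treats 2 TB as 2,048 GB and splits the usable space evenly across VMs, both of which are simplifying assumptions rather than anything prescribed here.

```python
def per_vm_budget_gb(datastore_gb, vm_count, free_fraction):
    """Average consumed space available per VM after reserving free headroom."""
    if vm_count <= 0:
        raise ValueError("vm_count must be positive")
    if not 0 <= free_fraction < 1:
        raise ValueError("free_fraction must be in [0, 1)")
    usable_gb = datastore_gb * (1 - free_fraction)
    return usable_gb / vm_count


DATASTORE_GB = 2048  # assumption: 2 TB expressed as 2,048 GB
VM_COUNT = 25        # upper end of the lightweight-VM range

for headroom in (0.40, 0.50):
    budget = per_vm_budget_gb(DATASTORE_GB, VM_COUNT, headroom)
    print(f"{int(headroom * 100)}% headroom: about {budget:.0f} GB consumed per VM")
```

With 25 VMs on a 2 TB datastore, that works out to roughly 41 to 49 GB of consumed space per VM, which fits the "lightweight" description.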

I've always thought that capacity planning (and I'd lump this into a form of capacity planning) takes a certain amount of swagger and finesse. Further, it isn't always built from discrete information about application requirements, storage details and a clear roadmap for growth. But we all have that clearly spelled out for us, right?

Posted by Rick Vanover on 11/09/2010 at 12:48 PM (2 comments)

In my virtualization journey, I frequently find storage and memory to be the most contended resources. This contention is usually driven by memory growth in newer OSes and by everyone wanting more storage that performs faster. With vSphere, we can now assign eight virtual symmetric multiprocessors (vSMP) to a single virtual machine. This is available only for the Enterprise Plus offering (see last week's post), as all other editions limit a virtual machine to four vSMP.

Straight out of the box, that means a virtual machine can be configured with eight vSMP, yet in the most typical situation not all of them are used. The eight vSMP are presented as eight processors (sockets) with one core each. In the Windows world, we have to be careful. If the server operating system is the Standard edition, it is limited to four sockets. See the Windows Server 2008 edition comparison, where each edition's capabilities are highlighted. If we have a Windows Server 2008 R2 Standard edition server with eight vSMP, it will only recognize four of them within the operating system.

This means that if ESXi is installed on a host system with two sockets (with four cores each) and licensed at Enterprise Plus with a Windows Server 2008 R2 Standard edition guest virtual machine provisioned with eight vSMP, it will only see four vSMP. Flip that over, and if Windows Standard Edition is installed directly on that server without virtualization, the operating system will see all eight cores.

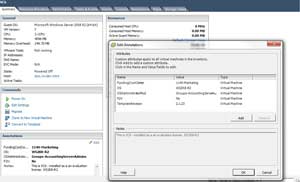

The solution is to install a custom value in the virtual machine configuration that presents the vSMP allocation as number of cores per socket. This is the cpuid.coresPerSocket value, and values such as two, four or eight are safe entries. Figure 1 shows this configured on a vSphere 4.1 virtual machine.

Figure 1. Adding a configuration value to the virtual machine will enable Windows Server Standard Editions to see more than four processors by presenting cores to the guest.
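The socket arithmetic behind cpuid.coresPerSocket can be sketched in a few lines of Python. This is a simplified model, not a description of how the hypervisor or Windows counts processors internally: it assumes the guest's only limit is a socket count (four for the Standard edition, as described above) and ignores any other licensing or logical-processor limits.

```python
def visible_vcpus(vcpus, cores_per_socket, guest_socket_limit):
    """vCPUs a socket-limited guest can use for a given cores-per-socket value."""
    if vcpus % cores_per_socket != 0:
        raise ValueError("vcpus must be a multiple of cores_per_socket")
    presented_sockets = vcpus // cores_per_socket
    usable_sockets = min(presented_sockets, guest_socket_limit)
    return usable_sockets * cores_per_socket


STANDARD_SOCKET_LIMIT = 4  # socket limit of the Standard edition discussed above

for cores in (1, 2, 4, 8):
    seen = visible_vcpus(8, cores, STANDARD_SOCKET_LIMIT)
    print(f"coresPerSocket={cores}: guest can use {seen} of 8 vCPUs")
```

With one core per socket the guest is capped at four vCPUs, while two, four or eight cores per socket keep the socket count at or under the limit so that all eight are usable.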

vSphere 4.1 now supports this feature, and it is explained in KB 1010184. Consider the licensing and support requirements of this value, as I can't make a broad recommendation but can say that it works. Duncan Epping (of course!) posted about this feature last year and explained that it was an unsupported capability of the base release of vSphere. Now that it is part of the mainstream offering for vSphere, it is cleared for use.

From a virtual machine provisioning standpoint, I'd still recommend that this would be used exclusively on an as-needed basis. Have you used this value yet? If so, share your comments here.

Posted by Rick Vanover on 11/04/2010 at 12:48 PM (7 comments)

When it comes to providing a virtualized platform for servers, we always need to consider the application. In my experience, I’ve been on each end of the spectrum in terms of designing the application and the impact it brings to the virtualized platform.

One of the options we’ve always had is to have an application that is designed with redundancy built-in. These are the best, as you can meet so many objectives without going through major circus acts on the infrastructure side. Key considerations such as scaling, disaster recovery, separating zones of failure and test environments can be built-in if the right questions are asked. This can usually come with the help of technologies such as load balancers, virtual IP addresses or DNS finesse.

The issue becomes how clear do the benefits need to be before a robust application coupled with an agile infrastructure is a no-brainer? There is usually higher infrastructure and possibly higher application costs to design an application that can cover all of the bases on its own, yet frequently this can be done without significant complexity. Complexity is important, but the benefits of a robust application can outweigh an increase in complexity.

Whether the application be a pool of web servers spread across two sites or more involved as something that includes replicated databases with a globally distributed file content namespace, the situations will always vary but there may be options to achieve absolute nirvana. If the application is able to cover all of the bases, then the virtualized platform doesn’t become irrelevant.

The agile platform can still provide data protection, as well as on-demand scale if needed. I won’t go so far as to call it self-service, but you know what I mean. I’ve been successful in taking the time to ask these questions and dispel any myths about virtual machines (like “DR is built-in”). Do you find yourself battling application designers to bake in all of the rich features that complement the agile infrastructure? Share your comments on the application battle below.

Posted by Rick Vanover on 11/02/2010 at 12:48 PM (1 comment)

vSphere has many options for administrators to organize hosts, clusters, virtual machines and all of the supporting components of the virtualized infrastructure. I was talking with a friend about how it is always a good idea to review and reorganize the virtualized infrastructure (see Virtualization Housekeeping 101) and the topic of resource pools came up. I still find people who use a DRS resource pool as an organizational unit instead of the vSphere folder.

The DRS resource pool is not an organizational unit, but a resource allocation unit. Sure, the resource pool can be created with no reservations or limits, making no perceived impact on the available resources; but the parent-to-child pool chain is modified with the pool in place. The vSphere folder can contain datacenters, hosts, clusters, virtual machines and other folders.
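To see why a resource pool is more than a container even with no reservations or limits, consider how shares divide a contended resource among siblings at each level of the hierarchy. The Python sketch below is a deliberately simplified model with hypothetical share values and capacity; it assumes every VM demands its full entitlement and ignores reservations, limits and everything else the real scheduler considers.

```python
def split_by_shares(capacity, shares):
    """Divide capacity among siblings in proportion to their share values."""
    total = sum(shares.values())
    return {name: capacity * value / total for name, value in shares.items()}


CAPACITY_MHZ = 12000  # hypothetical contended CPU capacity

# Hypothetical hierarchy: one pool and two VMs are siblings at the root.
root = split_by_shares(CAPACITY_MHZ, {"web-pool": 4000, "vm-a": 1000, "vm-b": 1000})

# Eight equally weighted VMs then divide whatever the pool received.
pool_vms = split_by_shares(root["web-pool"], {f"web{i}": 1000 for i in range(8)})

# For comparison: the same ten VMs as flat siblings with no pool in place.
flat_names = ["vm-a", "vm-b"] + [f"web{i}" for i in range(8)]
flat = split_by_shares(CAPACITY_MHZ, {name: 1000 for name in flat_names})

print("root level:", root)
print("inside pool:", pool_vms["web0"], "MHz per VM")
print("no pool:", flat["web0"], "MHz per VM")
```

In this made-up case the VMs inside the pool each end up with half the entitlement of the two VMs outside it, whereas a flat layout would treat all ten equally. A folder changes none of this arithmetic, which is why it is the safer organizational tool.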

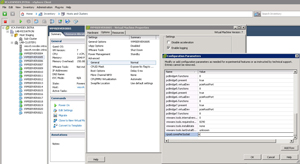

The beauty of the vSphere folder is it can have its own permissions configuration. By default, it inherits the permissions from the parent; but it can be configured for explicit permissions. Fig. 1 shows a folder of Web servers and the ability to assign permissions for that collection of virtual machines.

Figure 1. Permissions can be extended to folders to function as containers for roles or simply organizing virtual machines.

I find myself using the permissions of a folder like a group membership assignment in the Active Directory world. The role functionality of vSphere allows the administrator to delegate a lot to different categories of users of a virtual machine. This can be as simple as viewing the virtual machine console, mounting virtual media or even using the virtual power button.

Do you use the folder for organization or permissions? If so, share how you use it here.

Posted by Rick Vanover on 10/28/2010 at 12:48 PM (1 comment)