Virtualization in System Center 2016

Better orchestration, security and networking highlight some of the upgrades.

The next version of Microsoft's on-premises suite of management software is here. System Center 2016 is full of new abilities. This article will look at what's new and enhanced specifically for virtualization in the different suite applications that make up System Center.

We'll start with Virtual Machine Manager (VMM) and its support for rolling cluster upgrades, Storage Spaces Direct (S2D), Storage Replica (SR), Storage QoS, public cloud management, Shielded VMs and the Host Guardian Service (HGS). Then we'll look briefly at other parts of the suite, namely Data Protection Manager and Operations Manager, and what they bring to virtualization.

VMM Orchestration

In earlier versions of Windows Server, there was sometimes a time lag between a new feature in Hyper-V and VMM support. This wasn't conducive to adoption by customers, so this time around the two teams have worked closely together; it shows, as many new features making their debut in Windows Server 2016 are also supported in VMM.

This starts with rolling cluster upgrades. Now VMM can take the role of orchestrating the draining of VMs from one host in a Windows Server 2012 R2 cluster; evicting the node; installing Windows Server 2016 (through the built-in support for bare metal deployment in VMM); and joining it back into the cluster. VMM can automate the whole process for the cluster, including the final upgrade of the cluster functional level to 2016.
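The sequence is easier to see in a toy model. The following is a minimal sketch in Python of the drain, evict, install, rejoin loop described above, assuming a purely in-memory cluster. The `Node`, `Cluster` and `rolling_upgrade` names are hypothetical and do not correspond to any VMM or Windows Server API.

```python
from dataclasses import dataclass, field

# Hypothetical in-memory model; illustrative only, not a VMM API.
OLD_OS = "2012R2"
NEW_OS = "2016"


@dataclass
class Node:
    name: str
    os_version: str = OLD_OS
    vms: list = field(default_factory=list)


@dataclass
class Cluster:
    nodes: list
    functional_level: str = OLD_OS


def rolling_upgrade(cluster):
    """Upgrade one node at a time, then raise the cluster functional level."""
    log = []
    for node in list(cluster.nodes):  # iterate over a snapshot of the membership
        others = [n for n in cluster.nodes if n is not node]
        if not others:
            raise RuntimeError("need at least one other node to host drained VMs")
        # 1. Drain: move each VM to the currently least-loaded remaining node.
        for vm in list(node.vms):
            target = min(others, key=lambda n: len(n.vms))
            target.vms.append(vm)
            node.vms.remove(vm)
        log.append(f"drained {node.name}")
        # 2. Evict the node from the cluster.
        cluster.nodes.remove(node)
        log.append(f"evicted {node.name}")
        # 3. Reinstall the OS (bare-metal deployment in the real process).
        node.os_version = NEW_OS
        log.append(f"installed {NEW_OS} on {node.name}")
        # 4. Join the node back into the cluster.
        cluster.nodes.append(node)
        log.append(f"rejoined {node.name}")
    # 5. Only once every node runs the new OS, raise the functional level.
    if all(n.os_version == NEW_OS for n in cluster.nodes):
        cluster.functional_level = NEW_OS
        log.append("raised cluster functional level")
    return log
```

The point of the sketch is the ordering: the functional level is raised only as the last step, after every node has been upgraded, so the cluster keeps running in mixed mode while the upgrade is in progress.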

VMM 2016 also fully supports creating S2D clusters, in both disaggregated and hyper-converged implementations. Because S2D makes it more flexible to add storage, you can also use VMM to deploy additional nodes when you need more capacity or better storage I/O.

Speaking of creating clusters, what used to be a two-step process for new hardware in VMM is now a single operation. With bare-metal deployment, VMM can now deploy the OS (as a bootable VHDX) and create a new cluster, all through one wizard. This works for Hyper-V nodes and storage clusters, through either Scale-Out File Server (SOFS) or S2D.

It turns out that in the real world SOFS clusters are more frequently deployed in front of an existing SAN than as front-ends for Storage Spaces enclosures. This makes sense, as it limits the number of FC HBAs and switches needed while providing simple, SMB 3.0 file sharing for storing VHDs for VMs. Following this trend, VMM can now automatically zone the SAN and set up iSCSI connectors where required, as well as deploy and manage the SOFS cluster.

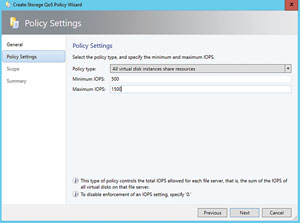

In Windows Server 2016 itself, the new policy-driven, centralized Storage QoS engine can be managed only through Windows PowerShell; VMM adds a GUI for creating and assigning policies. You can either assign policies to existing VMs, or set the policy when you create new VMs (see Figure 1).

Figure 1. Creating a Storage QoS policy.

Storage Replica (SR), the new synchronous and asynchronous data replication engine in Windows Server 2016, can be managed by VMM. You can define a primary and a recovery volume in a single cluster, in two different clusters or on stand-alone hosts.

Early technical previews didn't support Nano Server, but there's now full support for the entire lifecycle of Nano Server, either as hosts or VMs. For hosts, prepare an image with the VMM package for Hyper-V nodes or the storage package for SOFS/S2D nodes.

Next, copy the newly created VHD to the physical host, set it as a bootable disk and make sure it's joined to the same domain as the VMM server. It can now be added to management, just like any other Hyper-V server. Note that there's no support (yet) for automated bare-metal deployment of Nano Server using VMM. If you want to run Nano Server as a guest, simply create a VHD or VHDX image with the VMM agent preinstalled; it can then be used in VM templates, just like any other OS image.

Networking Enhancements

Another area where VMM shines as the orchestrator of Windows Server 2016 platform technologies is in software-defined networking (SDN). VMM Service Templates enable you to deploy and manage a multi-node Network Controller (NC), the new Software Load Balancer (SLB) and Windows Server Gateway (WSG) for tenant VPN connectivity. If you're using Azure Pack, you can provide the ability for tenants to self-service the creation of VM networks, configure Site-to-Site VPN connectivity and pick NAT options for VMs.

The NC also lets you set Access Control Lists (ACLs) on virtual networks, virtual subnets or NICs, making it easier to segregate and control traffic.

The logical switch has been a bit of a problem child in VMM. When it works, it's great: Create a centralized Hyper-V switch in VMM with all the right settings and connectivity, and push it out to all Hyper-V nodes. If something goes wrong, though, it can lead to hours of troubleshooting. In VMM 2016, if the logical switch deployment fails for any reason, VMM rolls back the changes, leaving the node's previous switch configuration untouched. Another great new option is to set up the switch on an actual Hyper-V host with all the right settings and then turn this into a logical switch in VMM for deployment to other hosts.
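The rollback behavior follows a familiar snapshot-and-restore pattern. Here is a minimal sketch in Python, assuming the node's switch settings can be modeled as a plain dictionary and the deployment as a list of steps; `deploy_logical_switch` and `DeploymentError` are hypothetical names for illustration, not how VMM implements it.

```python
import copy


class DeploymentError(Exception):
    """Raised when a step of the (simulated) switch deployment fails."""


def deploy_logical_switch(host_config, steps):
    """Apply each step to host_config; restore the original on any failure.

    host_config is a plain dict standing in for a node's switch settings,
    and steps is a list of callables that mutate it. Both are hypothetical.
    """
    snapshot = copy.deepcopy(host_config)  # remember the pre-deployment state
    try:
        for step in steps:
            step(host_config)
    except DeploymentError:
        # Roll back: put the node's earlier configuration back in place.
        host_config.clear()
        host_config.update(snapshot)
        return False
    return True
```

A deep copy is taken up front so that a step which fails halfway, after already changing nested settings, cannot leave partial changes behind.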

You can add or remove a virtual network interface from a running VM, as well as alter the amount of allocated memory for VMs with static memory (those not using Dynamic Memory).

If you're deploying Generation 2 VMs, you can now "inject" a consistent naming standard for virtual NICs in the VMs, making it easy to pick the right one when doing automated, post-deployment configuration.

Security Upgrades

To truly take advantage of Shielded VMs in Hyper-V 2016, you'll need to use VMM. This starts with creating a catalogue of hashed and digitally signed OS virtual disks. Your tenants or application administrators then create the encrypted provisioning key files (called PDK files), which contain the information a host administrator would normally enter, such as an admin account name, password, domain join settings and so on. These files are also linked to particular virtual OS disks with which they can be used.
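The link between a provisioning file and specific OS disks can be pictured as a double membership check. The sketch below, in Python, assumes a disk's identity can be represented by a SHA-256 digest. That is a simplification for illustration only: the real catalogue relies on digital signatures, and `disk_fingerprint` and `can_provision` are hypothetical helpers, not part of VMM or Hyper-V.

```python
import hashlib


def disk_fingerprint(disk_bytes):
    """Return a SHA-256 hex digest standing in for a signed disk's identity."""
    return hashlib.sha256(disk_bytes).hexdigest()


def can_provision(disk_bytes, catalogue, pdk_allowed):
    """A disk is usable only if it is in the catalogue AND the PDK names it."""
    fp = disk_fingerprint(disk_bytes)
    return fp in catalogue and fp in pdk_allowed
```

Under this model, a tampered disk fails the check because its fingerprint no longer matches, and a trusted disk fails if the tenant's provisioning file was not created for it.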

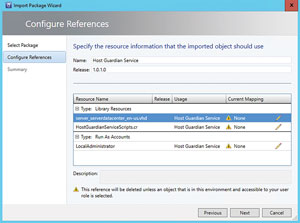

There are VMM Service Templates along with detailed guidance on how to deploy HGS servers and then add them to VMM. You can then define global settings for HGS in VMM (shown in Figure 2), which are applied to your HGS servers.

Figure 2. The Host Guardian Service template.

Finally, VMM (and Azure Pack, if you're using it) allows you to deploy Shielded VMs to authorized hosts. Note that while it's possible to shield an existing VM, this provides less security assurance because it may already have been compromised. You can also define that new VMs are automatically shielded when they're created.

Other Important Changes

Microsoft Server Application Virtualization (Server App-V) is deprecated in VMM 2016, presumably to be replaced by the far more capable PowerShell DSC or a third-party deployment engine. There's one exception: if you upgrade from VMM 2012 R2 to 2016, your existing service templates with Server App-V deployments will continue to work. You just won't be able to scale out the tier where Server App-V is used.

If you haven't been keeping up with the Update Rollups (URs) for VMM 2012 R2, you may have missed the option to add an Azure subscription to VMM (Figure 3). First added in UR6, it's now built in. It lets you start, stop or restart Infrastructure-as-a-Service VMs in Azure, as well as connect to them via RDP.

Figure 3. Microsoft Azure virtual machines in System Center Virtual Machine Manager.

Support for VMware vCenter 4.1 and 5.1 has been removed from VMM 2016, as has support for Citrix XenServer. On the other hand, VMware 5.5 and 5.8 are supported, but curiously not 6.0 (yet). The IT GRC Process Management Pack that linked VMM and Operations Manager with Service Manager has also been deprecated, along with the Service Manager Cloud Service Process Pack (CSPP). The functionality of the latter is replaced by Azure Pack.

Data Protection Manager 2016 supports backing up VMs on an S2D cluster and Shielded VMs, but doesn't support backing up Nano Servers.

Operations Manager 2016 continues its close relationship with VMM, including monitoring the fabric -- hosts, networking and VMM itself -- as well as any private clouds created in VMM.

App Controller is deprecated altogether from System Center, which is no great surprise. Azure Pack is a much more comprehensive, self-service interface for VMM, and the public Azure changes far too rapidly to be well-served by a static client interface.

A Necessary Upgrade?

I think Microsoft will have a tough job when it comes to convincing enterprises to upgrade their existing System Center deployment to 2016. With Windows Server, you have to upgrade from 2012 R2 to 2016 to gain the new features. System Center 2012/2012 R2, by contrast, has been on a quarterly update release cycle for a long time now.

That means that new features requested by the community have already been incorporated into each product. In other words, VMM 2012 R2 (or any other application in the suite) with the latest UR is very different from the original RTM version, so what you gain with an upgrade is much less than with a Windows Server upgrade. Nevertheless, if you're planning to deploy Windows Server 2016 Hyper-V, and your company uses VMM, you'll need to upgrade your VMM infrastructure.

Another important consideration is the rapidly growing capabilities of the Operations Management Suite (OMS) cloud service. It integrates with parts of System Center -- in particular Operations Manager -- but also with VMM for resource utilization forecasting.

Also worth mentioning is that Azure Stack, unlike Azure Pack, does not require System Center to build a private cloud. It'll be interesting to see how Microsoft positions the future of the on-premises System Center against (or maybe with?) OMS and Azure Stack.

About the Author

Paul Schnackenburg has been working in IT for nearly 30 years and has been teaching for over 20 years. He runs Expert IT Solutions, an IT consultancy in Australia. Paul focuses on cloud technologies such as Azure and Microsoft 365 and how to secure IT, whether in the cloud or on-premises. He's a frequent speaker at conferences and writes for several sites, including virtualizationreview.com. Find him at @paulschnack on Twitter or on his blog at TellITasITis.com.au.