
Azure Spreads its Wings at Build 2021

Paul Schnackenburg takes an IT view of the developer conference, focusing on two main themes and several releases.

Microsoft's annual developer conference, Build, took place last week. In this article I'll cover two main themes that I think will affect IT folks in general, not just developers, plus mention a few other releases to keep an eye on.

These are my main takeaways and by no means full coverage of all the announced features and services -- here's the Build 2021 Book of News if that's what you're looking for.

Azure Everywhere

The single most interesting development revealed at Build is that Azure App Service (a PaaS platform to run web sites, mobile application backends and APIs), Functions, Logic Apps, API Management and Event Grid can run on any CNCF-compliant Kubernetes cluster -- anywhere. This preview expands the reach of Azure, and in business terms it frees your internal dev teams from having to maintain different code for different environments. For example, say you're writing an app that processes health data. You'd normally run it in public Azure, but in some situations, where there's no internet connectivity, you need to run it at the edge in a hospital. No problem: The same app can run in both places with Azure App Service on top of Azure Kubernetes Service (AKS).

The fact that not only App Service comes along for the ride but also Functions (serverless, event-driven computing), API Management and Event Grid (routing events from any source to any destination) -- plus Logic Apps (automated workflows for connecting services and data, with over 400 connectors) -- provides a complete platform for any app.

And if you need a database platform, you can opt for Arc-enabled data services, which let you run SQL Managed Instance or PostgreSQL Hyperscale on any Kubernetes cluster. As I predicted in my piece on Azure Arc back in August 2020, Arc is spreading to more workloads.

Furthermore, AKS is now generally available on Azure Stack HCI (Hyper Converged Infrastructure). If you haven't followed the proliferation of children in the Stack family, it looks like this today:

- Stack Edge -- three different sizes of "Hardware as a Service" devices: Pro GPU, Pro R and Mini R, for running workloads on the edge, particularly ML and custom vision applications.

- Stack Hub -- four to 16 servers in a stamp that you buy from a small set of OEMs, which runs the same software as public Azure. You can't run your own agents on the servers. It's essentially an appliance-like experience for extending Azure to your own environments (and you can connect it to your Azure subscription for metered billing).

- Stack HCI -- two to 16 physical servers running a special version of Windows Server, Storage Spaces Direct (HDD, SSD and NVMe storage pooled across all nodes), Hyper-V, software-defined networking (SDN) and validated hardware from a range of OEMs. Here (unlike Hub) you have full access to the nodes and can run whatever monitoring and backup agents you need. Billing is again linked to an Azure subscription.

So, having a managed AKS instance on your Stack HCI clusters means that you get an AKS cluster that Microsoft keeps up to date for you, on top of a cluster that's easy to keep updated and secure. And now you can run App Service on top of that cluster -- truly providing a single surface for deploying your applications, without the hefty price tag that Azure Stack Hub brings.

Using an Arc-enabled Kubernetes cluster also allows you to use cluster connect to let users and administrators securely access the cluster from anywhere. To help you manage microservices at scale on top of AKS, Microsoft has released a preview of OSM (Open Service Mesh), an open source project that lets you capture metrics, control routing, manage access control, and secure service-to-service traffic with mutual TLS.
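
To make "mutual TLS" concrete: in ordinary TLS only the server proves its identity, while in mutual TLS the server also demands a certificate from the client. The sketch below shows that one difference using Python's standard `ssl` module. It is not how OSM is configured; a service mesh is meant to take this work off the application's hands. The function name and the file-path parameters are hypothetical.

```python
import ssl


def make_mtls_server_context(cert_file=None, key_file=None, ca_file=None):
    """Build a server-side TLS context that insists on a client certificate.

    All three file paths are hypothetical placeholders; pass real PEM files
    to get a context that can actually serve traffic.
    """
    # Purpose.CLIENT_AUTH creates a context for the server side of a connection.
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)

    # This line is what makes the TLS "mutual": handshakes from clients
    # that present no valid certificate are rejected.
    ctx.verify_mode = ssl.CERT_REQUIRED

    if cert_file:
        # The server's own identity (certificate plus private key).
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        # The CA that client certificates must chain to.
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

Doing this by hand in every microservice, plus issuing and rotating the certificates, is the chore a mesh aims to centralize.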

Encryption Everywhere

Back in May 2019 I looked at the fledgling preview of Azure Confidential Computing. As an industry we've pretty much got on top of securing data in transit using TLS (thanks in large part to Edward Snowden) and we know how to use encryption to protect data at rest (on by default in public clouds, optional but easy to implement on-premises). What we haven't managed until recently is protecting data while it's in use. Any on-premises VM admin can attach a debugger and inspect the memory of a VM, and a database administrator can look at data that they shouldn't have access to. A core concept in Confidential Computing is the use of CPUs that can encrypt portions of the memory they access, and also parts of the processor, creating an "enclave" that other processes (including the hypervisor itself) don't have access to.

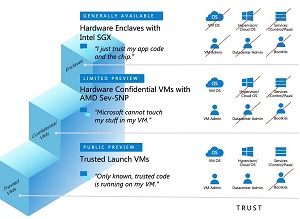

When Azure Confidential Computing was new, it was really only for companies with very specific regulatory or security needs that justified the development of custom code. At this Build we got to see how far Confidential Computing has come and how far it has spread into different services in Azure. First of all, there's a whole spectrum of options to choose from when protecting your confidential data. If you need to move a sensitive workload into Azure but you trust your VM admins, you can opt for AMD SEV-SNP-based VMs (currently in preview), where the entire VM is encrypted using keys that never leave the CPU. This requires no code changes in your applications but means you no longer need to trust the hypervisor, the control plane or Microsoft's datacenter admins -- and it removes the risk of bootkits. You still need to trust the OS in your VM and your administrators.

The Ladder of Trust in Azure Confidential Computing (source: Microsoft).

If you do need the full protection of Intel SGX-protected hardware enclaves, you don't need to trust any external entities, or even your VM OS and your own administrators. The only thing you need to trust is your app code and the chip it's running on.

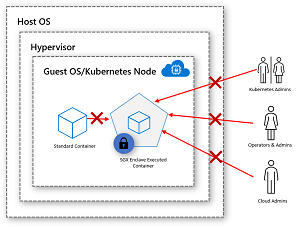

In between those ends of the spectrum are Confidential Containers, where you need to make some code changes to enable containers to run on enclave-enabled VMs. Confidential Containers mean you don't need to trust Microsoft or your guest admins.

Confidential Containers (source: Microsoft).
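
The spectrum described above can be summarized as a small lookup table. This is only a sketch of my reading of the descriptions in this article: the party labels, class and function names are illustrative and not part of any Azure API, "Microsoft" is grouped with the hypervisor and control plane as a single "cloud operator", and the code-change entry for SGX is an assumption based on the custom code that early Confidential Computing required.

```python
from dataclasses import dataclass

# Illustrative labels for the parties the article mentions (not Azure terms).
CLOUD_OPERATOR = "cloud operator (hypervisor, control plane, datacenter admins)"
GUEST_OS = "guest OS"
GUEST_ADMINS = "guest admins"


@dataclass(frozen=True)
class ConfidentialOption:
    name: str
    code_changes: str
    removed_from_trust: frozenset  # parties you no longer have to trust


OPTIONS = (
    ConfidentialOption(
        "AMD SEV-SNP confidential VM", "none",
        frozenset({CLOUD_OPERATOR}),
    ),
    ConfidentialOption(
        "Confidential containers", "some",
        frozenset({CLOUD_OPERATOR, GUEST_ADMINS}),
    ),
    ConfidentialOption(
        # Assumption: the article only implies custom code is needed here.
        "Intel SGX enclave", "custom enclave code (assumed)",
        frozenset({CLOUD_OPERATOR, GUEST_OS, GUEST_ADMINS}),
    ),
)


def options_removing(party):
    """Return the names of the options that take `party` out of the trust set."""
    return [o.name for o in OPTIONS if party in o.removed_from_trust]
```

Calling `options_removing(GUEST_OS)` returns only the SGX entry, which matches the point that the VM-level option still requires you to trust the OS in your VM.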

Azure SQL Database also extends Always Encrypted to enclaves, stopping your DBAs from being able to read sensitive data in the database, while still allowing the engine to execute queries against protected data.

And there's also a public preview of Trusted Launch VMs, which will stop bootkits and rootkits on your VMs in Azure -- it relies on a virtual TPM chip and integrates with Azure Security Center and Azure Defender. If you're deploying IoT devices, you can use Azure IoT Edge to ensure the confidentiality of your data using enclaves. And if you're running ML inferencing, the Confidential ONNX Inference Server beta relies on enclaves as well.

Finally, a new service, Azure Confidential Ledger (ACL -- not to be confused with Access Control Lists), runs entirely in enclaves and provides a tamper-proof and tamper-evident blockchain-based record-keeping service for businesses that need these features for regulatory compliance.
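
For readers wondering what "tamper-evident" means in practice, here is a minimal sketch of the general idea behind blockchain-style record keeping: each entry's hash covers the previous entry's hash, so changing any earlier record breaks every later link. This is a toy illustration, not Azure Confidential Ledger's implementation or API; the class and method names are made up for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class HashChainLedger:
    """Toy append-only log where each entry is chained to the one before it."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, body):
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, payload):
        """Serialize `payload`, chain it to the previous entry, return its hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = json.dumps(payload, sort_keys=True)
        entry_hash = self._digest(prev_hash, body)
        self.entries.append({"prev": prev_hash, "body": body, "hash": entry_hash})
        return entry_hash

    def verify(self):
        """Recompute every link; any edited entry makes this return False."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["body"]):
                return False
            prev_hash = entry["hash"]
        return True
```

A chain like this only makes tampering detectable. An attacker who controls the whole log could still recompute every hash, which is one reason a service would want the ledger to run inside enclaves.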

Other News

I found the Developer Velocity Lab (DVL) research initiative interesting, as it looks at improving developer productivity and well-being. Here's their first publication. And the collaboration between Accenture, GitHub, ThoughtWorks and Microsoft in the Green Software Foundation is noteworthy. It aims to establish green software industry standards to fulfill the Paris Climate Agreement target of 45 percent carbon reduction by 2030.

Bicep, the language layered on top of the Azure Resource Manager (ARM) language that Azure templates are written in, has progressed to version 0.4, adding a built-in linter and "resource scaffolding", which automatically adds the code for a resource you're declaring.

Azure Purview is still in preview but has now been extended to support scanning open source databases in Azure.

There were many other things revealed at Build 2021, but I think App Service outside of Azure's datacenters is going to be the biggest game changer, along with the spread of confidential computing to more and more services.