
Using Oracle Cloud, Part 3: Checking Network Performance on VMs

Tom Fenton decides to set up and test the network between a VM and the outside world, after previously detailing Oracle Cloud's "Always Free" offering and using its VMs as a web server.

In the previous article of my Oracle Cloud series, I created AMD virtual machines (VMs) that I used as a web server. This isn't the most practical use for free VMs. I imagine that Oracle envisioned corporations using its cloud services to run business applications, and many, if not all, of these applications would be multitiered and need to communicate with the outside world.

With this in mind, I decided to set up and test the network between a VM and the outside world, and in this article I will walk through the steps and results. These tests should absolutely NOT be taken as any sort of benchmark, because there are far too many variables in my setup. They do serve as an example of one way to test whether network speed is adequate for a hybrid (cloud/on-premises) environment.

Adding an Internal NIC to VMs

By default, Oracle Cloud VMs are provisioned with a single NIC whose IP address is reachable from the outside world. I thought it would be interesting to see what it takes to add a second, internal NIC to a VM, as many customers want internal-only VM-to-VM network connectivity. After logging into the Oracle Cloud portal, I took the following steps:

-

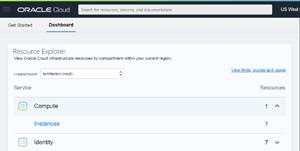

I Selected Dashboard, expanded Compute and clicked Instances.

[Click on image for larger view.]

[Click on image for larger view.]

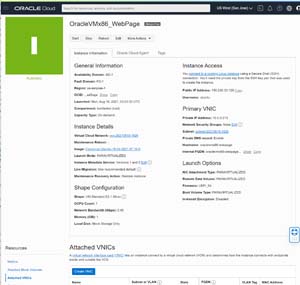

- I selected my Instance.

-

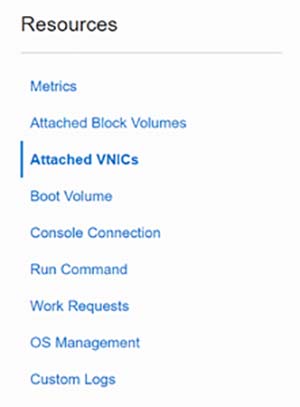

In the left Resources column, I selected Attached VNICs.

[Click on image for larger view.]

[Click on image for larger view.]

-

At the bottom of the page, I selected Create VNIC.

[Click on image for larger view.]

[Click on image for larger view.]

-

From the VNIC Information page, I gave the VNIC the name of Internal Only ARM NIC and selected the virtual cloud network and subnets that were available. Finally, I clicked Save Changes.

[Click on image for larger view.]

[Click on image for larger view.]

-

I received a message that I couldn't add a NIC to this instance type.

[Click on image for larger view.]

[Click on image for larger view.]

The limitation of a single NIC is an interesting choice by Oracle, even in its "Always Free" tier, as many individuals and companies would likely want to test network connectivity between VMs in the cloud.

iPerf3

iPerf3 is a free, widely available and popular network testing application. It uses a client/server model to generate an artificial load between two systems and report the bandwidth, latency, jitter and packet loss between them. The source code is readily available, but most Linux distributions (including Ubuntu 18.04) provide precompiled packages for it.
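To make those metrics concrete, here is a minimal Python sketch of how bandwidth, packet loss and jitter can be derived from per-packet byte counts and send/receive timestamps. The jitter function uses the RFC 3550 (RTP) smoothed interarrival estimator. To my understanding, iperf3's UDP jitter figure is based on this estimator. The function names and sample numbers are hypothetical and for illustration only; they are not taken from iperf3's code or from my tests.

```python
from typing import Sequence


def bandwidth_mbps(bytes_transferred: int, seconds: float) -> float:
    """Average throughput in megabits per second (1 Mbit = 10**6 bits)."""
    return bytes_transferred * 8 / seconds / 1e6


def loss_percent(packets_sent: int, packets_received: int) -> float:
    """Share of packets that never arrived, as a percentage."""
    if packets_sent == 0:
        return 0.0
    return (packets_sent - packets_received) / packets_sent * 100


def interarrival_jitter_ms(send_times: Sequence[float], recv_times: Sequence[float]) -> float:
    """RFC 3550-style smoothed interarrival jitter, in milliseconds.

    Transit time = receive time - send time; a fixed clock offset between
    the hosts cancels out when consecutive transit times are subtracted.
    The estimate moves 1/16 of the way toward |transit - previous transit|
    for each new packet.
    """
    jitter = 0.0
    prev_transit = None
    for sent, received in zip(send_times, recv_times):
        transit = received - sent
        if prev_transit is not None:
            jitter += (abs(transit - prev_transit) - jitter) / 16.0
        prev_transit = transit
    return jitter * 1000  # timestamps are in seconds


if __name__ == "__main__":
    # Hypothetical sample data, not measurements from this article
    print(f"{bandwidth_mbps(15_000_000, 8):.1f} Mbps")  # 15.0 Mbps
    print(f"{loss_percent(1000, 990):.1f}% loss")  # 1.0% loss
    sends = [0.000, 0.010, 0.020, 0.030]
    recvs = [0.050, 0.062, 0.070, 0.081]
    print(f"{interarrival_jitter_ms(sends, recvs):.3f} ms jitter")
```

With perfectly constant transit times the jitter estimate stays at zero. Each packet that arrives earlier or later than its predecessor pulls the estimate up gradually rather than all at once.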

Installing iperf3

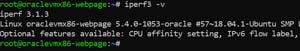

From my SSH connection to the ARM-based VM, I installed iperf3 and verified that it was working by entering the following:

- sudo apt install iperf3

- iperf3 -v

Running iperf3

Before running iperf3, I needed to allow inbound TCP and UDP traffic on its default port (5201) to the VM in Oracle Cloud. Instructions for opening ports in Oracle Cloud can be found in the first article in this series. I then installed iperf3 on a VM in my home lab to act as the client for my tests.

From my SSH connection to the Oracle VM, I opened port 5201 in the VM's own firewall, confirmed the rules, saved them so they persist across reboots and then started the iperf3 server by entering:

- sudo iptables -I INPUT 6 -m state --state NEW -p tcp --dport 5201 -j ACCEPT

- sudo iptables -I INPUT 6 -m state --state NEW -p udp --dport 5201 -j ACCEPT

- sudo iptables --list | grep 5201

- sudo netfilter-persistent save

- iperf3 -s
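Because `iperf3 -s` runs in the foreground, it can be handy to confirm from the client side that TCP port 5201 is actually reachable before starting a test. The following is a minimal Python sketch. It assumes the Oracle Cloud port rules and the VM's iptables rules have already been updated. The function name `tcp_port_open` is hypothetical. It checks TCP only, because UDP is connectionless and cannot be verified with a simple connect. The probe opens a connection and immediately closes it, which the iperf3 server may report in its output.

```python
import socket


def tcp_port_open(host: str, port: int = 5201, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        # create_connection resolves the host and completes the TCP handshake
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name-resolution errors
        return False
```

For example, calling `tcp_port_open("150.230.33.129")` from the lab client should return `True` once both the cloud-side rules and the VM's iptables rules allow port 5201, and `False` otherwise.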

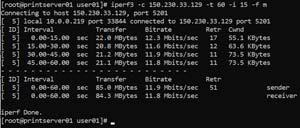

From a system in my lab in the Pacific Northwest, I started an iperf3 test by entering:

iperf3 -c 150.230.33.129 -t 60 -i 15 -f m

This ran iperf3 in client mode (-c) against the iperf3 server at 150.230.33.129. The test ran for 60 seconds (-t 60), reported results in megabits per second (-f m) and printed interim statistics every 15 seconds (-i 15).

The results showed that the bandwidth between the two systems was 15 Mbps.
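If you want to script this test, for example to repeat it on a schedule, the command line can be assembled and its output parsed in Python. This is a sketch under several assumptions. It relies on iperf3's `-J` option, which prints the report as JSON, and on the `end.sum_received.bits_per_second` field of a TCP report. The field names may differ between iperf3 versions. The helper names are hypothetical. The defaults mirror the flags described above.

```python
import json
import subprocess
from typing import List


def iperf3_client_args(server: str, seconds: int = 60, interval: int = 15,
                       fmt: str = "m", udp: bool = False,
                       json_output: bool = False) -> List[str]:
    """Build an iperf3 client command line (-c, -t, -i, -f, optional -u and -J)."""
    args = ["iperf3", "-c", server, "-t", str(seconds), "-i", str(interval), "-f", fmt]
    if udp:
        args.append("-u")
    if json_output:
        args.append("-J")  # machine-readable JSON report instead of text
    return args


def tcp_received_mbps(report: dict) -> float:
    """Receiver-side throughput from an iperf3 -J TCP report, in Mbps."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run_tcp_test(server: str) -> float:
    """Run a TCP test; requires iperf3 on PATH and a reachable iperf3 server."""
    result = subprocess.run(iperf3_client_args(server, json_output=True),
                            capture_output=True, text=True, check=True)
    return tcp_received_mbps(json.loads(result.stdout))
```

Calling `run_tcp_test("150.230.33.129")` would run the same 60-second test and return the receiver-side rate as a number. That is easier to log or compare over time than the text report.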

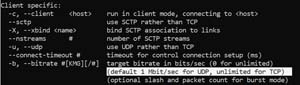

I then started a UDP test by appending -u to the end of the command:

iperf3 -c 150.230.33.129 -t 60 -i 15 -f m -u

The results showed that UDP bandwidth between the two systems was considerably lower, at 1 Mbps. To verify the results, I reran the test three more times, and each time it returned the same result.

I found out why it was running so slowly in the iperf3 man page: by default, iperf3 limits UDP bandwidth to 1 Mbps. Appending -b 0, which sets the target bitrate to zero (unlimited), overrides this.

With that change, UDP bandwidth between the two systems was significantly higher, at 12 Mbps.
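The UDP run can be scripted the same way, with the `-b 0` override included so the default 1 Mbps cap does not skew the result. This is a minimal sketch. It assumes an iperf3 `-J` UDP report exposes `bits_per_second`, `jitter_ms` and `lost_percent` under `end.sum`; these field names may vary between iperf3 versions. The helper names are hypothetical.

```python
import json
import subprocess
from typing import Dict, List


def udp_client_args(server: str, seconds: int = 60, unlimited: bool = True) -> List[str]:
    """iperf3 UDP client command with JSON output; -b 0 removes the 1 Mbps UDP default."""
    args = ["iperf3", "-c", server, "-t", str(seconds), "-u", "-J"]
    if unlimited:
        args += ["-b", "0"]
    return args


def summarize_udp(report: Dict) -> Dict[str, float]:
    """Pull bandwidth (Mbps), jitter (ms) and loss (%) out of an iperf3 -J UDP report."""
    total = report["end"]["sum"]
    return {
        "mbps": total["bits_per_second"] / 1e6,
        "jitter_ms": total["jitter_ms"],
        "lost_percent": total["lost_percent"],
    }


def run_udp_test(server: str) -> Dict[str, float]:
    """Run the UDP test; needs iperf3 installed and UDP port 5201 open on the server."""
    out = subprocess.run(udp_client_args(server), capture_output=True,
                         text=True, check=True).stdout
    return summarize_udp(json.loads(out))
```

Returning jitter and loss alongside bandwidth matters for UDP. An unlimited-rate test can report a higher bitrate while also losing more packets.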

Summary

It is helpful to measure how much network traffic can pass between two systems, and how cleanly. This information can be used for capacity planning, to see how changes affect a system or to troubleshoot issues. As noted above, you shouldn't give these tests much weight as a measure of Oracle Cloud's performance. They do, however, provide a starting point for determining whether a system, cloud-based or otherwise, is suitable for your application or needs. In my next article, I will try to create an Arm-based VM using the Always Free tier of Oracle Cloud.
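As a simple capacity-planning example, the throughput figures from these tests can be turned into an estimate of how long a bulk transfer would take. This is a back-of-the-envelope sketch with two assumptions: the measured rate is sustained for the whole transfer, and protocol overhead is ignored. The helper name is hypothetical.

```python
def transfer_minutes(gigabytes: float, mbps: float) -> float:
    """Minutes to move `gigabytes` (10**9 bytes each) at a sustained `mbps` (10**6 bits/s)."""
    bits = gigabytes * 1e9 * 8
    return bits / (mbps * 1e6) / 60


if __name__ == "__main__":
    # Rates measured in this article: 15 Mbps (TCP) and 12 Mbps (UDP with -b 0)
    for label, rate in (("TCP", 15.0), ("UDP", 12.0)):
        print(f"{label}: 1 GB takes about {transfer_minutes(1, rate):.1f} minutes at {rate} Mbps")
```

At 15 Mbps, 1 GB works out to roughly 8.9 minutes, and at 12 Mbps to roughly 11.1 minutes. Numbers like these show quickly whether a link is adequate for, say, nightly replication between on-premises and cloud systems.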

About the Author

Tom Fenton has a wealth of hands-on IT experience gained over the past 30 years in a variety of technologies, with the past 20 years focusing on virtualization and storage. He previously worked as a Technical Marketing Manager for ControlUp. He also previously worked at VMware in Staff and Senior level positions. He has also worked as a Senior Validation Engineer with The Taneja Group, where he headed the Validation Service Lab and was instrumental in starting up its vSphere Virtual Volumes practice. He's on X @vDoppler.