Azure OpenAI Service Opens Up, ChatGPT On Tap

Microsoft has opened up access to its groundbreaking OpenAI artificial intelligence (AI) tech, promising that all users will soon be able to get their hands on the much-hyped ChatGPT chatbot.

The general availability of the previously invitation-only Azure OpenAI Service was announced yesterday (Jan. 16) by company CEO Satya Nadella in a tweet, followed 16 minutes later by a companion announcement from OpenAI that said: "We've learned a lot from the ChatGPT research preview and have been making important updates based on user feedback. ChatGPT will be coming to our API and Microsoft's Azure OpenAI Service soon."

ChatGPT is a fine-tuned version of a more general machine learning language model, GPT-3.5, that has been trained and runs on Azure AI infrastructure.

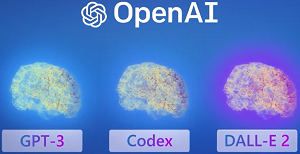

Azure OpenAI Service (source: Microsoft).

ChatGPT is a much-hyped example of the technology in the Azure OpenAI Service, which has three main components:

- GPT-3: Human-like language generation using a large model that generates content based on natural language input

- Codex: Translates natural language instructions directly into code

- DALL-E 2: A new model that generates realistic images and art from natural language descriptions

Azure OpenAI Service was unveiled back in November 2021 at the company's Ignite tech event, where Nadella said: "We are bringing the world's most powerful language model, GPT-3, to Power Platform. If you can describe what you want to do in natural language, GPT-3 will generate a list of the most relevant formulas for you to choose from. The code writes itself."

The service provides large, pretrained AI models to unlock new scenarios, along with the ability to create custom models through fine-tuning with custom data and hyperparameters.

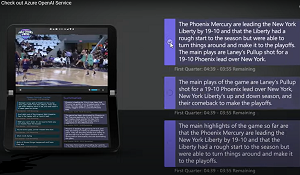

Example App (source: Microsoft).

The infusion of OpenAI tech into Microsoft services comes after an investment of about $1 billion into partner company OpenAI, with recent reports speculating on even more investment, possibly to the tune of $10 billion.

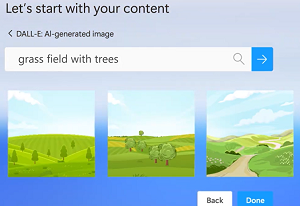

Along with bolstering the low-code Power Platform, Microsoft earlier this month was reported to be integrating the chatbot into its Bing search engine to provide more human-like answers to user questions. Also benefitting from the investment is GitHub Copilot, a coding assistant -- or AI "pair programmer" -- that helps developers write better code, coming from the Microsoft-owned open source code repository/development platform. Yet another service getting an AI boost is Microsoft Designer, another product with a waitlist that helps creators use natural language prompts to build content like images and graphics.

[Click on image for larger view.] Generating Content (source: Microsoft).

[Click on image for larger view.] Generating Content (source: Microsoft).

"We debuted Azure OpenAI Service in November 2021 to enable customers to tap into the power of large-scale generative AI models with the enterprise promises customers have come to expect from our Azure cloud and computing infrastructure -- security, reliability, compliance, data privacy, and built-in Responsible AI capabilities," Microsoft said in a Jan. 16 blog post. "Since then, one of the most exciting things we've seen is the breadth of use cases Azure OpenAI Service has enabled our customers -- from generating content that helps better match shoppers with the right purchases to summarizing customer service tickets, freeing up time for employees to focus on more critical tasks."

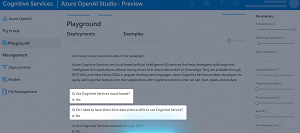

Azure OpenAI Studio (source: Microsoft).

Organizations wanting to evaluate the service can get started in the Azure OpenAI Studio, which lets users experiment with prompts before actually building an app. They still need to apply to use the service, which remains in preview status, and do some other Azure housekeeping first. The application process seems to work with corporate accounts only, as email addresses from popular services associated with personal accounts aren't allowed.

Along with the nuts-and-bolts machine learning functionality of the service, Microsoft touts responsible usage and enterprise security, as early-stage AI tech has been put to nefarious use by bad actors and comes with various security implications.

"As an industry leader, we recognize that any innovation in AI must be done responsibly," the company said. "This becomes even more important with powerful, new technologies like generative models. We have taken an iterative approach to large models, working closely with our partner OpenAI and our customers to carefully assess use cases, learn, and address potential risks. Additionally, we've implemented our own guardrails for Azure OpenAI Service that align with our Responsible AI principles. As part of our Limited Access Framework, developers are required to apply for access, describing their intended use case or application before they are given access to the service. Content filters uniquely designed to catch abusive, hateful, and offensive content constantly monitor the input provided to the service as well as the generated content. In the event of a confirmed policy violation, we may ask the developer to take immediate action to prevent further abuse."
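The two-sided filtering Microsoft describes, where both the input and the generated content are checked, can be pictured with a minimal Python sketch. Everything in it is a hypothetical stand-in rather than Microsoft's implementation: the `BLOCKED_TERMS` set, the `is_flagged` check, the `guarded_generate` wrapper and the `echo_model` function are invented for illustration, and a real filter would rely on trained classifiers rather than a word list.

```python
from typing import Callable

# Hypothetical placeholder term; a real filter would use trained classifiers.
BLOCKED_TERMS = {"blockedword"}


def is_flagged(text: str) -> bool:
    """Return True if any word in the text matches a placeholder blocked term."""
    words = text.lower().split()
    return any(word.strip(".,!?") in BLOCKED_TERMS for word in words)


def guarded_generate(prompt: str, generate: Callable[[str], str]) -> str:
    """Check the prompt, call the model, then check the generated text."""
    if is_flagged(prompt):
        return "[input rejected by content filter]"
    completion = generate(prompt)
    if is_flagged(completion):
        return "[output withheld by content filter]"
    return completion


def echo_model(prompt: str) -> str:
    """Stand-in for a model call; it simply echoes the prompt."""
    return f"Summary: {prompt}"


if __name__ == "__main__":
    print(guarded_generate("Ticket about a late delivery", echo_model))
    print(guarded_generate("this has blockedword in it", echo_model))
```

The point of the sketch is only the ordering: the prompt is screened before the model runs, and the completion is screened before anything is returned to the caller.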

About the Author

David Ramel is an editor and writer at Converge 360.