News

Azure OpenAI Service Gets ChatGPT

Microsoft today announced that ChatGPT is available in the Azure OpenAI Service.

The generative AI chatbot, based on an advanced large language model (LLM), will begin usage-based billing on March 13 at $0.002 per 1,000 tokens, but only for Microsoft-managed customers and partners who have been vetted and granted access to the cloud service.
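To put that rate in perspective, here is a rough Python sketch of the arithmetic. It assumes billing scales linearly with token count and that all tokens are charged at the same quoted rate. Neither assumption is confirmed in the announcement, and the `estimate_cost` helper is hypothetical, not part of any Microsoft or OpenAI SDK.

```python
from decimal import Decimal

# Rate quoted in the announcement: $0.002 per 1,000 tokens.
PRICE_PER_1K_TOKENS = Decimal("0.002")


def estimate_cost(total_tokens: int) -> Decimal:
    """Estimate the charge in US dollars for a token count.

    Assumption: cost is strictly linear in tokens, with no minimums,
    tiers, or rounding rules (the article does not specify any).
    """
    if total_tokens < 0:
        raise ValueError("total_tokens must be non-negative")
    return PRICE_PER_1K_TOKENS * Decimal(total_tokens) / Decimal(1000)


if __name__ == "__main__":
    # Print estimates for a few illustrative workload sizes.
    for tokens in (1_000, 250_000, 1_000_000):
        print(f"{tokens:>9,} tokens -> ${estimate_cost(tokens):.3f}")
```

Under those assumptions, a workload of one million tokens would come to about $2.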

Availability was announced by Microsoft AI exec Eric Boyd, who said: "Since ChatGPT was introduced late last year, we've seen a variety of scenarios it can be used for, such as summarizing content, generating suggested email copy, and even helping with software programming questions. Now with ChatGPT in preview in Azure OpenAI Service, developers can integrate custom AI-powered experiences directly into their own applications, including enhancing existing bots to handle unexpected questions, recapping call center conversations to enable faster customer support resolutions, creating new ad copy with personalized offers, automating claims processing, and more."

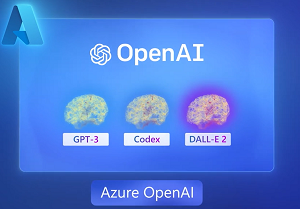

Azure OpenAI (source: Microsoft).

As we reported earlier, Azure OpenAI Service became generally available in January. Approved organizations can use advanced AI models from Microsoft partner OpenAI, such as Codex (a coding-optimized LLM), DALL·E 2 (for generating images) and GPT-3.5, a fine-tuned version of which is used by ChatGPT.

As for the differences between Azure OpenAI Service and the general OpenAI offering, Microsoft said the cloud service comes with enterprise features such as security, noting that the APIs are being co-developed by the Azure team and OpenAI.

About the Author

David Ramel is an editor and writer at Converge 360.