If you have not had a chance to play in the public cloud yet, I invite you to give it a test. To do that, point your browser to www.windowsazure.com and sign up for a free 90-day account. With cloud fever going into full force this month, especially after the VMware-Amazon "extravaganza" and the rumored VMware public cloud offering, it is clear that what I've been saying on this blog for a few years now is beginning to come true: the public cloud will take away workloads from enterprise data centers, and for good reason.

That being said, Windows Azure provides a good toolset for testing what a workload would look like in the public cloud. For those who have built a private cloud around Microsoft System Center and want to get their feet wet with hybrid cloud, I'll show you how to connect Microsoft System Center App Controller to a Windows Azure subscription and manage both your private, on-premises cloud and your public cloud. I'll also show you how to move workloads between the two, thereby realizing the hybrid cloud.

You need a few things before you can enable a hybrid cloud:

- A Windows Azure subscription

- A connection between the Windows Azure subscription and the App Controller infrastructure, which requires an SSL certificate exported as both .CER and .PFX: the .CER is uploaded to Windows Azure and the .PFX is used to authenticate your App Controller server

- A storage repository on Windows Azure, which allows you to upload VMs, ISOs and any other resources you'll need

Once you have signed up for a Windows Azure subscription, the first step is to upload your App Controller SSL certificate to Azure. Now remember, you will need to export two files for your certificate: the .CER and the .PFX that includes the private key. Once you have both files, follow these steps to connect Azure with App Controller:

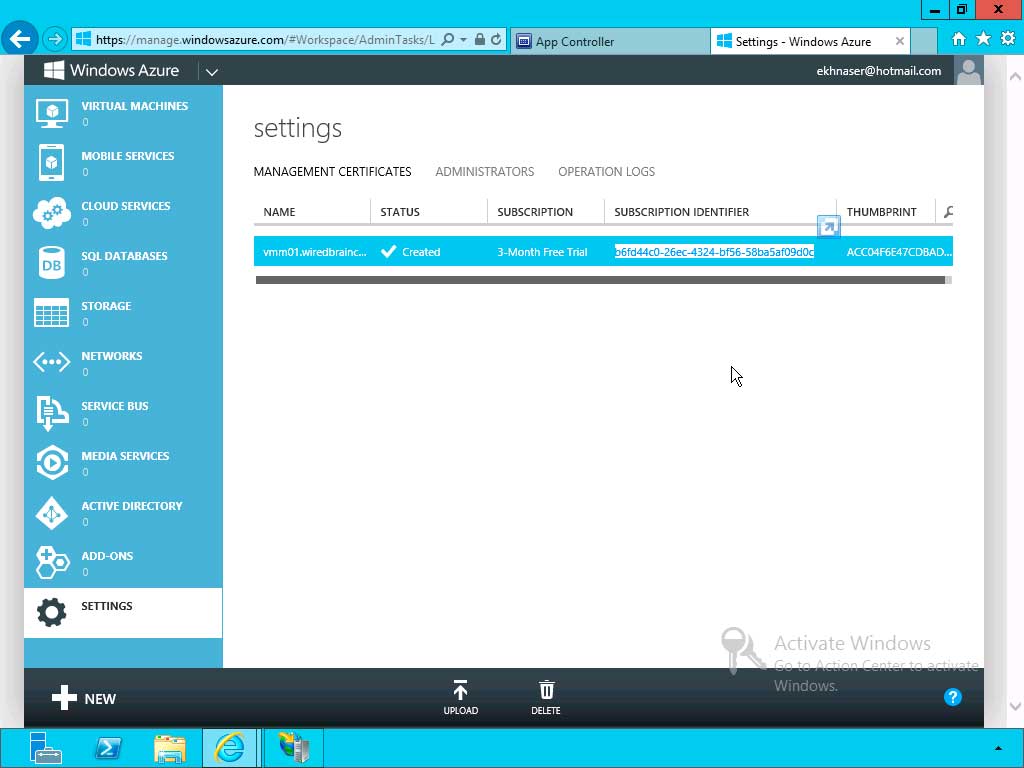

- From within the Azure dashboard, in the left pane, scroll down all the way to Settings and then in the right pane select certificates and click on upload management certificate.

- At this stage, upload the certificate with the .CER extension.

- Once uploaded, your Azure subscription ID will be generated and you can find it in a location similar to what's shown in Fig. 1. Copy the string to the clipboard because you will need it in a later step.

Figure 1. Generating an Azure subscription ID.

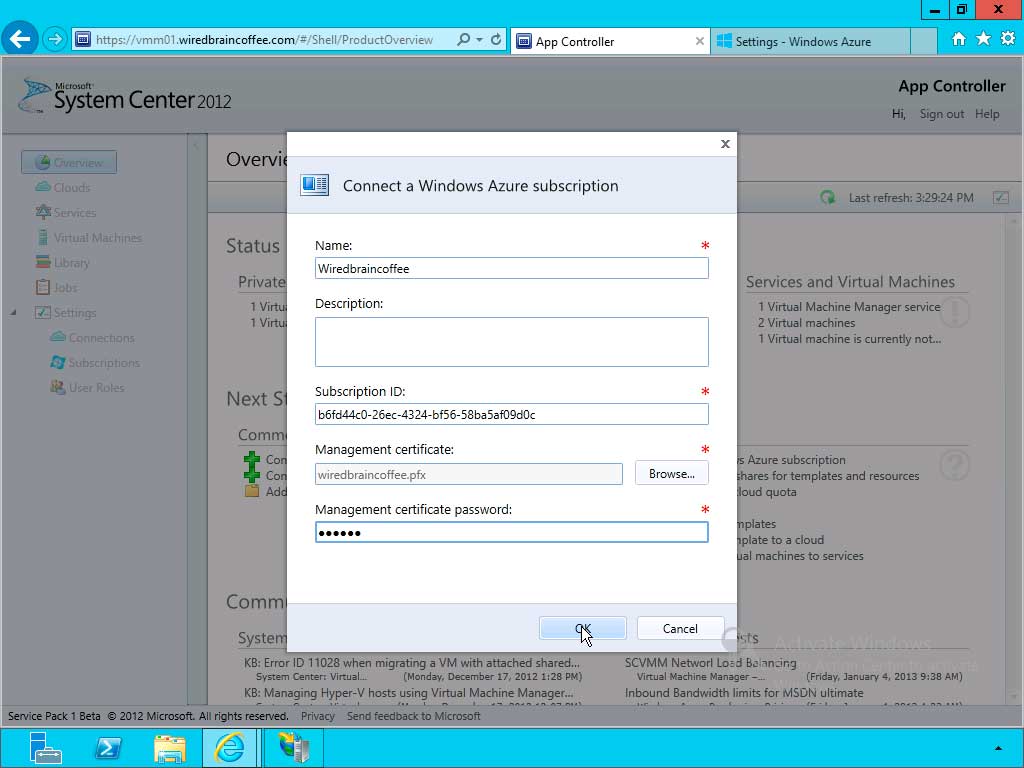

- Switch to your App Controller server, make sure you are connected to your dashboard, and click on Connect a Windows Azure subscription.

- You will get a dialog box similar to Fig. 2; give it a name and in the Subscription ID field paste the string of numbers you copied from step 3.

Figure 2. Almost done connecting Azure and App Controller.

- In the Management certificate field, upload the certificate with the .PFX extension and enter the appropriate password.

When you click OK, you'll create the connection between App Controller and Azure. The App Controller dashboard should now show you one active subscription with Azure.
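Before pasting values into the dialog, a quick sanity check can catch the most common slips. The following is only a minimal sketch, not part of App Controller or Azure: it assumes the subscription ID is the hyphenated GUID-style string that Azure displays, and the function names and file paths are hypothetical.

```python
import uuid
from pathlib import Path


def is_valid_subscription_id(value: str) -> bool:
    """Return True if value is a GUID in the hyphenated 8-4-4-4-12 form (assumed format)."""
    candidate = value.strip()
    try:
        parsed = uuid.UUID(candidate)
    except ValueError:
        return False
    # uuid.UUID accepts braces and missing hyphens; insist on the plain hyphenated form.
    return str(parsed) == candidate.lower()


def check_certificate_pair(cer_path: str, pfx_path: str) -> list:
    """Return a list of problems found with the two exported certificate files."""
    problems = []
    for label, raw, suffix in (("public", cer_path, ".cer"), ("private", pfx_path, ".pfx")):
        path = Path(raw)
        if path.suffix.lower() != suffix:
            problems.append(f"{label} certificate should end in {suffix}: {path.name}")
        elif not path.is_file():
            problems.append(f"{label} certificate not found: {path}")
    return problems
```

An empty list from `check_certificate_pair` only means the files exist with the expected extensions; it does not prove that the .PFX actually contains the private key.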

Even with App Controller connected to Azure, you cannot yet copy resources to Azure because you don't have a storage repository to move them into. You can use a storage repository or a library of resources that Microsoft makes available by default, but here's how to create your own storage repository:

- From within the App Controller Dashboard, in the left pane, click on Library.

- On the right, expand Windows Azure and select your cloud.

- Click on Create storage account, give it a name and specify the region (the U.S. in my case) in which you want this storage repository hosted. The idea is to create the storage repository as close as possible to where your private cloud infrastructure resides, which increases upload speeds and makes syncing these files easier. Click OK.

You now have a storage repository which you can move resources into from the same pane of glass that you are managing your private cloud from.
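Two details of the storage account step lend themselves to a small check. Azure storage account names must be 3 to 24 characters long and may contain only lowercase letters and digits, and the "closest region" advice can be approximated by picking the region with the lowest measured round-trip time. This is a sketch under those assumptions; the region labels and latency figures are made up for illustration.

```python
import re

# Azure storage account names: 3-24 characters, lowercase letters and digits only.
_STORAGE_NAME = re.compile(r"[a-z0-9]{3,24}")


def is_valid_storage_account_name(name: str) -> bool:
    """Return True if name satisfies the storage account naming rule."""
    return _STORAGE_NAME.fullmatch(name) is not None


def closest_region(latencies_ms: dict) -> str:
    """Pick the region with the lowest measured latency (measurements supplied by you)."""
    if not latencies_ms:
        raise ValueError("no latency measurements supplied")
    return min(latencies_ms, key=latencies_ms.get)


if __name__ == "__main__":
    print(is_valid_storage_account_name("privatecloudlib01"))
    # Hypothetical measurements taken from the private cloud's location.
    print(closest_region({"East US": 40.0, "West US": 85.0}))
```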

Is your company considering services like IaaS from public cloud providers? If so, I am really interested in which public cloud providers you are going with, why, and what type of workloads you've determined to be most suitable for these clouds. I am also interested to know if you plan on using these public IaaS clouds for burstability; please share in the comments section here.

Posted by Elias Khnaser on 03/11/2013 at 1:34 PM (2 comments)

I have been very pleased with the strategy, execution and the road map that Citrix has developed around Enterprise Mobility. With the announcement of XenMobile MDM and the Mobile Solutions bundle, I can very easily say that the Citrix solution is the most complete and feature-rich offering on the market today.

XenMobile MDM is simply a name change for Zenprise, which Citrix acquired a few months earlier. I expected Citrix to simply change the "Z" to "X" and keep the name, but I guess Citrix marketing did not find that as amusing. That is not the only change that occurred: A new version of "Zenprise" also accompanies this release, and XenMobile MDM now brings it to version 8.0.1.

Many customers and colleagues have asked me why Citrix acquired an MDM provider -- what are the value-adds, and isn't the world moving towards MAM anyway? To answer, we have to make a clear distinction between the use cases. I agree that for BYOD initiatives, MAM is a better, cleaner way of doing things and that MDM is not the ideal solution.

That being said, there are plenty of use cases where MDM is the only solution that makes sense, and I will give you real-world examples. Have you heard of the "Belly" card? It is a customer recognition and rewards program from a company HQ'ed in Chicago that offers merchants a locked-down iPad for display in their place of business. Customers can come in and scan their mobile phones on the iPad provided, and after a certain number of check-ins they are offered a reward for their loyalty. In this case, Belly would have very little use for MAM; it needs an MDM solution to manage the thousands of iPads it has deployed.

Another example: United Airlines and American Airlines allow customers to use mobile devices in the cabin to purchase goods in-flight. Obviously, the airlines don't want the flight attendants to use their own devices for this, so MDM shines again here.

Finally, what about financial institutions that want to continue to issue corporate-managed devices of different flavors? It'd be for security reasons, obviously. In this case, MDM shines.

When I see bloggers and analysts disqualify MDM, I know they are not thinking beyond BYOD to the business use cases built around an application that a company issues on a mobile device.

Did Citrix strike gold with its acquisition of Zenprise? I will say this much: It was one of the best acquisitions the company has ever made. The natural follow-up question is, what about CloudGateway? And my answer is, it is the glue that holds everything together and is the most important product in the Citrix solution today. Everything will go through CloudGateway moving forward, and at version 2.5 it has the following features:

- Enterprise app store with identity management capabilities for a single sign-on like experience

- Windows Applications and Desktops through XenApp and XenDesktop

- Mobile applications integration, provisioning, etc.

- SaaS applications integration, provisioning, etc.

- Integration with Citrix ShareFile for enterprise Dropbox functionality

CloudGateway also has a connector for Citrix Podio, and here I'll be critical of Citrix the same way I'm critical of VMware for not integrating SocialCast. Why Citrix doesn't make Podio the workspace from which users initiate all their activities is a mystery to me, so I invite Citrix to consider Podio the workspace of the future, with integration points for GoToMeeting and ShareFile and tabs for Mobile, Windows, SaaS and other resources.

The XenMobile MDM and Mobile Solutions Bundle suite also introduce a sandboxed e-mail and Web browser capability. If you have been following my blogs, you probably know that one of the biggest security challenges for mobile devices is the native e-mail and Web browsers. It's hard or impossible in some cases to wrap policies around these two applications, and any solution that does not provide an alternative sandboxed option is not complete.

In the final analysis, I am very happy with the progress Citrix has been making on the road to Enterprise Mobility Management, and many companies are already integrating their NAC solutions with XenMobile, Cisco ISE being one and ForeScout being another. My one and only concern is that Citrix might price itself out of the market -- Citrix tends to stick a hefty price tag on these solutions, and I sincerely hope that this time they are priced to win, because the technology is definitely capable. Your thoughts?

Posted by Elias Khnaser on 03/04/2013 at 1:35 PM (8 comments)

Did you know that with Windows Server 2012 you can now back up Hyper-V VMs with very little effort and very little configuration? Now, I'm not recommending that you throw out your existing enterprise backup system and standardize on Windows Server Backup in 2012, especially if you are using products like System Center Data Protection Manager (which is fantastic for backup) or Veeam or others. But in a pinch, Windows Server 2012's backup is quite handy.

Before you can use Windows Server Backup, you must add that feature from Server Manager. To do that, launch the Roles and Features wizard and select the Windows Server Backup feature and follow the wizard prompts.

Once installed, you can then launch it from within Administrative Tools | Windows Server Backup. Now, the cool thing about Windows Server Backup is the ability, when configured, to back up to cloud storage. You can, for example, integrate with Windows Azure for online backups. For the purposes of our discussion, we will focus on local backups and in a later blog I will cover backing up to Azure (before my Azure trial account expires).

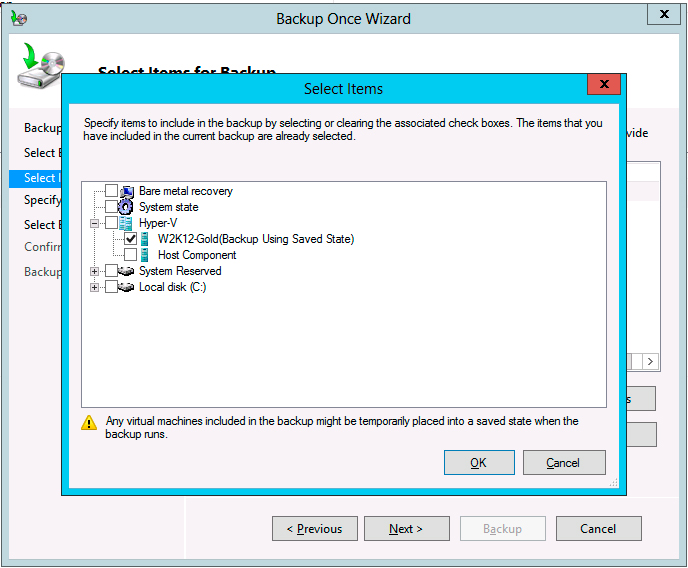

From within the Windows Server Backup console, right-click Local Backup. You will be presented with several options for backup, including scheduled backups and Backup Once (see Fig. 1).

Figure 1. Virtual backups done right.

The latter is what we will be using, as it is a somewhat streamlined process aimed at allowing you to back up rapidly. Follow these steps:

- The first screen asks whether you want to use Scheduled backup options or Different options. Select Different options; this is necessary since we are not using any pre-configured options, which would be the case with scheduled backups. As a result, we have to specify a few things. Click Next to continue.

- Next up you have the option of selecting a full server backup or a custom backup in which you can specify volumes and files.

- Select Custom and click Next.

- At this point, the wizard asks you to specify what you want to back up. Click on Add Items, expand Hyper-V, select the VM you wish to back up, and click OK.

Follow the rest of the wizard to finish the backup process. You will be prompted to select a destination for backing up your VM. Confirm your backup settings and finally execute the backup.
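The same one-off backup can also be started from the command line with `wbadmin`, the command-line counterpart to Windows Server Backup. The sketch below only builds the command and, optionally, runs it. It assumes the `-hyperv` switch that `wbadmin start backup` offers on Windows Server 2012; the VM name and target drive are hypothetical, and VM names containing spaces may need extra quoting, so test it before relying on it.

```python
import subprocess


def build_backup_command(vm_names, target):
    """Build a wbadmin command line for a one-off backup of the named Hyper-V VMs."""
    if not vm_names:
        raise ValueError("at least one VM name is required")
    return [
        "wbadmin", "start", "backup",
        f"-backupTarget:{target}",        # volume or network share that receives the backup
        "-hyperv:" + ",".join(vm_names),  # comma-separated list of VMs to include
        "-quiet",                         # do not prompt for confirmation
    ]


def run_backup(vm_names, target):
    """Run the backup; needs an elevated prompt on the Hyper-V host itself."""
    return subprocess.run(build_backup_command(vm_names, target), check=True)


if __name__ == "__main__":
    # Hypothetical VM name and target drive; print the command instead of running it.
    print(" ".join(build_backup_command(["web01"], "E:")))
```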

Backing up VMs with Windows Server Backup really is not difficult at all and you have probably done it a million times. I did want to draw your attention to this capability, as I find it useful when I need to do a quick backup of a single VM.

Posted by Elias Khnaser on 02/27/2013 at 1:38 PM (4 comments)

VMware and Citrix simultaneously launched their enterprise mobility management suites last week. If you follow this blog, you know that I have been singing the tune of an end-user computing strategy for a while now, one that includes physical and virtual desktop management, MDM/MAM/MIM and collaboration. VMware and Citrix are both coming very close to realizing this strategy, except that both insist on ignoring collaboration for some reason.

Today, we are going to tackle what the VMware suite offers, and we will revisit the Citrix suite some time later. It is no big secret that I am an adamant believer in VMware’s vision, its products and its impressive, consistent ability to execute on that vision. That being said, I am a bit disappointed in this release of Horizon. Let’s take a look!

Horizon suite is comprised of three main components: Horizon View, Horizon Mirage and Horizon Workspace. The first thing you may have noticed is the name change for these products. Quite frankly, the renaming is a good move to unify the products under the Horizon brand. Horizon View is now at version 5.2 with some really cool new features:

- HTML 5 Support: This has been a long time in the making since we saw AppBlast at VMworld a few years ago. You will note that the word “App” has disappeared and VMware now simply uses Blast to refer to the launching of Windows desktops using any supported HTML 5 browser. While Blast is a welcome feature it is very limited in that it has no multimedia capabilities, no local resources redirect, no ThinPrint etc. -- it is simply a Windows desktop. Helpful for when you are in a jam and need to get something done, but most definitely not what you can use as an alternative to PCoIP.

- NVIDIA hardware-accelerated 3D graphics support: More accurately, it's the NVIDIA Virtual Graphics Platform, which will allow remoting of 3D applications.

- Project AppShift: The gesture-oriented interface is aimed at improving and simplifying the Windows user experience with tablet devices.

- Support for Microsoft Lync.

As far as Horizon Mirage is concerned, I am truly disappointed that Mirage has not been integrated with View yet. This has really dragged considering how long it has been since VMware acquired Wanova, but I remain hopeful that integration will happen soon as integration is an imperative requirement for the suite.

In this release of Horizon Mirage, integration with ThinApp is now supported. But the more interesting feature is that Horizon Mirage now supports a multi-layered approach. Prior to this release it supported only three layers; it can now isolate applications in their own dedicated layers. This is definitely a very cool and welcome feature, and it is very similar to the Unidesk approach, except that Unidesk does it for VDI whereas Mirage does it for physical machines (it is capable of doing it for VDI as well, but that is not supported). Finally, Horizon Mirage now also integrates with VMware Fusion to extend Windows VMs on Apple Macs. I don’t understand how it was able to integrate with Fusion and deliver Windows VMs but can’t integrate with View, but oh well.

To aggregate and tie all these pieces together, VMware introduced Horizon Workspace, a self-service end-user portal for consuming enterprise mobility services. VMware organized Horizon Workspace into three tabs:

- Files is the integration of the much-awaited “Project Octopus,” VMware’s enterprise Dropbox-like tool for file syncing. This is a great addition to the suite and VMware has done a really good job integrating it. You can whitelist which applications can open files from Horizon Data, a very welcome feature for enterprises. The only drawback is that as of this release, it does not integrate with the existing file storage systems that are present in all enterprises. VMware has promised it will not be long before that is a supported capability.

- Applications is where you aggregate your SaaS, mobile and Windows applications so your end users have a one-stop shop to request and consume these applications. This is obviously where VMware is also implementing its Mobile Application Management platform, which at this point is also very limited to simply presenting these applications. We were also promised that a quick post-release will add significant functionality, but more on that later.

- Computers is where Horizon stores all the Horizon View Windows VDI instances that a user has access to. When you click on a View desktop, your connection will be initiated using the VMware View client which remains a separate application. If no client is detected, your desktop is launched using the HTML 5 client within a browser.

Single sign-on is very cool in that you only need to sign on to Workspace once to get access to all the resources that are presented to you.

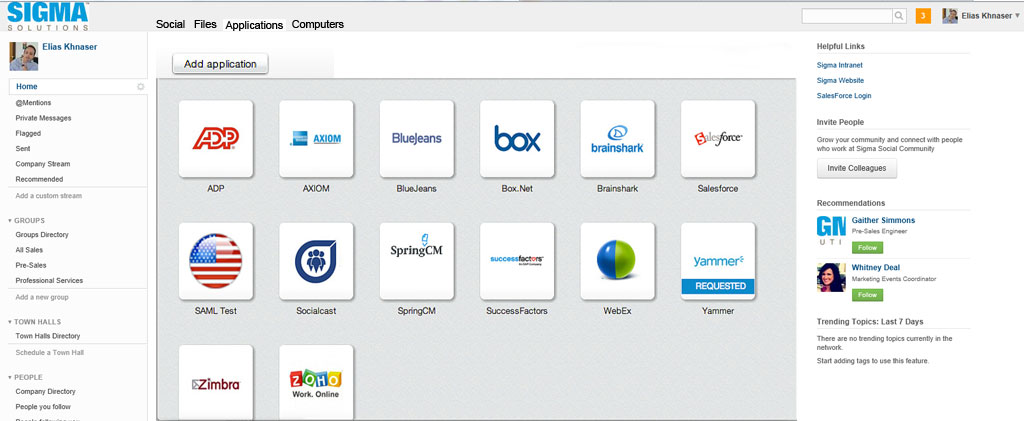

Now what really annoyed me is lack of integration with SocialCast, which would have been the better “Workspace.” Why not add the tabs to SocialCast? You would end up with a Social tab, a Files tab, an Applications tab and a Computers tab. Moreover, why not integrate SocialCast with Horizon Data so that I can insert and collaborate on a file within SocialCast? And why not even add a link to launch a WebEx meeting? VMware could have also very easily integrated the functionality of the SocialCast mobile application into the Horizon app and again created a tab or icon for social where all things SocialCast could have lived.

Figure 1. Socialcast, reimagined in my world.

Horizon Suite puts VMware well on its way to executing on its vision for enterprise mobility management, but VMware needs to reinforce the product with acquisitions. I think VMware will exhaust its resources trying to create a mobile virtualization platform to deliver a VM to Android devices while it pursues a different strategy for iOS devices in the form of application wrapping. All the while, we still have to deal with mobile Windows apps and even BlackBerry at some point, and who knows what else? The inconsistent approach to these platforms is a distraction. Moreover, I believe that VMware’s bet that MDM will not be relevant will be very short-lived, and it will realize very quickly that MDM is necessary for an end-to-end enterprise mobility strategy. All that being said, I think VMware should acquire an MDM company that also has a Mobile Application Management strategy. This would significantly reinforce the platform and unify the vision for iOS, Android and other platforms. If VMware still thinks that the Mobile Virtualization Platform is a value-add, it can make it a feature, but at least it will have a consistent approach across all platforms.

I also think it's high time for VMware to acquire a Remote Desktop Session Host (aka Terminal Services) company like Ericom in order to provide seamless Windows applications. Instead of dancing around the topic, one moment creating an integration point for Citrix XenApp and the next creating a direct PCoIP connection to RDSH, why not just own that technology? The time it will take to build the Blast product up to support Windows applications with all the required functionality is huge. Frankly, it's also unnecessary. There are 80 million users of RDSH worldwide; a highly successful acquisition in this space would resonate among the VMware customer base.

In a future blog, I'll look at Citrix XenMobile. In the meantime, I am interested in hearing your views on some of the issues that I raised here.

Posted by Elias Khnaser on 02/25/2013 at 12:49 PM (1 comment)

If you are considering workloads for the cloud or an entire cloud strategy or moving your entire datacenter into the cloud, I think you are already on the right path for success within your IT organization. The cloud, when properly leveraged, could be a huge cost cutter and a significant ally to deliver services in a timely manner to meet business expectations.

While the decision to offload certain workloads to the cloud is a good one, spotting and understanding the fine print of what each service provider offers can be the determining factor between a successful cloud project and a disappointing one. So what are the questions you should be asking your cloud service providers? Here are a few that are imperative:

1. Who can see my information, and how do you audit changes?

Data loss and leakage is a huge concern in internal IT, let alone the cloud. Typically, administrators have access to manipulate your data (copy, send, delete, etc.) and there might be a legitimate reason to empower these admins. So the question is, what are the processes and procedures that the cloud provider has implemented to monitor these elevated privileges and how are they being used?

2. What is your data protection strategy?

Knowing who can see your data is important, and it is even more important to know what the cloud provider is doing to protect the data. You are essentially trusting the cloud with your data and you should be well versed in how the cloud provider intends to protect it, back it up and restore when asked. Does the provider have full or incremental backups? If the latter, can they restore full images from these incrementals at any given time?
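To make the full-versus-incremental question concrete, here is a toy model of how a full image is rebuilt from a full backup plus a chain of incrementals. It is only an illustration of the idea, not any provider's actual mechanism; the data layout (dictionaries of path to content, with `None` marking a deletion) is an assumption made for the example.

```python
def restore_image(full_backup, incrementals, restore_point):
    """Rebuild the file set as of restore_point from a full backup plus incrementals.

    full_backup: dict mapping path -> content at the time of the full backup.
    incrementals: list of dicts, oldest first; a value of None marks a deletion.
    restore_point: how many incrementals to apply (0 means the full backup only).
    """
    if not 0 <= restore_point <= len(incrementals):
        raise ValueError("restore point is outside the backup chain")
    image = dict(full_backup)  # copy so the stored full backup is never modified
    for delta in incrementals[:restore_point]:
        for path, content in delta.items():
            if content is None:
                image.pop(path, None)
            else:
                image[path] = content
    return image
```

The point to raise with a provider is visible in the loop: every restore depends on the full backup and on each incremental before the chosen restore point, so one missing or corrupt link affects everything after it.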

3. How do you handle multi-tenancy?

The cloud is all about economies of scale. It's all about shared infrastructures and that should be an acceptable notion going into this endeavor. So, what you should be asking is, how does the cloud provider enforce logical separation, how do they ensure security isolation? How do they ensure that your data is not mixed with other tenants' data? In some cases where you require your data to be completely isolated, you should be asking, what systems am I sharing, how much load do you put on these systems?

4. If I use your service, am I locked in?

Most of us don't like the idea of being locked in with one cloud provider, as that gives them too much leverage over us. It's also a barrier to agility and flexibility. A very valid question you should be asking your cloud provider is, how easy is it to migrate to another provider should the need come up?

5. What Service Level Agreements do you provide?

If you put your workloads in the cloud and these workloads do not perform up to or better than your expectations and requirements, the entire project can be rendered pointless. As a result, it is crucial that you investigate the SLAs that the cloud provider offers, especially if your workload is a production one which can impact your company's primary income-generating process.
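When comparing SLAs, it helps to translate an availability percentage into the downtime it actually permits. The arithmetic is simple; the sketch below assumes a 30-day month by default, which is my simplification rather than any provider's billing definition.

```python
def allowed_downtime_minutes(availability_percent, period_days=30):
    """Return the minutes of downtime an availability target permits over the period."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - availability_percent) / 100


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99):
        print(f"{target}% -> {allowed_downtime_minutes(target):.1f} minutes per 30 days")
```

A 99.9 percent SLA permits roughly 43 minutes of downtime in a 30-day month, while 99.99 percent permits a little over 4 minutes, which is a very different promise for a production workload.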

6. What is your financial status?

You are obligated to ask about the financial health of the provider you are trusting your data and workloads to. You need to know how they are funded, if they are profitable and who is behind the company. If individuals, you need to know who they are and what their history is. If they're venture capitalists, you want to investigate the firms and find out what other companies they have launched successfully.

These are just a sample of the questions that you should be thinking about. How a provider addresses them will be a good indicator of its readiness. For example, if you ask for its financials and it takes two weeks to respond, you can easily conclude that no serious customer has used its services before. It's the small things that will make a huge difference when you are investigating these providers.

Posted by Elias Khnaser on 02/11/2013 at 12:49 PM (2 comments)

When I mention consumerization of IT, many people immediately think of mobile devices. Some might think the conversation is about desktop virtualization. Others might say it's both. They are all correct, but CoIT is about more than just mobile devices and desktops. It's also about cloud and a new, agile, faster services delivery model. CoIT is also about Big Data Analytics.

CoIT represents a seismic shift in technology approach. It changes the way we do, well, everything! Many years ago, technology companies marketed their products and solutions to the enterprise customer first and foremost, and only some of these technologies made their way to the average consumer. This trend gave birth to our industry, where everything is enterprise-focused. In the not too distant past, this trend shifted drastically in the opposite direction. Technology companies now market directly to the consumer. There they have found a bigger market that adopts their technologies much more quickly and in smaller transactions that collectively represent massive income.

This shift is what is putting huge pressure on enterprise IT to cope, to adapt, to change its ways. I will not bore you with how CoIT is affecting the BYOD or desktop virtualization market, as you already know this. Instead, I want to focus on how CoIT is also putting pressure on the fast adoption of private cloud models inside organizations.

One of the challenges that IT faces today is keeping departments inside the organization from going to Amazon-like providers, swiping their cards and getting instant access to a virtual machine that they can then use for whatever business initiative they have. When told not to do that, they ask for a similar service from IT, one that is as quick, effective and seamless, and one that IT cannot deliver today. Such a service still needs to be requested; the request is then channeled to the right person for approval and takes anywhere from a day to a week to turn around. As far as the consumer is concerned, IT is slow, and they can see an alternative that gets the job done quicker and at an identified pay-per-use cost. They can easily make the case to the business that they would have to pay a lot of money for IT to provision a server.

The private cloud's automation, orchestration and self-service capabilities, coupled with an identifiable, measurable showback or chargeback, are the only way IT can modernize its processes and adapt to a market that is not going back to the old processes -- ever!
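Showback is, at its core, just metered usage multiplied by a published rate and rolled up per department. Here is a minimal sketch of that calculation; the departments, VM sizes and hourly rates are invented for illustration and are not drawn from any real price list.

```python
from collections import defaultdict


def showback(usage_records, hourly_rates):
    """Total the cost per department.

    usage_records: iterable of (department, vm_size, hours) tuples.
    hourly_rates: dict mapping vm_size -> cost per hour.
    """
    totals = defaultdict(float)
    for department, vm_size, hours in usage_records:
        totals[department] += hours * hourly_rates[vm_size]
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical rates and usage for one reporting period.
    rates = {"small": 0.5, "large": 2.0}
    usage = [("marketing", "small", 10), ("marketing", "large", 2), ("finance", "small", 5)]
    print(showback(usage, rates))
```

With showback you only report these totals to each department; chargeback uses the same numbers but actually bills them.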

It does not end here. CoIT also is leading the big data analytics revolution, one that I think will change our world for the better. Because everything is consumer-centric and innovation is centered on consumer needs, we are seeing the rise of "sensors" embedded in all sorts of things, sensors that are capable of sending and receiving data. Imagine a sensor in your shipped package that informs you via e-mail or text message when it has reached a port and when it will be picked up. Imagine a sensor in your luggage which can inform you if it is lost or on the wrong plane, or maybe even rerouted onto the right plane and when it will arrive. How about a sensor in your body that maintains data about your health, such as your average heart rate at different times of the day? Your doctor could either check that data automatically or scan the sensor when you visit to get relevant information about your health.

Can you imagine all this data? It is estimated that in the next three years, more than four million big data analytics jobs will be created and only a third of these jobs will be filled. Looking for the next big thing? How about a career in big data analytics as a data scientist? Data engineer, maybe? Heck, make your own title because you are at the stage where you can. The need for skilled workers who understand data management and have a business-centric approach will skyrocket. That does not affect you? Think again.

I have been touting for a while now that converged infrastructures will slowly but surely become the norm. Imagine a world where you need to process in real time information that is coming at you from multiple different sources. This unstructured data is very different than the data warehousing structured data approach we are used to. Do you think these data scientists will have the time or patience for a "piece it together" IT approach? No. They will ask IT for hardware and if that hardware is not there fast, they will find alternatives. And what do you think that alternative will be? Assuming the data will be processed locally and the cloud will not be leveraged, that hardware will be converged for quick deployment and provisioning. Remember, it's all about business projects. These are no longer IT projects!

I am sharing this with you because I want to clear the air with all the confusion surrounding the consumerization of IT. I hope I made the case that it's more than just a significant shift in IT, that these are not isolated to the proliferation of devices or BYOD initiatives. CoIT is much bigger than that. We all should be aware of it and plan accordingly.

I am interested in your comments about how you are planning to deal with many of these issues in your organizations. Are you starting to see these trends? If so, how do you plan on transitioning to or adopting technologies that allow IT to transform itself? IT will become even more relevant to the business, but IT roles will significantly change.

Posted by Elias Khnaser on 02/04/2013 at 12:49 PM (2 comments)

VMware recently released a new virtual appliance for vCenter Server. VMware vCenter Support Assistant 5.1, which centralizes and streamlines the creation of technical support tickets, is a free download that once registered with vCenter Server gives you access from within the vSphere client or vSphere Web Client to open and manage technical support tickets, generate and upload logs, and even scrub any potentially sensitive information out of these logs automatically. It's a pretty nifty tool for administrators, if you ask me. I despise opening tickets and uploading logs, and so this appliance makes it easy, convenient and accessible.

The VMware vCenter Support Assistant 5.1 supports the following vCenter Server versions:

- vCenter Server 4.1

- vCenter Server 5.0 Windows and Virtual Appliance

- vCenter Server 5.1 Windows and Virtual Appliance

From a hypervisor perspective, it runs on vSphere ESXi 4.1 and newer. While vCenter Server itself does not require Internet access, the Support Assistant appliance and the vSphere Client you are using do require an Internet connection, for obvious reasons, to function properly.

Now, I have not been able to test this yet, but I am very curious if any of you have. Can you, for instance, access this appliance and open tickets, upload logs, etc., from an iPad? In a world that VMware itself describes as moving more and more towards mobile applications, this would be an ideal application to have on a mobile device. It would be very convenient for me to use my iPad to open and manage tickets or upload logs, especially after hours or on weekends when I am not in the office, and maybe not even at home. Come to think of it, the My VMware app on the iPhone could also be a good place for this, but it would need infrastructure access, I guess.

If anyone has tried this with a mobile device, please share your experience in the comments section: What is the user experience like? Has the application been configured to be mobile-friendly? Do I need to scroll and zoom? If you've used it, the floor is yours.

Posted by Elias Khnaser on 01/30/2013 at 2:58 PM (0 comments)

The cloud is going to arrive in bits and pieces, not in a large uprooting of environments. When I try to explain that to some customers, they automatically change the conversation because they are not interested in cloud. Some of them view the cloud as a threat or they simply don't understand it.

The irony comes when some of them change the conversation and they want to discuss orchestration. (VMware vCenter Orchestrator and Microsoft System Center Orchestrator are examples, and are both great orchestration products.) I can't help but smile at that point because they are going down the path of a private cloud without realizing it.

Orchestration is at the heart of the private cloud. It's the tool that you will use to automate repetitive tasks that you do daily or weekly. When designing your private cloud, orchestrators are what you will need to tie in with your ticketing system so that you can automate user requests.

Your private cloud might be designed so that when a user needs a new server, he fills in a service request from the service request portal. That service request will then require one or more approvals and once approved it is then passed on to orchestrator for execution at the different levels of systems within your private cloud.

If you were building your private cloud around Microsoft technologies, Orchestrator would receive the approved service request from System Center Service Manager and execute the automation in System Center Virtual Machine Manager, thereby completely automating what is otherwise a manual process of right-clicking and deploying a VM from scratch or from a template.
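To make the request, approve, execute flow described above concrete, here is a minimal Python sketch. It is not how Service Manager or Orchestrator are implemented or scripted; the `ServiceRequest` class, the `run_orchestration` function, the approver names and the `fake_deploy` stand-in are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    SUBMITTED = "submitted"
    APPROVED = "approved"
    COMPLETED = "completed"


@dataclass
class ServiceRequest:
    requester: str
    template: str                # hypothetical VM template name
    required_approvers: set
    approvals: set = field(default_factory=set)
    status: Status = Status.SUBMITTED
    audit_log: list = field(default_factory=list)

    def approve(self, approver):
        """Record one approval; the request is approved once every approver signs off."""
        if self.status is not Status.SUBMITTED:
            raise ValueError("request is no longer awaiting approval")
        if approver not in self.required_approvers:
            raise PermissionError(f"{approver} is not an approver for this request")
        self.approvals.add(approver)
        self.audit_log.append(f"approved by {approver}")
        if self.approvals == self.required_approvers:
            self.status = Status.APPROVED


def run_orchestration(request, deploy_vm):
    """Hand an approved request to the automation step (a stand-in for a runbook)."""
    if request.status is not Status.APPROVED:
        raise ValueError("only approved requests can be executed")
    vm_name = deploy_vm(request.template, request.requester)
    request.audit_log.append(f"deployed {vm_name}")
    request.status = Status.COMPLETED
    return vm_name


def fake_deploy(template, owner):
    # Placeholder for the real provisioning call; it only builds a name.
    return f"{owner}-{template}-01"


request = ServiceRequest("jdoe", "win2012-web", {"manager", "it-ops"})
request.approve("manager")
request.approve("it-ops")
vm_name = run_orchestration(request, fake_deploy)
print(vm_name, request.audit_log)
```

The audit log is the point of the exercise: every approval and every deployment leaves a trace, which is what separates orchestration in a private cloud from a script run by hand.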

It is imperative to realize that orchestration is a fundamental building block of your private cloud and while today you may only be interested in automating repetitive, boring tasks, you should recognize that you are almost halfway there from a cloud perspective. What is left is integration with ticketing and change management systems, monitoring and some other components.

What admins need to understand is that the private cloud is not just about them anymore, and that they are building a framework that has built-in accountability, change management, ITIL best practices, chargebacks or showbacks, etc.

I encourage you, if you have not started working with an orchestration tool, to start doing so. Once you start down the orchestration path, you are well on your way to a much more robust environment that is more agile and responds to customer needs much faster. If you have automated VM provisioning and have built the right templates, you are then simply approving requests. Imagine how much time you now have on your hands to do other things that are more exciting and important to the business, like building showback models.

Stopping at a highly virtualized environment is not good enough anymore. Getting preoccupied with whether vSphere or Hyper-V is better is also a waste of time, and a very old-school mindset that will not save you money and will keep you isolated.

VMware and Microsoft both have provisions to manage one another's hypervisor. Your job now is to build the framework to automate repetitive tasks in a trackable way so that you have time to execute other projects.

I am very interested to know how many of you are working with an orchestration tool or are looking to start working with one. Share your comments here.

Posted by Elias Khnaser on 01/23/2013 at 2:59 PM | 7 comments

Lately, I have been asked about my thoughts around Mobile Device Management and whether I thought it was a valid and valuable solution in the enterprise or whether it was passé -- essentially an outdated solution. My answer? Well, it's not that cut and dried; it's not that simple.

I have heard the arguments about how MDM is dead, that Mobile Application Management is the wave of the future and I agree with that. What I don't agree with is when bloggers, analysts, etc. deal in absolutes. I'm not just a blogger -- I also consult and deal with enterprises daily and I can assure you that, as of right now, MDM is not dead. But, it's also not a smashing success at any organization.

Let's face it, MDM is an outdated approach that is still trying to control the device, lock it down, dictate usage, and so on. In today's world, as soon as you tell users that they must enroll their device to get access to corporate resources, at the expense of having the device managed by IT and subject to remote wipe, you will find them opting out immediately.

We live in a world where the consumer is king. Long gone are the days of IT command and control. Today, the focus should be on governing the device, and not even all of the device but just the corporate assets that reside on that device.

So MDM isn't dead exactly. We're in a transition period. IT has not fully accepted the fact that they have lost the battle. Many of them will nod their heads and say we get it, but in practice they are still the same IT shop of 10 years ago.

There still is a legitimate need for MDM. I was at a client that wants to continue to issue phones and now wants to issue tablets to users. The client wants to own the devices so it can continue to manage them. This particular client has convinced its management to continue to make that investment. MDM is very valid in this situation.

Yet, in most cases with most of my customers, MDM was a purchase that either was never implemented or was implemented with such basic features that it might as well not have been.

In 2013, many organizations will finally get around to implementing MDM, and what we'll see is a surge in MDM deployments with very loose security and enrollment requirements. This will satisfy many of the security officers' need for DLP security enforcement and will be a good bridge into MAM as that technology continues to mature.

I predict that in the 2014-2015 time frame MAM will quickly replace MDM, as IT finally comes to terms with the fact that its efforts to regain control have proven fruitless and as users come to terms with the fact that, in order to consume corporate resources and get convenient access anytime, anywhere, on any device, some level of governance is necessary.

And that's where governance of enterprise mobile applications and data will come into play. Instead of launching an SSL VPN for the entire phone, let's launch an SSL VPN for that particular application. Rather than remotely wipe the device, we selectively wipe corporate resources. And so on.
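The difference between wiping the device and wiping only corporate resources can be shown with a small Python sketch. This is a toy model, not any vendor's MDM or MAM API; the `Item` class, the `full_wipe` and `selective_wipe` functions and the sample items are hypothetical names invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    name: str
    owner: str  # "corporate" or "personal"


def full_wipe(items):
    """MDM-style remote wipe: everything on the device is removed."""
    return []


def selective_wipe(items):
    """MAM-style wipe: remove only corporate-owned items and keep personal ones."""
    return [item for item in items if item.owner != "corporate"]


device = [
    Item("corp-mail", "corporate"),
    Item("sales-app", "corporate"),
    Item("family-photos", "personal"),
    Item("music", "personal"),
]

remaining = selective_wipe(device)
print([item.name for item in remaining])
```

In this model the user keeps photos and music after a selective wipe, which is the kind of outcome that makes enrollment easier to accept.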

If you are watching the MxM space closely, you will find that consolidation is inevitable but also that many of the MDM vendors have seen the writing on the wall and are starting to venture into the realm of MAM as a natural evolution of their products.

Will MDM ever die? Well, no technology ever completely dies; maybe it'll just become less mainstream. There is always a use case for technologies like MDM, especially in high-security environments like government, pharmaceuticals and others. What we'll see is a natural evolution to Mobile Application Management, which is a lot more consumer friendly, less intrusive, and just as secure and manageable.

Posted by Elias Khnaser on 01/16/2013 at 12:49 PM | 6 comments

I'm wrapping up my TrainSignal course (shameless plug) on Microsoft Exam 70-247: Configuring and Deploying a Microsoft Private Cloud with System Center 2012 and I've come across a few tips that I thought would be useful. Let's start off with a useful how-to around ISOs.

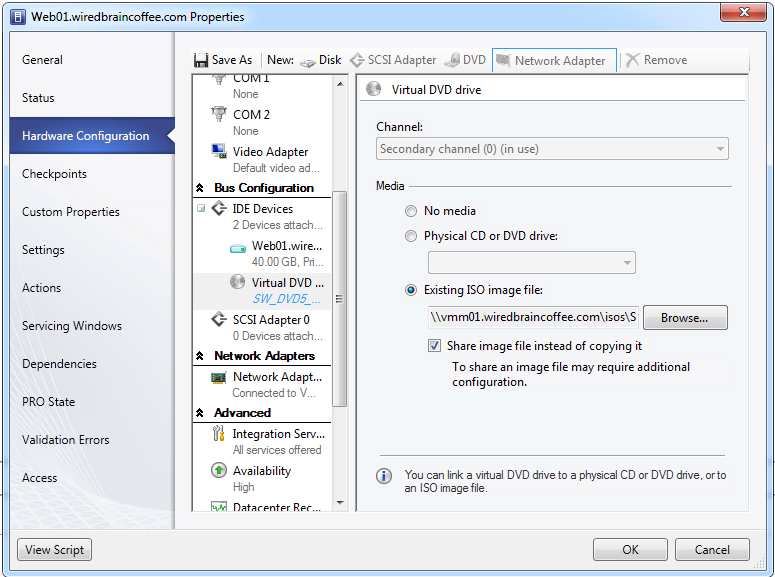

When you create a VM, the default behavior of an ISO attached as a virtual CD/DVD is to copy that ISO into the VM's directory. This is Microsoft's way of avoiding Live Migration failures when you try to move a VM with an attached virtual CD/DVD from host to host. As you should be aware, in both Hyper-V and ESX/ESXi an attached ISO would prevent a vMotion or a Live Migration.

Personally, I prefer that the VM fail the migration -- it would then force me to change my configuration rather than rely on this overthought and very space-inefficient method. Luckily, Microsoft has provided a much better workaround: Why copy the ISO image? Instead, share the ISO image from the VMM library, end of story. Of course, I wish it was that simple. The checkbox in Fig. 1 enables the sharing of the ISO image (as opposed to copying it), but you still have to perform some added steps in the background to make it work:

- Grant the VMM Service Account Read access on the share that is hosting the ISO image in the library.

- Grant the machine account of each of your Hyper-V hosts Read access to the shared location of the ISO.

Figure 1. In Hyper-V, enable sharing of the ISO and then perform the extra steps.

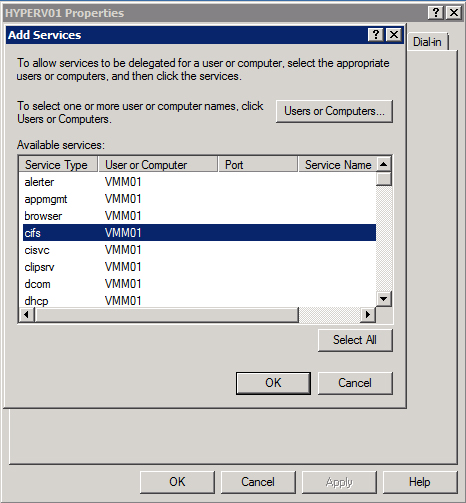

Once you complete the two steps, you have to configure constrained delegation. You can do this from any computer that has the Active Directory Users and Computers console or from a Domain Controller.

Constrained delegation basically instructs every Hyper-V host's machine account to present delegated credentials for the CIFS/SMB protocol to the VMM server where the library hosting the ISO file resides. You will have to perform these steps on each Hyper-V host's machine account in AD separately:

- Open Active Directory Users and Computers.

- Locate the Hyper-V host machine account, right-click and go to Properties.

- Select the Delegation tab and click Trust this computer for delegation to specified services only.

- Click Use any authentication protocol.

- Click Add.

- Add the VMM Library servers that contain the ISO you want to share and click OK.

- In the Available Services list, click cifs (see Fig. 2) and click Add.

Figure 2. In the Available Services list, click cifs and click Add.

This should now allow you to share the ISO image each time you attach it. This will also allow you to have successful Live Migrations while a CD/DVD virtual drive is attached.
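Because the delegation steps have to be repeated for every Hyper-V host, it can help to write down what each machine account should end up with before you start clicking. The Python sketch below only builds that plan; it does not talk to Active Directory. The host names, library server name and DNS domain are hypothetical, and the assumption that both the short-name and fully qualified `cifs` service names get listed should be checked against what the Delegation tab actually shows in your environment. Constrained delegation targets are stored in the machine account's `msDS-AllowedToDelegateTo` attribute, and "Use any authentication protocol" corresponds to a bit in `userAccountControl`.

```python
# userAccountControl bit that corresponds to "Use any authentication protocol"
TRUSTED_TO_AUTH_FOR_DELEGATION = 0x1000000


def enable_protocol_transition(user_account_control):
    """Return the userAccountControl value with the protocol transition bit set."""
    return user_account_control | TRUSTED_TO_AUTH_FOR_DELEGATION


def cifs_spns(library_server, dns_domain):
    """Return the short-name and FQDN cifs service names for one library server."""
    return [f"cifs/{library_server}", f"cifs/{library_server}.{dns_domain}"]


def delegation_plan(hyperv_hosts, library_servers, dns_domain):
    """Map each Hyper-V machine account to the services it should be allowed to delegate to."""
    spns = []
    for server in library_servers:
        spns.extend(cifs_spns(server, dns_domain))
    # Machine accounts carry a trailing "$" in their sAMAccountName.
    return {f"{host}$": list(spns) for host in hyperv_hosts}


# Hypothetical names, for illustration only.
plan = delegation_plan(["HV01", "HV02"], ["VMMLIB01"], "contoso.local")
for account, spns in plan.items():
    print(account, "->", spns)
```

Comparing this plan with the Delegation tab of each host is a quick way to spot the one host you forgot, which usually surfaces later as an ISO that will not attach.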

Posted by Elias Khnaser on 01/09/2013 at 2:57 PM | 2 comments

It is now tradition to kick off the new blogging season with predictions for the upcoming year. Last year I made some pretty good predictions, so let's see if my crystal ball is even more finely tuned for 2013.

The Private Cloud

This year is, without a doubt, the year of the private cloud. We will see VMware, Microsoft and others make a huge push for automation and orchestration at every level, and the technologies resulting from acquisitions made in 2012 by Cisco and others will find their way into products in 2013. I predict that automation and orchestration at the converged infrastructure level will play a major role in data centers. I also predict that Microsoft, VMware and other companies' private cloud solutions will go mainstream, with enterprises rushing to upgrade from highly virtualized environments to private cloud environments.

Converged Infrastructures

Systems like Vblock, FlexPod, PureSystems and others will become much more attractive to organizations once they're finally convinced that the speed, agility and support of these infrastructures far outweigh the traditional piece-it-together-myself approach. As pressure is placed on IT to break the 80/20 rule and do more with less, enterprises will finally start to let go of the notion that they must build everything themselves and start to adopt these converged infrastructures, which will integrate with automation and orchestration a lot more seamlessly than the traditional approach.

Software-Defined Networking

While there is a lot of noise and buzz about SDN, this technology will not realistically start to be considered before the last quarter of this year. Adoption will also be slow and will go through the same cycle of doubt and resistance that server virtualization went through in the early stages, followed by rapid adoption. I predict that VMware will play a major role in this new revolution, but I also predict that it will not enjoy the same level of monopolized success as was the case with server virtualization. Players like Cisco, Microsoft, Juniper and others will most definitely weigh in and pick up market share.

Public Cloud

We will see more and more organizations export certain services and technologies onto the public cloud, and we will also find a huge role for the hybrid cloud not only in IaaS deployments but also in mixed services deployments. Take, for instance, unified communications -- we might find elements of this deployment in the public cloud while IT maintains certain aspects on-premise.

End-User Computing

We will continue to see organizations adopting a complete, end-to-end end-user computing strategy with desktop virtualization, mobile device management and mobile application management leading the charge. I think that all the voices that were vehemently opposed to desktop virtualization have started to come around. Citrix is very well positioned to capitalize in this space, while VMware has significant catching up to do in several areas, including, specifically, MDM. For that matter, I predict that VMware will acquire something in this space. If it's smart, it will finally acquire Teradici, which would strengthen its desktop virtualization offering, considering PCoIP can now connect to Microsoft RDSH. Cisco acquiring a company like Ericom would be even better. The Horizon product would also need to be enhanced in order to level the playing field.

Big Data Analytics

I'm a huge believer in big data analytics -- it will change the world and the way we do things. I think 2013 will amplify the tools and capabilities to use big data analytics in an easier and more streamlined approach, where small and large businesses will be able to benefit from it.

We can't end the predictions blog without acquisition speculation now, can we? In a nutshell, 2013 will be the year of Cisco and HP making some key acquisitions. Cisco would be best served acquiring Citrix, but getting NetApp would also be interesting. I predict HP will break up into at least two and maybe even three entities. EMC will make a networking acquisition in 2013, with Arista Networks a very likely candidate even though Brocade would be the better choice. (There are many factors against the Brocade acquisition.) VMware will make a few acquisitions and probably an MDM provider -- possible candidates are OpenPeak or AirWatch.

If Citrix does not get acquired, I predict a quiet acquisition year for Citrix. It made fantastic acquisitions in 2012, but if it does end up buying anything this year it won't be for end-user computing. More likely, it will be in cloud, probably something in SDN or cloud security.

Whatever 2013 has in store for technology -- virtualization and cloud computing, in particular -- it will be a great year for innovation. Agree or disagree with my predictions? Let's hear it in the comments section.

Posted by Elias Khnaser on 01/07/2013 at 12:49 PM | 0 comments

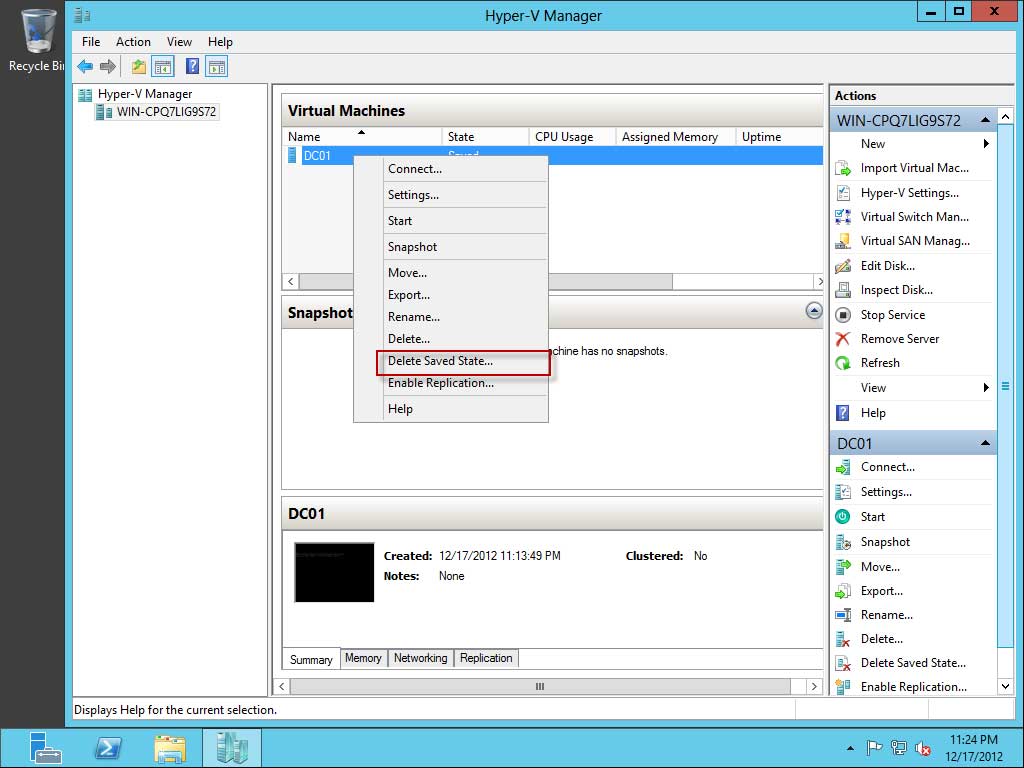

I was engaged with a customer recently that was recovering from a SAN meltdown and unreliable (or, more like non-existent) backups. To make a long story short, while we were trying to power on Hyper-V virtual machines, we found quite a few of them were in a saved state with corrupt or missing hard drives.

We found ourselves in a bit of a pickle -- we could not power on the VMs as it would throw an error because one or more hard drives were missing, and we could not delete the missing disks from the VM settings as that was not allowed either.

If you find yourself in a similar situation, here is a tip that will help you at least power on the VM. I must admit, though, that it did not help us much, as most of the missing disks were the data drives and what was salvaged was mostly operating system disks. My customer got a baptism by fire in the importance of reliable backups.

Even so, here is what we did:

- Launch Hyper-V Manager.

- Right-click the VM and click on Delete Saved State.

- Confirm your command.

- Now open the VM settings and delete the missing hard disks.

- Power on your VM.

Figure 1. Delete Saved State command in Hyper-V.

The Delete Saved State command is the equivalent of pulling the power cord on a physical server: It will literally kill power to the VM and, as a result, release the locks that the saved state has on the VM files that are preventing you from making changes to the settings.
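Before you delete the saved state, it helps to know exactly which of the VM's disks are actually gone. The Python sketch below is a minimal check under one assumption: that you already have the list of disk paths from the VM's settings (collected by hand, for example). The `find_missing_disks` function and the file names are hypothetical, and the demo uses empty temporary files in place of real VHDs.

```python
import os
import tempfile


def find_missing_disks(disk_paths):
    """Return the attached disk paths that no longer exist on storage."""
    return [path for path in disk_paths if not os.path.exists(path)]


# Demo with temporary files standing in for VHDs.
with tempfile.TemporaryDirectory() as folder:
    os_disk = os.path.join(folder, "os.vhd")
    data_disk = os.path.join(folder, "data.vhd")
    with open(os_disk, "wb"):
        pass  # create an empty placeholder for the surviving OS disk
    missing = find_missing_disks([os_disk, data_disk])
    missing_names = [os.path.basename(path) for path in missing]

print(missing_names)
```

The disks this reports are the ones to remove from the VM settings once the saved state has been deleted.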

Posted by Elias Khnaser on 12/19/2012 at 3:02 PM | 2 comments