In-Depth

The Virtualization of I/O

As companies virtualize physical servers and provision processor and memory resources to specific virtual machines, delivering I/O to these VMs presents the next set of challenges. We examine these challenges and look at two I/O solutions: InfiniBand and 10 GbE.

Network input/output (I/O) is emerging as the next obstacle to delivering on the promise of server virtualization. As companies virtualize physical servers and provision processor and memory resources to specific virtual machines (VMs), delivering I/O to these VMs presents the next set of challenges. Virtualized servers demand more network bandwidth and need connections to more networks and storage to accommodate the multiple applications they host. Furthermore, when applications operate in an environment where all resources are shared, a new question arises: How do you ensure the performance and security of a specific application? For these reasons, IT managers are looking for new answers to the I/O question.

Why Virtualization Is Different

Traditional servers do not encounter the same I/O issues as VMs. The objective of server virtualization is to increase server hardware utilization by creating a flexible pool of compute resources that can be deployed as needed. Ideally, any VM should be able to run any application. This has two implications for server I/O:

- Increased demand for connectivity: Each VM needs physical connectivity to all networks. In this model, each VM may require connectivity to FC SANs, secured corporate Ethernet networks, and unsecured Ethernet networks exposed to the Internet. This creates a requirement to isolate and maintain the security of each of the connections to these separate networks.

- Bandwidth requirements: In the traditional data center, a server may use only 10 percent of a processor's capacity. By loading more applications on the device, utilization may grow beyond 50 percent. A similar increase will occur in I/O utilization, revealing new bottlenecks in the I/O path.
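To make the bandwidth point concrete, the following minimal sketch estimates how link utilization grows as workloads are consolidated onto one host. The per-server traffic figure (100 Mb/s), the 1 Gb link, and the function names are illustrative assumptions, not measurements from any deployment.

```python
def consolidated_io_demand(per_server_mbps, servers_consolidated):
    """Aggregate I/O demand when several workloads share one physical host."""
    return per_server_mbps * servers_consolidated


def link_utilization(demand_mbps, link_mbps):
    """Fraction of the link consumed by the given demand."""
    return demand_mbps / link_mbps


# Assumed figures: each workload averages 100 Mb/s on a 1 Gb (1000 Mb/s) link.
PER_SERVER_MBPS = 100
LINK_MBPS = 1000

for count in (1, 5, 10):
    demand = consolidated_io_demand(PER_SERVER_MBPS, count)
    util = link_utilization(demand, LINK_MBPS)
    print(f"{count:2d} workloads: {demand} Mb/s, {util:.0%} of the link")
```

Under these assumptions, consolidating five workloads moves the link from 10 percent to 50 percent utilization, mirroring the processor figures above, and ten workloads would saturate it.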

Options with Traditional I/O

Traditional I/O leaves administrators two options to accommodate virtualization demands. The first is to employ the pre-existing I/O and share it among virtual machines. For several reasons, this is not likely to work. Applications may cause congestion during periods of peak demand, such as when VM backups occur. Performance issues are hard to remedy because diagnostic tools for VMware are still relatively immature and not widely available. Even if an administrator identifies a bottleneck's source, corrective action may require purchasing more network cards or rebalancing application workloads on the VMs.

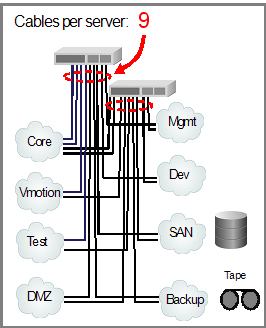

Another issue with pre-existing I/O is the sheer number of connections needed by virtualization. Beyond the numerous connections to data networks, virtualized servers also require dedicated interconnects for management and virtual machine migration. Servers are also likely to require connectivity to external storage. If your shop uses an FC SAN, this means FC cards in every server.

For these reasons, most IT managers end up needing more connectivity, which raises the second option: add multiple network and storage I/O cards to each server. While this is a viable option, it is not always an attractive one. Virtualization users find they need anywhere from six to 16 I/O connections, which can add significant cost and cabling. More important, it drives the use of 4U-high servers to accommodate the needed I/O cards. The added cards and larger servers increase cost, space, and power requirements, so I/O costs may exceed the cost of the server itself.

Server blades present a different challenge: accommodating high levels of connectivity may prove to be either costly (sometimes requiring a double-wide blade) or impossible (depending on the requirements).

What's in Store for Virtual I/O

Running multiple VMs on a single physical server requires a technology that addresses the following concerns:

- Avoids the need to run a slew of network cables into each physical server.

- Maintains the isolation of multiple, physically distinct networks.

- Provides sufficient bandwidth to alleviate performance bottlenecks.

Emerging I/O virtualization technologies now address these concerns. Like VMs that run on a single physical server, virtual I/O operates on a single physical I/O card that can create multiple virtual NICs (vNICs) and virtual FC HBAs (vHBAs) that behave exactly as physical Ethernet and FC cards do in a physical server. These vNICs and vHBAs share a common physical I/O card but create network and storage connections that remain logically distinct on a single cable.

Virtual I/O Requirements

For virtual I/O to work in conjunction with VMs, there are specific virtual I/O requirements that the physical I/O card and its device driver software must deliver, including:

- Virtual resources (vNICs and vHBAs), deployable without a server reboot

- Single high-bandwidth transport for storage and network I/O

- Quality of service management

- Support for multiple operating systems

- Cost effectiveness

While these attributes serve the needs of both traditional server deployments and virtualized servers, the demands of virtualization make these attributes especially important in that world. Virtual NICs and HBAs, for example, eliminate the need to deploy numerous cards and cables to each virtualized server. Unlike physical NICs and FC HBAs, vNICs and vHBAs are dynamically created and presented to VMs without needing to reboot the underlying physical server.

Similarly, a single transport can reduce the number of physical I/O cards and cables the physical server needs to as few as two. A primary objective of virtual I/O is to provide a single very-high-speed link to a server (or two links for redundancy) and to dynamically allocate that bandwidth as required by the applications. Combining storage and network traffic increases the utilization of that link, which ultimately reduces costs and simplifies the infrastructure. Since each physical I/O link can handle all the traffic the server can theoretically deliver, multiple cables are no longer needed.
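The cabling effect is easy to quantify. The sketch below compares per-rack cable counts using the six-to-16 connection range cited earlier against a redundant pair of virtual I/O links. The rack size of 20 servers and the function name are assumptions chosen only for illustration.

```python
def rack_cable_count(servers_per_rack, links_per_server):
    """Total I/O cables entering the servers in one rack."""
    return servers_per_rack * links_per_server


SERVERS_PER_RACK = 20  # assumed rack density, not a figure from the article

scenarios = [
    ("traditional I/O, low end", 6),
    ("traditional I/O, high end", 16),
    ("virtual I/O, redundant pair", 2),
]

for label, links in scenarios:
    total = rack_cable_count(SERVERS_PER_RACK, links)
    print(f"{label:28s}: {total} cables per rack")
```

With these assumed numbers, a rack drops from somewhere between 120 and 320 cables to 40.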

Quality of service (QoS) goes hand in hand with a single transport because the use of a shared resource implies that rules will be available to enforce how that resource is allocated. With virtualization, bandwidth controls become particularly useful since they can be used in conjunction with virtual I/O to manage the performance of specific virtual machines. In production deployments, critical applications can receive the same guaranteed I/O they would achieve with dedicated connections without the need for dedicated cabling.
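One way to picture such rules is a two-step allocation: first honor each VM's guaranteed minimum, then share whatever capacity is left among VMs that still want more. The following is a minimal sketch of that idea, not any vendor's actual QoS algorithm; the function name, VM names, and bandwidth figures are all hypothetical.

```python
def allocate_bandwidth(link_gbps, guarantees, demands):
    """Split a shared link among VMs.

    guarantees: dict of VM name -> guaranteed minimum (Gb/s)
    demands:    dict of VM name -> bandwidth currently requested (Gb/s)
    Returns a dict of VM name -> allocated bandwidth (Gb/s).
    """
    if sum(guarantees.values()) > link_gbps:
        raise ValueError("guarantees exceed link capacity")

    # Step 1: each VM receives its demand, capped at its guarantee.
    alloc = {vm: min(demand, guarantees.get(vm, 0.0))
             for vm, demand in demands.items()}

    # Step 2: spare capacity goes to VMs with unmet demand,
    # in proportion to how much each is still missing.
    spare = link_gbps - sum(alloc.values())
    unmet = {vm: demands[vm] - alloc[vm]
             for vm in demands if demands[vm] > alloc[vm]}
    total_unmet = sum(unmet.values())
    if spare > 0 and total_unmet > 0:
        share = min(1.0, spare / total_unmet)
        for vm, need in unmet.items():
            alloc[vm] += need * share
    return alloc


# Hypothetical example: a 10 Gb/s link shared by three VMs.
result = allocate_bandwidth(
    link_gbps=10.0,
    guarantees={"db": 4.0, "web": 2.0, "backup": 0.0},
    demands={"db": 4.0, "web": 1.0, "backup": 10.0},
)
print(result)
```

In this example the database VM keeps its full 4 Gb/s even while the backup job tries to consume the entire link; the backup receives only what the guaranteed VMs are not using.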

Finally, compatibility and cost requirements are obvious. For I/O to be consolidated, it must function across servers with different operating systems. It must be cost effective because companies will generally only pursue an alternative course of action if it is financially feasible and as cost effective as their current approach.

Virtual I/O Choices

With these requirements in mind, companies now have a choice of two different topologies to meet their virtual I/O requirements: 10 Gb Ethernet and InfiniBand.

A Partial Solution Today: 10 GbE

Compared with 1 Gb Ethernet, next-generation 10 Gb Ethernet solutions offer more performance and new management capabilities. Vendors now offer "intelligent NICs" that allow a single card to spawn multiple virtual NICs, thus advancing these solutions for virtualized I/O. These solutions can consolidate storage and network traffic into a single link, assuming the storage is iSCSI (as there currently is no bridge to Fibre Channel attached storage).

Many consider 10 Gb Ethernet to be the network interface that is the logical choice for consolidating current 1 Gb Ethernet data networks and 4 Gb Fibre Channel storage networks into a common network. Ethernet is pervasive, cheap, and well understood by businesses. When coupled with the additional bandwidth that 10 Gb Ethernet provides, the interface seems a logical choice.

Next-generation Ethernet cards are sold by vendors such as Intel, Neterion, and NetXen and are available in two configurations: standard and intelligent. An intelligent NIC can create multiple virtual NICs (vNICs) and assign each VM its own unique vNIC with its own virtual MAC and TCP/IP address. Using these addresses, each VM can have its own unique identity on the network, which lets each VM take advantage of features such as the quality of service that some TCP/IP networks provide. However, if companies use a standard 10 Gb NIC, all of the VMs on the physical server will need to share the MAC and TCP/IP address of that NIC. This additional intelligence comes at a price. An intelligent 10 Gb Ethernet NIC will cost at least $1,000 versus $400 for a standard 10 Gb NIC.

While 10 Gb Ethernet does provide a strong follow-on to 1 Gb Ethernet, as an I/O virtualization approach there are issues that may limit its applicability in enterprise data centers. For one thing, sharing a common connection for all application data is not always possible in enterprise data centers. In these settings, IT managers often require physically distinct networks to maintain the security and integrity of application data as well as to guarantee they meet agreed-upon application service level agreements (SLAs).

Storage presents another challenge. Fibre Channel remains the most common storage transport in enterprise data centers. Fibre Channel over Ethernet (FCoE) standards are under development and expected in the near future, but even after they are adopted it will take some time before FCoE-based storage systems are available and deployed in customer environments, assuming this happens at all. Although companies can connect their storage systems over Ethernet networks using iSCSI now, using TCP/IP for storage networking becomes problematic in high-performance environments.

When too many NICs try to access the same storage resources on the same network port using Ethernet links, packets are dropped and retransmissions occur, slowing high-performance applications. Furthermore, on switched Ethernet networks, the queues on Ethernet ports can fill up, at which point the switch starts to drop packets, forcing the server to retransmit them. In storage networks with a physical server hosting multiple VMs, the odds of this occurring increase because read- and write-intensive applications transmit and receive more data in a storage network than they do in a data network. The higher throughput found in storage networks further contributes to the problems of using TCP/IP.
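The queue-overflow behavior described here can be illustrated with a small tail-drop model: a port has a fixed-size queue, drains a fixed number of packets per time step, and discards anything that arrives when the queue is full. This is a deliberately simplified sketch; the function name and all figures are assumptions, and real switches use more elaborate buffering.

```python
def simulate_tail_drop(arrivals_per_tick, drain_per_tick, queue_limit):
    """Model a switch port queue that drops packets once it is full.

    arrivals_per_tick: list of packet counts arriving in each time step
    drain_per_tick:    packets the port can forward per time step
    queue_limit:       maximum packets the queue can hold
    Returns (total_dropped, packets_left_in_queue).
    """
    queued = 0
    dropped = 0
    for arriving in arrivals_per_tick:
        # Accept only as many packets as there is room for.
        space = queue_limit - queued
        accepted = min(arriving, space)
        dropped += arriving - accepted
        queued += accepted
        # Forward up to drain_per_tick packets from the queue.
        queued -= min(queued, drain_per_tick)
    return dropped, queued


# Assumed burst: three ticks of 10 packets, then silence.
dropped, remaining = simulate_tail_drop(
    arrivals_per_tick=[10, 10, 10, 0, 0],
    drain_per_tick=4,
    queue_limit=12,
)
print(f"dropped={dropped}, still queued={remaining}")
```

In this run, 10 of the 30 packets in the burst are discarded and would have to be retransmitted, which is the slowdown the storage traffic experiences.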

The InfiniBand Option

InfiniBand is a competing topology to Ethernet that has been largely utilized and deployed in high-performance computing (HPC) environments. It is now emerging as a viable option for enterprise business computing. Unlike Ethernet, InfiniBand was originally envisioned and designed as a comprehensive system area network that provides high-speed I/O, making it well-suited for the high-performance communication needed by Linux server clusters used in HPC environments. Until now, most businesses did not demand the type of bandwidth and high-speed communication that InfiniBand provides, and as a result InfiniBand support was not widely pursued outside of the HPC community.

New options are emerging, however, to employ the high-speed transport capabilities of InfiniBand while retaining Ethernet and Fibre Channel as the network and storage interconnects. This hybrid approach incorporates InfiniBand HCAs within servers as the hardware interface, but presents virtual NICs and HBAs to the operating system and applications. An external top-of-rack switch connects to all servers via InfiniBand links, and connects to networks and storage via conventional Ethernet and FC.

The approach capitalizes on one of the distinctive benefits of InfiniBand's design: the interface was originally intended to virtualize nearly every type of I/O found in data centers, including both FC and Ethernet, a feature that 10 Gb Ethernet does not yet support. Because InfiniBand is already shipping 10 Gb and 20 Gb HCAs and its roadmap calls for 40 and 80 Gb throughput, the interface has ample bandwidth now and more becoming available in the future.

Adding to InfiniBand's appeal is that it is a highly reliable switched fabric topology in which all transmissions begin or end at a channel adapter. This allows InfiniBand to deliver much higher effective throughput than 10 Gb Ethernet, whose effective throughput is generally viewed as topping out at about 30 percent.

While InfiniBand possesses QoS features similar to 10 Gb Ethernet (in that it can prioritize certain types of traffic at the switch level), administrators can also reserve specific amounts of bandwidth for virtualized Ethernet and FC I/O traffic on the HCA. Bandwidth can then be assigned to specific VMs based on their vNIC or vHBA, so administrators can increase or throttle back the amount of bandwidth to a VM based upon its application throughput requirements.
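Throttling a single vNIC or vHBA to a set rate is commonly modeled with a token bucket, a generic rate-limiting technique. The sketch below shows the idea only; it is not a description of how any InfiniBand HCA or its driver implements bandwidth control, and the class name, rate, and burst size are hypothetical.

```python
class TokenBucket:
    """Rate limiter: tokens accrue at a fixed rate up to a burst ceiling."""

    def __init__(self, rate_bytes_per_s, burst_bytes):
        self.rate = rate_bytes_per_s      # sustained rate allowed
        self.capacity = burst_bytes       # largest burst allowed
        self.tokens = burst_bytes         # bucket starts full
        self.last = 0.0                   # time of the previous check (seconds)

    def allow(self, size_bytes, now):
        """Return True if a frame of size_bytes may be sent at time `now`."""
        elapsed = now - self.last
        self.last = now
        # Refill tokens for the time that has passed, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False


# Hypothetical vNIC limited to 1000 bytes/s with a 1500-byte burst.
vnic_limit = TokenBucket(rate_bytes_per_s=1000, burst_bytes=1500)
print(vnic_limit.allow(1500, now=0.0))  # bucket is full, frame is sent
print(vnic_limit.allow(1500, now=0.5))  # only 500 tokens refilled, frame waits
print(vnic_limit.allow(1500, now=1.5))  # refilled to 1500, frame is sent
```

Raising or lowering the rate parameter corresponds to an administrator increasing or throttling back the bandwidth available to a particular VM.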

The cost of InfiniBand HCAs has also been reduced by its adoption in HPC environments: 10 and 20 Gb InfiniBand HCAs are priced as low as $600 and are available from several vendors, including Cisco Systems, Mellanox Technologies, and QLogic Corporation. One new component that enterprise companies may need in order to connect their existing Ethernet data networks and FC storage networks to server-based InfiniBand networks is an InfiniBand-based solution such as Xsigo Systems' VP780 I/O Director. These new solutions provide virtual I/O while consolidating both Ethernet and Fibre Channel traffic on a single InfiniBand connection to the server. Because the traffic to the respective networks is brought out through separate ports, the approach maintains network connections that are logically and physically distinct.

Ten Gb Ethernet and InfiniBand present the best options for companies that need to improve network I/O management for the growing number of VMware servers in their environment. Both topologies minimize the number of network cards needed to virtualize I/O while providing sufficient bandwidth to support the VMs on the physical server. However, for data centers that must guarantee application performance, require segregated networks, and need the highest levels of application and network uptime, InfiniBand is emerging as the logical topology to use to virtualize I/O.