
Build 2020: Azure News

Paul Schnackenburg looks at some of the highlights in the Azure space, and how you can apply some of these new technologies and improvements to your cloud deployments.

The Microsoft Build 2020 conference has been and gone. Of course, this year it was delivered virtually, which isn't the same as an in-person conference. On the other hand, the virtual delivery (a 48-hour continuous livestream) and the fact that it was free opened the conference to many more attendees (230,000 people registered) who never would have been able to travel to the U.S. to attend in person.

This article will look at some of the highlights in the Azure space, and how you can apply some of these new technologies and improvements to your cloud deployments.

Azure Synapse

Combining enterprise data warehousing, or DW (think historical data in traditional relational databases that you analyze after the fact to predict the future), and Big Data analytics (dump unstructured data into huge datastores and create the schema when you need to read and analyze it) into a single solution is the goal of Synapse, which we first met at Ignite in November 2019. One challenge is managing operational data alongside the analysis of that data. Traditionally, there are two options. First, you run separate systems, which means you have to set up complex extract, transform and load (ETL) pipelines to move the data from the operations side into the DW for later analysis. Second, you overprovision your systems to handle both the day-to-day transaction side of the database and the analysis, which is costly.

At Build, Microsoft introduced Synapse Link, which provides a cloud-native hybrid transactional analytical processing (HTAP) solution. It links Cosmos DB (with Azure SQL/PostgreSQL/MySQL coming in the future) to Synapse, connecting current data directly into your historical analysis solution. You can also link Synapse with Power BI to create interactive dashboards.

Synapse and Power BI (courtesy of Microsoft)


Azure Cosmos DB

The coolest (and most expensive?) planet-spanning database added a few new features as well. The always free tier of 5 GB of storage and 400 request units per second (RU/s) will help with development and test scenarios as well as small production applications.

If you have a workload that is idle most of the time with spikes in request processing, the new serverless option might be a good fit. Instead of provisioning a set RU value up front, you simply pay for the data stored plus the RUs used. Cosmos DB serverless will be available in preview in the next couple of months.

On the other hand, if you have a high-throughput workload that nevertheless varies, the autoscale option might be cost-effective. You define a maximum RU value and the database will automatically scale between 10 percent of that value and the max. You are then billed for the highest RU value the system used each hour. Autoscale (known as autopilot during the preview) is now Generally Available (GA), allowing you to set custom values for max RUs as well as enable it for existing databases and containers.
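The billing rule described above can be sketched in a few lines of Python. This is only a simplified model of the rule as stated here: the function name and the sample numbers are made up for illustration, and the real service may scale in coarser increments than this sketch assumes.

```python
def billed_rus_per_hour(max_rus, hourly_peak_demand):
    """Return the RU/s value billed for each hour under a simplified autoscale model.

    max_rus: the maximum RU/s you configured (assumed to be a multiple of 10).
    hourly_peak_demand: the highest RU/s the workload asked for in each hour.
    """
    floor = max_rus // 10  # autoscale never drops below 10 percent of the max
    billed = []
    for peak in hourly_peak_demand:
        # Clamp the hour's peak between the 10 percent floor and the configured max.
        billed.append(min(max(peak, floor), max_rus))
    return billed


# Hypothetical workload: idle, light, busy, and an hour that hits the ceiling.
print(billed_rus_per_hour(4000, [0, 250, 1800, 5200]))
```

With a 4,000 RU/s maximum, the idle and light hours are billed at the 400 RU/s floor, the busy hour at its actual peak, and the last hour at the 4,000 RU/s cap.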

For additional security, you can now use your own encryption keys that you control, as well as configure Private Link for Cosmos DB to restrict access to your private IP addresses only. The SLA when you have multiple regions as writable endpoints for your Cosmos database is now 99.999 percent.
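To put "five nines" in perspective, here is a small arithmetic sketch that converts an availability percentage into the downtime it would permit. It is plain arithmetic over a 365-day year, not the way the SLA itself is measured, and the function name is made up for illustration.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(availability_percent, period_minutes=MINUTES_PER_YEAR):
    """Return how many minutes of downtime a given availability percentage leaves room for."""
    return (100 - availability_percent) / 100 * period_minutes


# 99.999 percent works out to a little over five minutes per year.
print(round(allowed_downtime_minutes(99.999), 2))
# 99.99 percent, by comparison, allows roughly ten times as much.
print(round(allowed_downtime_minutes(99.99), 2))
```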

Azure Kubernetes Service

Woolworths, a large supermarket chain here in Australia, has been using Windows containers to modernize its applications to run in Kubernetes (K8s), which turned out to be important when the pandemic hit and traffic to its online shopping site increased by 330 percent. The ability to run Windows and Linux containers side-by-side in a K8s cluster (on different hosts) opens up a lot of options, particularly for taking older ASP.NET applications and migrating them to K8s.

Developers who are looking at modern approaches are often daunted by microservices patterns -- the old joke is that if you take an application and make it into a distributed system, you now have two problems instead of one. Distributed Application Runtime (Dapr) is an open source project from Microsoft that attempts to help developers by providing building blocks for communication between services as well as connecting to external systems through a sidecar architecture (either as a container or as a process).
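As a rough sketch of what the sidecar model means in practice: your code talks to the Dapr sidecar on localhost over HTTP, and the sidecar finds and calls the other service. The example below only builds such a request and does not send it, so it runs without Dapr installed. The `/v1.0/invoke/<app-id>/method/<method>` path is Dapr's service invocation endpoint and 3500 is its default HTTP port; the app ID `orders`, the method `checkout` and the payload are made up for illustration.

```python
import json
import urllib.request
from urllib.parse import quote

DAPR_HTTP_PORT = 3500  # Dapr's default sidecar HTTP port; configurable in a real deployment


def dapr_invoke_url(app_id, method, port=DAPR_HTTP_PORT):
    """Build the local sidecar URL used to invoke a method on another service."""
    return f"http://localhost:{port}/v1.0/invoke/{quote(app_id, safe='')}/method/{quote(method)}"


def build_invoke_request(app_id, method, payload):
    """Prepare (but do not send) a JSON POST to the sidecar."""
    return urllib.request.Request(
        dapr_invoke_url(app_id, method),
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical call from a web front end to an "orders" service.
request = build_invoke_request("orders", "checkout", {"cart_id": 42})
print(request.get_method(), request.full_url)
```

Passing the prepared request to `urllib.request.urlopen` would send it, provided a sidecar is listening on that port. The calling code never needs to know where the `orders` service actually runs, which is the point of the building block.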

Arc

Azure Arc is a very interesting technology that actually comes in three flavors. The first is Arc-enabled servers: Windows and Linux machines, both VMs and physical, on-premises and in other clouds, that can be managed through the Azure control plane. You can use Azure Policy and security baselines across all your workloads and manage them in a single place.

Secondly, there's Arc for databases, which will let you manage SQL databases in your datacenter, again through Azure's control plane (portal, API and PowerShell / CLI).

Finally, there's Arc for K8s, which was released in public preview at Build. This lets you manage and govern your K8s clusters from Azure, whether they're in OpenShift, in other clouds or in Azure Stack Hub. You can inventory and organize all your K8s clusters and applications in a single pane as well as apply Azure Policy across all your deployments. You can also push out configurations (including YAML configuration files and Helm charts), which can be stored in any Git location (GitHub, GitHub Enterprise, Git on-premises etc.).

Azure ARM Templates and Language

There was a time over a year ago when there was very little love shown for Azure Resource Manager (ARM), the underlying templates and control plane that power all of Azure. This has changed and Microsoft is pushing hard to improve the experience. For example, the new Deployment Scripts (preview) experience combines ARM templates with the ability to run a PowerShell or Bash script from the template itself.

Another sign that ARM is growing up is the new ARM Testing Toolkit (ARM-TTK), which does static analysis on your template code. There's another feature that's a favorite of mine -- what-if. This lets you preview the changes a template would make without actually deploying resources, which is a real time saver. You can also combine this with the -Confirm option, which runs what-if first and then asks you to confirm before it actually runs the deployment.
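The idea behind what-if is easy to picture as a comparison between what is deployed now and what the template describes. The toy sketch below is not how Azure implements it and does not call Azure at all; the resource names, properties and change labels are made up for illustration.

```python
def what_if(current, desired):
    """Classify each resource by comparing deployed state with the template's desired state.

    Both arguments map a resource name to a dict of its properties.
    Nothing is changed; the function only reports what a deployment would do.
    """
    changes = {}
    for name, properties in desired.items():
        if name not in current:
            changes[name] = "create"
        elif current[name] != properties:
            changes[name] = "modify"
        else:
            changes[name] = "no change"
    for name in current:
        if name not in desired:
            # Deployed but not mentioned in the template: this sketch leaves it alone.
            changes[name] = "untouched"
    return changes


# Hypothetical resource group: one storage account and one VM are already deployed.
deployed = {"storage1": {"sku": "Standard_LRS"}, "vm1": {"size": "B2s"}}
template = {"storage1": {"sku": "Standard_GRS"}, "vnet1": {"prefix": "10.0.0.0/16"}}

for resource, change in what_if(deployed, template).items():
    print(f"{resource}: {change}")
```

Seeing that a template would modify `storage1` and create `vnet1` before anything is touched is exactly the kind of early warning that makes the feature a time saver.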

A sore point has been good management of templates across an organization, as the dependency on them grows when businesses adopt infrastructure as code. The new Template Specs (preview coming in the second half of 2020) should help. It's a private registry of templates that tracks versioning and lets you group multiple templates and supporting files together for deployment and sharing. There's also a new language coming (codename Bicep ... get it? Arm -- Bicep). Today ARM templates are built on JSON, which means they're not very readable. It will be interesting to see what they come up with; they're looking to open source the 0.1 version in the next few months.

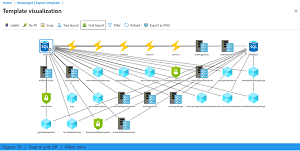

The new ARM template visualizer, available both in Visual Studio / Code and in the preview Azure portal, is a powerful way of seeing all the components in a template. It also lets you edit the labels, try different layouts, filter out objects you don't need to see and export the diagram as a PNG graphic.

ARM template visualizer


Conclusion

Obviously there are many more improvements and new features that I couldn't cover here. Highlights include: new rules for routing with Azure Front Door (AFD); the Confidential Computing DCsv2 VMs being generally available, with the Azure Attestation service in preview; Azure Migrate supporting direct migration of ASP.NET applications from on-premises to AKS; Azure Quantum Service and the Q# language going into public preview later in 2020; and AKS coming to Azure Stack (the "buy an integrated hardware rack of servers and run Azure in your own datacenter" solution). I'm an IT Pro, not a developer, but since Build is a developer conference there were a few highlights for them as well.

About the Author

Paul Schnackenburg has been working in IT for nearly 30 years and has been teaching for over 20 years. He runs Expert IT Solutions, an IT consultancy in Australia. Paul focuses on cloud technologies such as Azure and Microsoft 365 and how to secure IT, whether in the cloud or on-premises. He's a frequent speaker at conferences and writes for several sites, including virtualizationreview.com. Find him at @paulschnack on Twitter or on his blog at TellITasITis.com.au.