It's hard to tell just how many PaaS vendors there are out there. I found one list that includes 20, so that's a starting point. Microsoft Azure, Amazon Web Services Elastic Beanstalk, Google App Engine, and Force.com PaaS from Salesforce.com are all, of course, prominent players.

Currently, when people think about PaaS, they associate it with the Web development community, but Lucas Carlson, founder and CEO of AppFog, has a much grander view of PaaS and its role in the cloud. In his vision, PaaS provides the last mile to the cloud and will expand the cloud's presence by bringing many more developers to it, while promoting SaaS and IaaS opportunities.

"Clearly, to deploy large SaaS implementations, you need PaaS technology to power them," Carlson declares, adding, "PaaS is the best sales tool for IaaS." He goes on to say that PaaS is more "ground-shifting" and provides greater opportunities than virtualization, and his company already has "tens of thousands of customers." All this, and the golden age of PaaS is just beginning. As he puts it, "To me, it's a greenfield opportunity."

You'd never guess this guy is the boss of a PaaS company, would you?

For its part, AppFog recently took the wraps off an add-on program for third-party service providers that provisions the accounts purchased by developers via a single interface, and displays partner information for the customer to "easily integrate additional functionality from these third-party services into the applications they build on the AppFog platform. This will make it easier still for developers to deploy and scale web-based applications without having to become part-time IT support on the side."

Carlson believes that the PaaS company with the best ecosystem will win the market, and toward that end, AppFog has added MongoLab--which will offer its hosted MongoDB to the AppFog community--and New Relic, which says its Web application performance tool gives developers "deep, 24x7 visibility from the end-user experience all the way to a line of application code, which is a crucial capability for developers using AppFog for deploying apps to the cloud."

Posted by Bruce Hoard on 01/06/2012 at 12:48 PM

I like keeping up with users, so when this case study from Avere Systems popped up, I was intrigued because it described a straightforward solution to a vexing problem that more than one VDI system has encountered.

It all starts with the Belchertown school district in Massachusetts, which thought it was making all the right moves. Its IT team put together a system based on five Cisco UCS systems running VMware View, connected to 10 datastores hosted on a NetApp FAS2020 and supporting one-terabyte volumes with a 20 percent snapshot reserve. Most VMDK files are held to 40GB and are typically linked clones of one of a few golden master images.

This configuration supports PowerSchool, a virtualized application used by teachers and students alike to access class materials, log attendance, and store and back up their work and grades. All in all, a locked-and-loaded system--until the boot storm hit when some 250 users logged on at the same time.

It typically took 15 minutes for the storm to subside. During that time, students were unable to access their class documents, teachers got errors when they tried to save their work, and the school district's small but valiant IT team couldn't keep up.

They evaluated bigger, newer NetApp filers, but the costs were prohibitive, and the time required to implement them was unacceptable. The remedy turned up at a VMware user group meeting in Maine, where they discovered Avere's NAS Optimization solutions.

It was a eureka moment for Scott Karen, the school district's director of technology. As he puts it, "Avere was able to get us an FXT Series node for evaluation almost immediately. We tested it, and found it eliminated the boot storm and turned 15 minutes of logging in per class into about three minutes with no more write errors or corrupted files. Overall latency is no longer an issue. And it didn't require me to rethink and re-engineer my storage network."

Karen moved quickly, and within three weeks of choosing Avere, he had the FXT 2500 two-node cluster up and running. The nodes run in read/write mode during the school day, and at 6:00 P.M. the cluster automatically switches to read-only mode to support the district's existing backup strategy with Veeam. Twelve hours later, at 6:00 A.M., the cluster switches back to read/write mode for maximum performance during the school day.
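The schedule boils down to a simple time-of-day rule. Here is a minimal Python sketch of it, assuming a fixed local-time window of 6:00 P.M. to 6:00 A.M. for read-only mode; `cluster_mode` is a hypothetical name for illustration, not part of any Avere interface.

```python
from datetime import time

# Hypothetical schedule constants, assuming the 6 P.M. to 6 A.M. window above.
READ_ONLY_START = time(18, 0)   # 6:00 P.M.: Veeam backups can run
READ_WRITE_START = time(6, 0)   # 12 hours later: the school day begins


def cluster_mode(now: time) -> str:
    """Return the mode the cluster would be in at a given time of day."""
    # The read-only window wraps past midnight, so test both sides of it.
    if now >= READ_ONLY_START or now < READ_WRITE_START:
        return "read-only"
    return "read/write"


for t in (time(8, 30), time(17, 59), time(18, 0), time(2, 15), time(6, 0)):
    print(t.strftime("%H:%M"), cluster_mode(t))
```

Because the window crosses midnight, the check uses "or" rather than a single range comparison; a window that stayed within one day would use "and".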

The FXT 2500 cluster is reliable, doesn't need tweaking, and Karen hasn't had to touch it since it was implemented. He refers to the nodes as "magic boxes," adding, "Because of Avere's tiered storage architecture, all future disk purchases can be lower cost SATA drives rather than higher cost SAS, so Avere has not only solved our performance problems, but will be saving us money going forward."

It is, as they say, all good.

Posted by Bruce Hoard on 01/04/2012 at 12:48 PM

The Virtual Geek is an EMC blog written by a funny guy who is coincidentally known as the Virtual Geek.

His Official Unofficial VMware Storage Survey Results came out last week, and there are, as they say, some interesting data points here that dovetail with my "vSphere 5: Slow to Roll?" blog, which also posted last week. In it, I asked the question, "Could it be that we are underestimating the rigors of implementing vSphere 5?"

Virtual Geek answered that question indirectly when he revealed that 59.2 percent of the 1,935 respondents said they were already using vSphere 5. That's a lot of users implementing vSphere 5 posthaste, since it only went GA in late August. Working backwards, 85 percent of respondents identified themselves as vSphere 4.1 users, followed by 24.3 percent saying they were vSphere 4.0 users, 12 percent saying they were on VI3.5, and 1.4 percent reporting they were pre-VI3.5 users.

Methodology nugget: The number of virtual machines at respondent sites ranged from four to 200,000.

Methodology non-nugget: Virtual Geek did not describe respondents in a meaningful fashion, other than saying they came from 65 unique countries and 941 unique cities.

In response to the question, "Do you use any other Virtualization Technologies?", 48.8 percent cited the rapidly up-and-coming Hyper-V, followed by 26.5 percent noting XenServer, which, it would seem, is benefiting handsomely from its close relationship with VDI meister XenDesktop.

Virtual Geek's survey also revealed that a lot of the low-hanging virtualization fruit has indeed been picked. To wit, when asked what percentage of their x86 environments were virtualized, 11.3 percent said 100 percent, 32.2 percent said 90 percent, 23.7 percent said 80 percent, 18.3 percent said 60 percent, 8.3 percent said 40 percent, 4.2 percent said 20 percent, and 2 percent said 10 percent.

With a nod toward Virtual Geek, I point out that EMC topped the list of replies when respondents were asked to check off their vendor of choice in virtualized server environments. EMC basically lapped the field with 41.1 percent of the vote, followed by the usual storage suspects, NetApp, HP, Dell, IBM and Hitachi -- none of which received even half the responses chalked up by EMC. It's good to be King.

Posted by Bruce Hoard on 12/20/2011 at 12:48 PM

Now that Veeam has announced that its Backup & Replication v6 product provides native support for Hyper-V, the company is looking to ingratiate itself with IT pros who have Microsoft certifications, and expand sales by offering them free software licenses for demo use in home labs. Veeam did the same thing last year for VMware-certified pros.

Overall, these free, not-for-resale, two-socket software licenses are now available to VMware vExperts, VMware Certified Professionals, VMware Certified Instructors, VMUG members, Microsoft Most Valuable Professionals, and Microsoft Certified Professionals.

Veeam has been extremely bullish on Backup & Replication dating back to its pre-production days, and says it was downloaded over 15,000 times during its first week of availability.

In a canned quote, Microsoft MVP Derek Schauland seemed jubilant, saying, "Veeam for the Microsoft community? Awesome! Having a program giving NFR licenses of Veeam software to the Microsoft community will be invaluable to the home lab and test needs of IT pros in pursuit of training, blogging and other activities."

The licenses are available from Veeam.

Posted by Bruce Hoard on 12/15/2011 at 12:48 PM

I realize that I recently blogged on the results of a survey from Nasuni about cloud-based data security, but I thought readers would be interested in the company's latest research findings, which are based on the results of an ongoing 26-month stress test of 16 major cloud storage providers (CSPs).

According to Nasuni--which uses raw cloud storage from these companies--only six of the 16 CSPs passed the test, meaning they provided the "minimum level of performance, stability, availability and scalability that organizations need to take advantage of the cloud for primary storage, data protection and disaster recovery." The six with passing grades are Amazon S3, AT&T Synaptic Storage as a Service (powered by EMC Atmos), Microsoft Windows Azure, Nirvanix, Peer 1 Hosting (also powered by EMC Atmos), and Rackspace Cloud.

The two top performers are Amazon S3, which ranked highest across the board, and Azure.

Nasuni will not reveal the names of the 10 CSPs that flunked out.

Regarding methodology, the tiered tests are designed such that each CSP has to pass an initial test before moving on to the next one. The five testing stages are:

- API integration, which ensures it is possible to test the service

- Unit testing, wherein larger software components are broken down into their building blocks--units--and then tested for inputs, outputs and error cases

- Performance testing, which measures the speed of cloud interaction, meaning how rapidly data moves back and forth to the cloud, and the reaction to high stress levels

- Stability testing, which measures the prolonged reliability of CSPs

- Scalability testing, which reveals how the CSPs react to high object counts
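The gating described above, where a provider must clear each stage before moving on to the next one, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Nasuni's test harness: `run_tiered_tests` and the toy pass/fail results are hypothetical, and a real stage would exercise the provider's API rather than look up a dictionary.

```python
from typing import Callable, Dict, List, Tuple

# A stage is a (name, check) pair; check(provider) returns True on a pass.
Stage = Tuple[str, Callable[[str], bool]]

STAGE_NAMES = ["API integration", "Unit testing", "Performance testing",
               "Stability testing", "Scalability testing"]


def run_tiered_tests(provider: str, stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first failure.

    Returns (passed_all, names_of_stages_passed).
    """
    passed: List[str] = []
    for name, check in stages:
        if not check(provider):
            return False, passed  # later stages are never attempted
        passed.append(name)
    return True, passed


def stages_from(results: Dict[str, bool]) -> List[Stage]:
    """Build stage checks from a hypothetical table of pass/fail results."""
    return [(name, lambda provider, n=name: results[n]) for name in STAGE_NAMES]


# Toy results for an imaginary provider that fails stability testing.
toy = {name: True for name in STAGE_NAMES}
toy["Stability testing"] = False
ok, passed = run_tiered_tests("example-csp", stages_from(toy))
print(ok, passed)
```

The early return is the point of the design: a provider that fails stability testing never reaches scalability testing, which matches the "pass before moving on" rule.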

"Though Nirvanix was 17% faster than Amazon S3 for reading large files, and Microsoft Azure was 12% faster when it comes to writing files, no other vendor posted the kind of consistently fast service across all file types as did Amazon S3," Nasuni said.

In addition, S3 had the lowest number of outages and the best uptime, and was the only tested company to register a 0.0% error rate in both writing and reading objects during scalability testing. Nasuni also said that while Azure has a slightly faster ping time than S3--which Nasuni attributes to the fact that S3 is much more heavily used than Azure--S3 still maintained the lowest variability.

Just as an aside, I find it interesting that in its current company description appearing at the bottom of its press releases, Nasuni does not mention the word "cloud" even once, while just over a year ago, in early October, it started out a new product announcement for the company's Nasuni Filer 2.0 by calling itself the "creator of the storage industry's leading cloud gateway." Nowadays, Nasuni refers to itself as "a next generation enterprise storage company."

More information on the Nasuni Stress Tests is available at www.nasuni.com/cloudreport.

Posted by Bruce Hoard on 12/13/2011 at 12:48 PM

Could it be that we are underestimating the rigors of implementing vSphere 5? I ask that because during a briefing I had with vKernel this week, when I asked Product Marketing Manager Alex Rosemblat what he was hearing about vSphere 5 migrations, he replied, "We have very few customers who are using it extensively yet." This, from a guy whose company sells heavily into dedicated VMware environments.

I'm not implying that there are serious hidden problems here. After all, vSphere 5 officially hit the streets in late August, only some three months ago, but it just seems a little odd that Rosemblat hasn't felt a stronger product pulse for the latest and greatest iteration of VMware's primo virtualization environment. He did say that some users were doing pilots, so maybe IT organizations are making sure they've got vSphere 5 down pat via a proof-of-concept approach before they mount a serious migration.

Here at Virtualization Review, we have been doing our best to keep readers abreast of this hot topic via a new, dedicated vSphere 5 page, and blogs from our How-to Guy, David Davis, and Virtual Insider Eli Khnaser. David most recently wrote a blog entitled, "How to Monitor vSphere 5 vRAM Pools," while Eli produced "ESX-to-ESXi Migration Key to Success? The ESX System Analyzer."

As vSphere 5 gains momentum, we will continue to report the news and analyze the issues on this pressing topic.

Posted by Bruce Hoard on 12/08/2011 at 12:48 PM

iWave Software offers an alternative to users who want to develop their own cloud-based storage services as opposed to hiring a service provider for the task. In addition to giving companies the comfort of knowing they have architected their own, on-demand, cloud storage solutions, iWave Storage Director 1.5 adheres to the immutable rule that states "Thou shalt not displace legacy storage infrastructures."

Storage Director 1.5 caters to budget-sensitive customers by automating the provisioning, reclamation and remediation tasks associated with storage. It also gives storage admins a self-service option, letting them offer automated storage services to storage consumers in private storage cloud environments. In this way, admins can cost-efficiently leverage unified workflows across vendor products and within organizationally defined policies for regulatory compliance. Bottom line: Fewer admins are required, and those who remain can focus on other, more core-competency-related tasks.

Reduced storage outages are another benefit of Storage Director 1.5, because it automatically applies best practices to each provisioning request, eliminating configuration errors during provisioning. Throw in finding and reclaiming unused storage, and improving end-user satisfaction by cutting the time required to provision new storage from weeks to hours, and you have a full solution set.

Company quote: "Our solution gives storage administrators the ability to develop a fully automated storage services catalog based on best practices, making storage easy for users to consume and for administrators to manage."

Posted by Bruce Hoard on 12/06/2011 at 12:48 PM

Gone are the days of DR tests being done every--say, three months, no make that every six months, no maybe once a year--but it costs so darned much and takes so long, let's just blow it off. Well, those days aren't gone for everybody. In fact, as far as we can tell, they are only gone for customers of VirtualSharp, which assessed the sorry state of DR testing and came up with a product--ReliableDR 3.0 in its latest incarnation--that handles the entire test process, end to end, automatically. No muss, no fuss.

After hearing how one of VirtualSharp's Fortune 20 insurance customers developed an ongoing, automatic DR test that runs every three hours, eight times a day--that is smokin'--I'm inclined to believe VirtualSharp CEO Carlos Escapa when he refers to his agentless product as "a paradigm shift altogether."

More good news: all that productivity comes bundled with savings of somewhere in the neighborhood of $50,000 to $100,000 for that prescient F20 customer, who can now stay home and pull for the Green Bay Packers rather than worrying about DR tests that previously required a team of application owners to up and head off to a SunGard DR center for a gala three- or four-day weekend of humdrum walkthroughs.

Escapa says his agentless product "delivers an integrated, virtual, disaster recovery solution across the IT stack, from storage to application, that can be delivered for cloud environments using a zero-footprint, self-service architecture." It's good to be king.

ReliableDR 3.0 improves on its predecessor by adding multitenancy, a Web-oriented architecture, application-specific SLAs, role-based access control and an embedded dashboard (probably from a Mercedes).

The product will be available during Q1 of 2012 directly from VirtualSharp and its authorized channel partners. Existing 2.x customers will be entitled to a free upgrade to 3.0 based on their current maintenance agreements.

Question: Would your company benefit from automated DR testing?

Posted by Bruce Hoard on 12/01/2011 at 12:48 PM

Cloud storage provider Nasuni is fighting the good fight when it comes to selling cloud storage. As everybody knows, a lot of users are still hesitant to move their data offsite and into the cloud, but it does happen, or Verizon would not have acquired CloudSwitch.

In a recent Nasuni study of 451 IT decision makers at North American enterprises, respondents echoed concerns that many companies have stressed: 81 percent of respondents are concerned about data security in the cloud, while 48 percent are concerned about the level of control over cloud-based data storage. The survey also revealed that only 43 percent of respondents plan to store their data in the cloud over the next year.

So how is this dire news good for Nasuni? According to Product Evangelist Chris Glew, Nasuni is ready to ride to the rescue if overriding concerns about cloud storage push users into buying consumer storage solutions that are less secure than the enterprise-grade cloud storage solutions Nasuni offers.

"Our recent survey makes painfully clear the quandary the cloud presents to IT leaders," Glew opines. "They clearly understand the promise of cloud storage for cost savings, off-site backup, unlimited scale, simpler IT management, and on-demand provisioning, but they are also rightfully concerned about the security of their data and whether they have control over it at all times."

Which takes us back to the longstanding tendency of rogue users who like the idea of a technology--such as cloud storage--to opt for lower-quality consumer options without IT's permission. By "consumer," Glew means services that are typically used to share information among friends--and are potentially disastrous for businesses.

"The survey demonstrates the need for a new, cost-effective approach to storage that harnesses the unlimited capacity of the cloud in a way that guarantees data is always secure, always manageable, and always under IT's control," says Glew.

Nasuni is only too happy to help users save themselves from their penurious ways.

Posted by Bruce Hoard on 11/29/2011 at 12:48 PM

This year at Thanksgiving, I am thankful for many things, professional and personal. Professionally, I am thankful for:

My job: I have a great job that is very interesting and satisfying. There are a lot of people living in this country who are unemployed and suffering. A job is a precious thing.

My boss: Doug Barney, our group VP, conceived of Virtualization Review during a series of lunch meetings and briefings. Then he hired me, told me what to do, and left me alone to do it. He's there if I need him, which is nice. A good boss is also a precious thing.

Virtualization: When you step back for a minute and think about it, virtualization is a way cool technology with a track record that is pretty amazing. A lot of money has been saved, a lot of productivity has been created, and a lot of interesting companies have fleshed out the virtualization ecosystem.

VMware: VMware is the center of gravity in the virtualization and cloud computing industries, a remarkable success story, and a great company to love/hate. They should be very thankful to have a guy like Paul Maritz at the helm.

The people: This is a big one. On the vendor side I am thankful for the never-ending array of entrepreneurs, CEOs, CTOs, and various other executives I meet with over the phone and in person. Turning to analysts, I have found that not everyone has been created equal--that's why I really value the good ones. When it comes to PR pros, I am happy to say that almost all of them are friendly, helpful, and knowledgeable, and I couldn't do my job without them.

Readers: Without you, gentle readers, the whole show grinds to a halt. You are not always easy to read, which can be frustrating, because my overriding goal is to inform you and satisfy your knowledge needs. In a way, I serve at your pleasure, so please, don't spare the comments after you read my ramblings.

Happy Thanksgiving!

Posted by Bruce Hoard on 11/17/2011 at 12:48 PM

SunGard Availability Services rolled out its Managed Recovery Plan designed to help large enterprises focus on primary production operations as opposed to optimizing application recovery. This Recovery-as-a-Service offering, which serves both physical and virtual environments, can be hosted from various locations and relies on a relationship between a remote SunGard recovery expert and the customer's IT operations.

The "recipe for recovery," as SunGard puts it, goes beyond support for x86 systems and cloud-based applications to also include mainframes, Solaris and other technologies. "With this service, SunGard experts manage the entire recovery lifecycle for organizations, helping lower the cost, burden and risk in the recovery process," the company said.

The program includes planning, scoping, implementing, testing and operating recovery processes, helping customers identify and address root cause recovery pain points. These pain points include change management for disaster recovery plans and procedures, and staff availability and skill levels needed to support testing and recovery activities.

There are four primary Managed Recovery Plan components. The first is definition and maintenance of recovery plans, procedures and recovery infrastructure configurations. The second is recovery procedure execution, including startup of operating system, network and backup servers. The third is recovery management during disasters, and the fourth is post-test reporting, including detailed review of test activities, gap analysis, recommendations for improvements, remediation plan, program status reporting, contract maintenance and updates.

Prices for the plan start in the range of $10,000.

Posted by Bruce Hoard on 11/15/2011 at 12:48 PM

NexGen Storage has come out of stealth mode with a mid-range SAN it built from the ground up to solve the shared storage mess and unleash the full power of virtualization. The NexGen n5 Storage System uses off-the-shelf hardware to achieve high VM density, resulting in what the new company claims is up to a 90 percent reduction in storage operating expenses.

The secret sauce, of course, is not in the hardware. "It's all about the software we load on it," says NexGen CEO and cofounder John Spiers. Spiers has been around the start-up block before, with fellow LeftHand Networks cofounder, and now NexGen cofounder and CTO, Kelly Long. Together, they sold LeftHand to HP for a cool $360 million in 2008. It may not be the $1.4 billion Dell dished out for EqualLogic, but it's still a decent payday.

Citing the litany of shortcomings associated with storage systems, including scale-out, solid state and the high-end midmarket, NexGen refers to itself as a second-generation storage vendor that is in effect coming in to clean up the mess made by its overly expensive and complex predecessors. For example, speaking of solid state, NexGen VP of Marketing Chris McCall says, "We see it as a component, but it's definitely not a panacea."

NexGen says managing capacity is easy, while managing performance is much more difficult because of the complexity, insufficient tools, difficulties associated with provisioning/allocation, and the fact that "everything impacts everything else."

The company hits on the familiar theme of breaking down silos of information so that it can be centrally maintained, controlled, virtualized and shared. In the case of NexGen n5, that means QoS that provisions performance just like capacity; Dynamic Data Placement that migrates data in real time to deliver guaranteed performance levels, and managed service levels that ensure each volume maintains its priority and performance levels at all times.

Spiers says his new system performs better because rather than placing solid state behind SAS connections and controllers--which degrades performance--it integrates solid state next to the CPU on the PCI bus, which was designed for memory speed. "That way," Spiers declares, "It can unleash the full performance potential of solid state storage, and achieve 43 percent lower cost/IOP."

According to NexGen, when combined, "PCI solid state and Phased Data Reduction enable NexGen n5 to deliver up to 76 times higher VM density than a typical disk drive deployment, resulting in up to 90 percent storage operating expense savings." Phased Data Reduction includes multiple phases leveraging advanced QoS capabilities so application performance is never impacted. In addition, it applies to all tiers--not just solid state--to deliver 58 percent lower $/GB without decreasing performance.

The suggested price for NexGen n5 is $88,000, and the system is available through resellers.

Posted by Bruce Hoard on 11/08/2011 at 12:48 PM

It would seem that hypervisor users are a restless bunch, if you believe Veeam's quarterly V-Index tracking system, which claims that 38 percent of enterprises using server virtualization, and 34 percent of those using desktop virtualization, have indicated they will change their primary hypervisors in the next 12 months.

Veeam claims the main concerns driving this potential hypervisor infidelity are rising costs, increasingly complex licensing models, and the improved features and maturity that other hypervisors now offer.

Let's start with complex licensing models, a.k.a. VMware. VMware customers might grump, and they might even hedge their bets by bringing in Microsoft Hyper-V gratis for a look if they are a Windows Server 2008 R2 SP1 shop. Even then, vSphere will continue to do the heavy server virtualization lifting, while the desktop load is divvied up between VMware View and Citrix XenDesktop. That works well enough for the overwhelming majority of VMware customers, who are willing to eat those rising costs cited by Veeam rather than worry about the allegedly improved features and maturity of competing hypervisors.

Looking at it from another perspective, as Gartner guru Chris Wolf told me a while back, hypervisors are becoming almost as "sticky" as database software. It's not that users can't just convert a VM, because vendors provide suitable tools for that task. The issue is the operational software--backup, security, capacity management and configuration management--that gets tied into the hypervisor. How do you untie those knots?

And then there is the decidedly unpleasant task of going back to the manager you just hit up for your existing system, and explaining to him how suddenly it is no longer such a good investment. Anybody want to volunteer for that?

Posted by Bruce Hoard on 11/03/2011 at 12:48 PM

Enhanced I/O has become something of a Holy Grail among vendors who are attempting to sell products that optimize virtualization and cloud environments, and among users, who are fuming over sub-par network performance. Enter Diskeeper, with its V-locity 3 Virtual Platform Disk Optimizer and "invisible background optimization."

Diskeeper portrays itself as a "virtual doctor" that alleviates latency pains induced by constricted I/O bottlenecks, sluggish VMs, laggardly migration, resource conflicts, unfulfilled storage capacity, and woefully slow backup speeds.

Via its V-Aware technology, V-locity 3 detects external resource usage from VMs and eliminates host server resource contention. For example, its CogniSAN technology detects external usage in shared storage environments such as SANs, which are frequently reviled for their negative impact on I/O. V-locity overcomes this liability by allowing "transparent optimization by never competing for resources utilized by other VMs over the same storage infrastructure."

The product is fully integrated with VMware ESXi and also supports ESX, as well as Microsoft Hyper-V. It further features an automatic space reclamation function, which zeros out unused data blocks on virtual disks, enabling virtual disk compaction.
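Why zeroing matters: once unused blocks on a virtual disk contain nothing but zeros, a compaction pass can drop them and simply recreate zeros on read. The Python sketch below illustrates that general technique under an assumed fixed 4KB block size; `compact` and `restore` are hypothetical names, and this is not how Diskeeper or VMware actually implement compaction.

```python
from typing import Dict, Tuple

BLOCK_SIZE = 4096  # assumed block size, for illustration only


def compact(disk: bytes, block_size: int = BLOCK_SIZE) -> Tuple[Dict[int, bytes], int]:
    """Keep only blocks that are not entirely zero.

    Returns a map of block index -> data for the non-zero blocks, plus the
    number of bytes reclaimed by dropping all-zero blocks.
    """
    kept: Dict[int, bytes] = {}
    reclaimed = 0
    for offset in range(0, len(disk), block_size):
        block = disk[offset:offset + block_size]
        if block.count(0) == len(block):  # every byte is zero
            reclaimed += len(block)
        else:
            kept[offset // block_size] = block
    return kept, reclaimed


def restore(kept: Dict[int, bytes], total_len: int, block_size: int = BLOCK_SIZE) -> bytes:
    """Rebuild the full disk image; dropped blocks come back as zeros."""
    out = bytearray(total_len)  # zero-filled
    for index, block in kept.items():
        start = index * block_size
        out[start:start + len(block)] = block
    return bytes(out)


# Three blocks: data, an all-zero (unused) block, then partly used data.
disk = b"A" * 4096 + bytes(4096) + b"B" * 100 + bytes(3996)
kept, reclaimed = compact(disk)
print(sorted(kept), reclaimed)
```

Note that the block with 100 bytes of data and 3,996 zero bytes is kept whole: compaction at block granularity can only reclaim blocks that are entirely zero, which is why zeroing out unused blocks first is what makes the space recoverable.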

Diskeeper, which has been around since 1981, claims to have 96 percent market share. According to product manager Damian Giannunzio, "We run the show because we innovate."

Posted by Bruce Hoard on 11/01/2011 at 12:48 PM

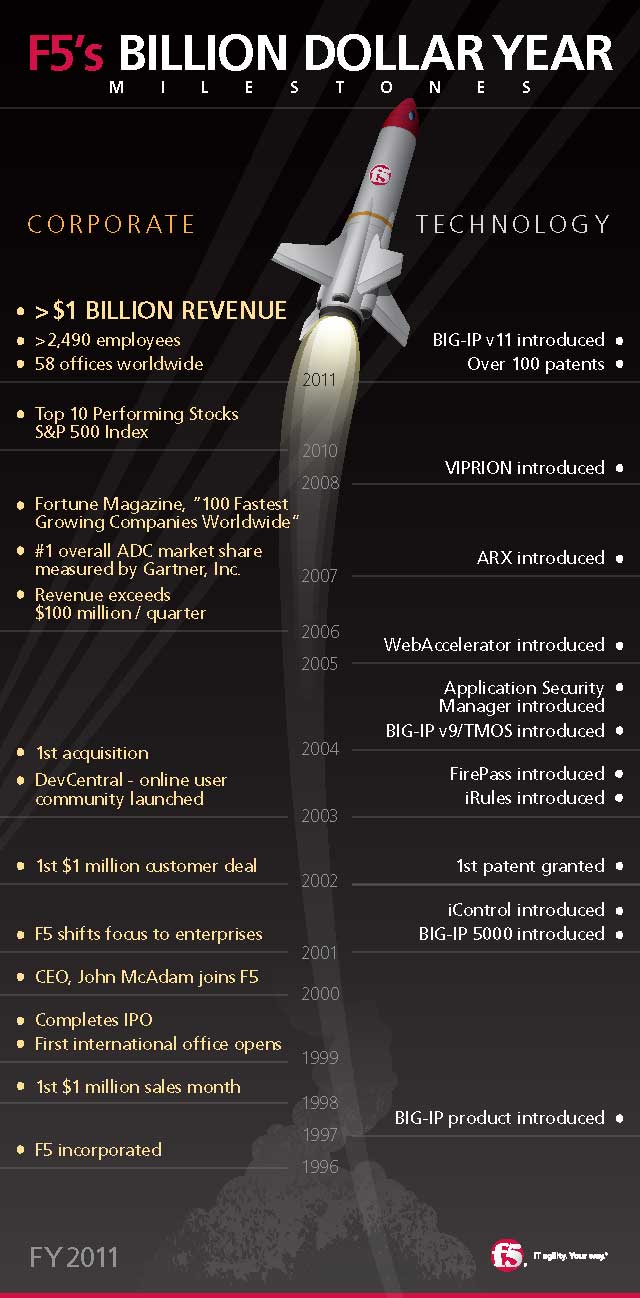

As the very cool infographic timeline details, application delivery controller company F5 Networks has exceeded $1 billion in revenue for its 2011 fiscal year. The company said it earned $314.6 million during Q4, an increase of eight percent over the $290.7 million* in the previous quarter, and 24 percent over the $254.3 million reported during Q4 of fiscal year 2010. Overall for fiscal year 2011, revenue jumped to $1.15 billion, an increase of 31 percent from $882 million in fiscal year 2010.
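The growth rates can be checked against the dollar figures with a short Python calculation. It uses only the numbers reported above, in millions of dollars; `pct_change` is just a helper name for the usual (new - old) / old formula.

```python
def pct_change(new: float, old: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Figures in millions of dollars, as reported above.
q4_fy11, q3_fy11, q4_fy10 = 314.6, 290.7, 254.3
fy11, fy10 = 1150.0, 882.0  # $1.15 billion, as rounded in the text

print(f"Q4 vs. Q3 FY2011: {pct_change(q4_fy11, q3_fy11):.1f}%")       # 8.2%
print(f"Q4 FY2011 vs. Q4 FY2010: {pct_change(q4_fy11, q4_fy10):.1f}%")  # 23.7%
print(f"FY2011 vs. FY2010: {pct_change(fy11, fy10):.1f}%")             # 30.4%
```

The quarterly figures round to the stated eight and 24 percent. Using the rounded $1.15 billion gives about 30.4 percent for the year, so the 31 percent figure presumably reflects F5's unrounded annual total.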

You'd think that F5 would want to crow about this impressive milestone, but CEO John McAdam played it close to the chest, stating in bare-bones PR speak that the Q4 results "reflect solid year-over-year gains across all regions and vertical market segments." In addition, he notes, product sales grew stronger during Q4, "driving product revenue up 10 percent sequentially and 20 percent year-over-year."

McAdam does point out the contributions by F5's scale-on-demand chassis products, the Viprion 2400 (designed for mid to large enterprises) and the 4400 (targeted at large service providers), as well as the impact of the availability of the company's TMOS v11 software for its Big-IP ADCs. The Big-IP line has been instrumental in F5's upward spiral.

Source: F5

F5 made its billion in a highly competitive market featuring competitors such as Cisco, Brocade, and Citrix (which focuses on using its ADCs with its own virtualization products). Other competitors include Radware, Zeus Technology, A10 Networks, Array Networks, Barracuda Networks and Crescendo Networks.

[*Corrected 11/1/11.--Editors]

Posted by Bruce Hoard on 10/27/2011 at 12:48 PM