The Citrix acquisition of Zenprise last week will go down as one of the coolest acquisitions in its history. Simply put, how often does a company acquire another and the only thing that needs to change in the product names is a single letter! Citrix can easily rename the three Zenprise flagship products as follows:

- XenPrise Mobile Manager

- Citrix XenCloud

- Citrix XenSuite

Considering Citrix has been standardizing on Xen in most of its product names, the marketing department should be thrilled with this acquisition.

There's more to that acquisition than just a cool marketing twist, of course. Citrix has become, in my opinion, the very first company to have an end-to-end end-user computing solution for the enterprise. I've been speaking and lecturing about the need for an EUC strategy. The user space has become very complicated and has been ignored for many years. It is time to bring datacenter-like disciplines, structures and thought processes to a world moving more and more towards mobile and towards the consumerization of IT. The standards we deployed in years past do not apply today and they will surely not apply tomorrow.

I break down my EUC strategy into these categories:

User Experience -- This is where Desktop Virtualization and physical desktop and laptop management come into play. Citrix, with its FlexCast model, does a wonderful job bringing different types of Desktop Virtualization capabilities to the enterprise. Whether it's VDI, XenApp, OS Provisioning or even Client hypervisors, Citrix owns that space. Couple that with a powerful remote desktop protocol in ICA/HDX and it has that covered very well.

When you factor in Citrix's strong relationship with Microsoft and its tight integration with System Center Configuration Manager, you find that the Citrix suite complements and fits very nicely with the management of physical assets. Citrix even has extensions for the System Center consoles so that you can manage XenApp and XenDesktop straight from within System Center. App-V is another strong integration point for the companies as well, so they have the user experience portion for physical and virtual desktops and applications covered extremely well.

MxM -- In this category the different acronyms that make up this solution are categorized. Let's start with MDM, which Citrix has a form of in CloudGateway. It's feature-poor when compared to the leading providers, but the acquisition of Zenprise plugs the gap and puts Citrix in the leader position.

Mobile Application Management is really the future of where things are going to eventually end up. No one really wants to still manage the actual physical device, but at the same time, the transition to governing the device -- meaning, the mobile applications and data -- will take some time. It's why MDM is still relevant for many enterprise organizations. A combined Zenprise and CloudGateway can become a very powerful MAM solution, a single unified storefront, so it's goodness all around.

The final category in the MxM family is Mobile Information Management. Citrix has a handle on MIM with its ShareFile offering, but Zenprise brings a significant amount of features that are enterprise friendly and required. Citrix with Zenprise plugs all the shortcomings of using just ShareFile.

Collaboration -- Citrix has an impressive lineup of collaboration tools and solutions from the GoTo portfolio, all the way to the recent Podio acquisition. Once these products are integrated with the rest of the solutions in the Citrix portfolio, the collaboration tools will provide a very nice wrapper to bring the solutions together.

The final two pillars of an EUC strategy are policy-driven security, which Citrix and Zenprise cover in some aspects, and a robust wireless infrastructure, which is outside of either company's wheelhouse. The policy-driven security that I am referring to is more about Network Access Control-based solutions. Cisco ISE coincidentally connects very nicely into Zenprise, which means another connection point for Citrix.

All in all, the Citrix acquisition of Zenprise is a very welcome step and one that solidifies Citrix's offering in the EUC space.

Next time, I'll highlight what I think VMware absolutely must do in order to respond and bring its EUC offering up to par. Until then, I would love to hear from you on what you think of this blog and whether or not I am missing anything for a true end-to-end EUC strategy for the enterprise.

Posted by Elias Khnaser on 12/10/2012 at 3:01 PM | 1 comment

The quick answer is YES! They are most definitely changing, expanding, getting more involved and complex. For the longest time the IT department in organizations was a feared entity. When I say "feared," I am using the term loosely here to imply that because the rest of the company did not understand what we did, because what we did seemed complex and "very smart" to most of them, they avoided us.

I would venture to say we came across as intimidating and as a result they avoided us on many levels -- even at the highest executive levels. They knew that there was a need for IT. A CIO might be able to communicate and bridge the gap, but IT was always viewed as a cost center, an area of the business that always spent money. But the business never understood or quantified what its return on this investment was. This led to IT organization outsourcing and a whole slew of things -- CXOs would look at the balance sheets and see this big spend in IT.

Fast forward many years and many trials and errors and catastrophes with outsourcing and other models and you find yourself on the brink of the cloud era. I'm aware that this term causes a lot of confusion and I can feel some of you giggling already. The term started a marketing bubble that implied nothing. To this day I sit with customers and when I bring up the cloud, they laugh and then say, "Let's define the cloud."

My advice to you today is, don't be those people anymore. The cloud era is here and whether it's private or public it now has shape, has form, has "teeth." We can define it, quantify it and we can build it. Heck, Microsoft even created a button in System Center Virtual Machine Manager 2012 called "Deploy Private Cloud." (For the record, that is priceless!)

When I say cloud, many of you will probably think, "If it's here, why don't I see it and feel it?" What I am telling you today is, sometimes it takes a while before something gets to your level or starts to affect you.

Recall that even virtualization started off slow. Some customers put it in test and dev; others did not even want it anywhere near the production environment; some customers did not even want it in the production Active Directory (true story) for fear it would corrupt something. All this happened because they did not understand it; it was a disruptive technology. But at some point virtualization went mainstream.

Private Cloud today is on the brink of a similar widespread adoption phase, just as virtualization was. Frankly, the cloud is at that point because of virtualization. When we virtualized our physical compute infrastructure, we saw great CapEx savings and high consolidation ratios, etc. But we really did not do things that much differently: We took the same practices that we had refined over many years and implemented them in virtualized datacenters with some minor tweaks here and there to accommodate the technology, but our role did not change much and our daily activities did not change much. We just dealt less with the hardware. Virtualization, however, introduced many new challenges that our fine-tuned processes did not account for, such as VM sprawl, storage sprawl, etc.

While this transformation was happening in the data center, another form of transformation was happening at the consumer level in that technology companies were no longer marketing to us IT folks. Instead, they found a larger crowd that could immediately consume their product using personal credit cards, and they did not have to wait for weeks and months for us to make those large purchases. Born out of that movement was the consumerization of IT.

You are probably asking yourself how that affects you, right? Consumerization broke the fear barrier that consumers had of IT. They found themselves all of a sudden not as "dumb" as they thought they were, or maybe as we made them out to be. Hey, I can have cloud storage with Dropbox, I can create my own e-mail account, I can do video conferencing with Skype just like I do at work where this mean IT guy will give me attitude if I ask for help. I can go on forever with services that were previously just a privilege to have access to as part of working for this enterprise. Today, the consumer knows what we can do and if we don't facilitate, well, they'll substitute us and, more often than not, we don't even know they are going around us.

This consumer also affected the way we do business because they are now used to getting the service they want almost instantly from their new IT best friends. The notion of "it will take two weeks" to get the server hardware and software installed and configured before the application guy or business owner can have access, well that's no longer a viable notion. Business is moving faster, our consumers are moving faster, and as a result we either catch up or get replaced. Period. The end.

So here's where the cloud comes in. When you start looking at private cloud, it very quickly becomes apparent that it is all about automation, orchestration, business process decomposition and automation, using ITIL best practices, getting more involved in accounting practices in order to enable showback and chargeback. Do you want to transform IT from a cost center to a services center? You need to understand your accounting practices, talk to them, design how they will account for these charges, and so on.
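To make showback and chargeback a little more concrete, here is a minimal Python sketch. The rates, department names and usage numbers are all made up for illustration; they do not come from any real metering system or product. The idea is simply that metered usage multiplied by a rate agreed with accounting gives each department its total.

```python
from collections import defaultdict

# Hypothetical monthly rates agreed with accounting (illustrative values only).
RATES = {
    "vcpu": 20.0,        # per vCPU per month
    "ram_gb": 5.0,       # per GB of RAM per month
    "storage_gb": 0.5,   # per GB of storage per month
}


def chargeback(usage_records, rates=RATES):
    """Sum the cost of metered resources per department.

    usage_records: iterable of (department, resource, quantity) tuples.
    Returns a dict mapping department -> total monthly charge.
    """
    totals = defaultdict(float)
    for department, resource, quantity in usage_records:
        totals[department] += rates[resource] * quantity
    return dict(totals)


# Made-up usage for two departments.
usage = [
    ("finance", "vcpu", 4),
    ("finance", "ram_gb", 16),
    ("marketing", "vcpu", 2),
    ("marketing", "storage_gb", 200),
]

report = chargeback(usage)
```

If you only publish the report to the departments, that is showback; if the departments are actually billed for those totals, that is chargeback. Either way, the rates are an accounting decision, not an IT one.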

I can talk about what cloud does for a while, but I think you get the picture. Your role is not as simple and as isolated or as siloed as it was before. It used to be Toys'R'Us for adults because we focused on the infrastructure so much and our world revolved around it. Today, I am inviting you to grow that role and expand it. Network architects are no longer going to just deal with routers and switches. Why? Because virtualization administration took away some of their duties and software-defined networking will completely take them away from the hardware, because acquisitions like Cisco's Meraki introduce network management from the cloud, and because IP storage forces them to understand storage. I can make the case for each of the other IT roles in an organization. It is converging. Embrace it.

This all culminates in converged infrastructures. No one will have the time or patience to buy things separately and put them together anymore. You are expected to be up and running faster, and be more reliable. Once there is a converged infrastructure in place, then there should be a converged admin/engineer in place -- maybe even a data center admin -- a person or group of people responsible for data center hardware. The rest of us? We get elevated to the software level with no physical data center access.

At the software level, we now need to learn ITIL best practices if we don't already, because private cloud processes are wrapped around business processes. We now have to build processes that govern us as well. We have to get involved in applications, figure out how to quickly deploy them, monitor them, and charge for them. You're probably saying, "Eli, this is not new, we do it today." YES, I know! But we do it in a very manual and slow way. Rather, we have to do it in a very automated and fast way, adding self-service and metering capabilities.

While private cloud is starting to show signs of implementations and while Microsoft and VMware have clearly identified the components and strategies, I want to stress and encourage you to start building your knowledge and understanding of the private cloud away from the sarcastic nuances that exist out there. Deploying a private cloud is not an easy task. It is a very detail-oriented, complex and exhaustive task that requires people with technical skill sets and business sense to pull it off right.

Private clouds provide for better job security because of the complexity. You become more valuable and are looked at differently. I know you are probably still doing things the way you have been, but use this time to build your skill sets so that you are leading the transformation, not trying to catch up to it.

I realize this is a controversial post and invite you to share your thoughts in the comments section.

Posted by Elias Khnaser on 12/05/2012 at 12:49 PM | 5 comments

Welcome to Windows 8 Land! I have been coming across many customers who are starting to play with Windows 8 RT and trying to connect it to their Citrix infrastructures -- with little luck. The reason? Evolution! Most organizations are still using Citrix infrastructure components that are not compatible with Windows 8 RT.

If you are using Web Interface or Citrix Secure Gateway, or both together, therein lies your problem. These two components must be upgraded before a Windows 8 RT client will be able to remotely connect.

There are three requirements for Windows 8 RT remote connectivity as follows:

- Upgrade to Access Gateway 10.x or higher: Customers who are still using the Citrix Secure Gateway should be thinking about upgrading to the Access Gateway, which comes in many different editions. For something similar to the CSG, the Access Gateway VPX would be a good alternative.

- Citrix Receiver for Windows 8 Tech Preview.

- Upgrade Web Interface to StoreFront 1.x or newer. We all love Web Interface, and while StoreFront still does not capture all the features of Web Interface, it most certainly has the ones needed for Windows 8 RT connectivity. Thus, it would be a good time to start considering an upgrade.

Another good reason to start considering StoreFront is the fact that the HTML 5 client will only work with StoreFront. Now you have two reasons -- Windows 8 RT and HTML 5 client -- to consider an upgrade. Beware, however, that the Citrix Secure Gateway will not work with StoreFront, so when you plan your upgrade make sure you are mapping the different components and know what the prerequisites are for the upgrade.
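If you want to sanity-check an environment against the requirements above before you start, the list translates into a very small script. This is only a sketch: the minimum versions come from the list above, but the inventory format and the key names are a hypothetical convention of mine, not anything a Citrix tool produces.

```python
# Minimum versions taken from the requirements listed above. The component
# keys and the inventory layout are hypothetical, for illustration only.
REQUIRED = {
    "access_gateway": (10, 0),
    "storefront": (1, 0),
}


def rt_blockers(inventory):
    """Return the reasons a Windows 8 RT client could not connect remotely.

    inventory: dict with optional keys 'access_gateway' and 'storefront'
    (version tuples such as (10, 0)), plus the booleans 'secure_gateway'
    and 'receiver_win8_preview'.
    """
    blockers = []
    for component, minimum in REQUIRED.items():
        version = inventory.get(component)
        if version is None or version < minimum:
            blockers.append(
                f"{component} must be at least {minimum[0]}.{minimum[1]}"
            )
    if inventory.get("secure_gateway"):
        blockers.append("Citrix Secure Gateway will not work with StoreFront")
    if not inventory.get("receiver_win8_preview"):
        blockers.append("Citrix Receiver for Windows 8 Tech Preview is required")
    return blockers


# A made-up legacy environment and a made-up upgraded one.
legacy = {"secure_gateway": True}
upgraded = {
    "access_gateway": (10, 0),
    "storefront": (1, 2),
    "receiver_win8_preview": True,
}

legacy_issues = rt_blockers(legacy)
upgraded_issues = rt_blockers(upgraded)
```

An empty list means none of the known blockers apply; it does not prove the connection will work, only that the prerequisites named in this post are met.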

Are you using StoreFront or trying to use Windows 8 RT? I am interested in some of the challenges you have faced and how you have overcome them. Please share in the comments here.

Posted by Elias Khnaser on 12/03/2012 at 3:01 PM | 2 comments

For a while now I have been complaining that Cisco needs to do something to reinvent itself and that it is lagging behind many other companies in our IT industry, which are all trying to carve out their own space in the new IT. So, just like that, as if someone at Cisco wants to tell me, “Oh yeah, Eli? Well here you go!” Cisco makes two acquisitions -- Cloupia and Meraki -- and frankly I am not sure which one to be more excited about. I will say that they are both fantastic pickups and will go a long way in the Cisco reinvention process. Cisco is not done yet, however. This analyst believes that Cisco will inevitably acquire a storage company in 2013 and I am betting it will be NetApp, but we will discuss this a bit closer to the end of the year as we make the 2013 predictions that y'all love so much.

Meanwhile, another company undergoing a massive reinvention effort, Dell, has also picked up an excellent automation and orchestration company in Gale Technologies.

At the beginning of 2012 I started blogging heavily about converged infrastructures and how they will enable the private cloud. I also emphasized the point that the traditional method of acquisition will soon fade away and that these stacks, these PODs of physical resources will be sold pre-configured, pre-validated, pre-tested and supported as a unit. I was bashed by my own people and cursed for daring to suggest that things are changing. Lo and behold, at the end of 2012, the emphasis is on automation and orchestration for converged infrastructures in particular, albeit, many of these tools will support different manufacturer stacks as well.

Cisco's pickup of Cloupia and Dell's pickup of Gale Technologies fall in this space. With Cloupia, Cisco takes a giant leap in its automation and orchestration of physical resources all the way to virtualized resources. If the very essence of clouds is automation, then Cisco and Dell have nabbed two companies that are leaders in that space. Now you can truly manage your physical converged infrastructures and virtual resources from a single pane of glass.

I wonder what EMC and VMware will say when and if Cisco decides that it wants to replace UIM with the new Cisco Cloupia solution in VBLOCK. Who knows? Maybe they will come to a friendly agreement of giving the customer a choice of management tools. Both Cloupia and Gale Technologies were on my radar as favorites in this space and I am glad they were picked up by these A players.

What about Meraki? Talk about an expensive acquisition: Cisco paid for Meraki just about what VMware paid for Nicira. But if you are thinking that Meraki is Cisco's answer to Nicira, you would be mistaken. Meraki is a very cool cloud networking management technology which allows you to manage your network devices completely from the cloud. Meraki comes with a complete set of hardware like wireless APs, switches and more that all communicate with the cloud, download their configurations and off they go. Meraki also simplifies the complicated tasks traditionally associated with managing, scaling, monitoring and optimizing a network. It offers profiles for common tasks. For instance, if you want to set up a VPN or a wireless AP, you no longer need to know the command line interface or spend an extended amount of time implementing that solution -- Meraki automates it and gives you more time to do things that matter.

Meraki in my opinion represents a true mindset change at Cisco, a true shift and embracing of the cloud era, one where physical hardware is not the main focus.

Are you a Meraki, Cloupia or Gale Technologies customer? I want to hear your comments. What do you think of these solutions?

Posted by Elias Khnaser on 11/26/2012 at 3:02 PM | 3 comments

Citrix had been touting Auto Support for a while and finally revealed it at Synergy Barcelona 2012 nearly a month ago. Its purpose is to help Citrix administrators better troubleshoot, diagnose and quickly resolve issues in their environments. The tool goes a step further, however, and aggregates best practices that Citrix developed from working with thousands of customers. So, not only does it help in troubleshooting your environment, it will also measure it against best practices.

The best part is not just that it's free, but that it's also a SaaS application. That makes it all the more interesting, as the information you upload for analytics should be up to the minute and accurate. You can access it if you have MyCitrix credentials.

Auto Support can be used for:

- Detecting known issues

- Best practices recommendation

- Patches that could resolve your issue

- System update information

- Crash dump analytics for NetScaler

The way Auto Support works is by collecting support data from your different products and uploading it to the system. I am sure you are wondering which tools are supported and how to collect data for them; well, here you go:

- XenApp -- To collect data for upload, follow the instructions on how to use Scout in CTX130147

- XenDesktop -- To collect data for upload, follow the instructions on how to use Scout in CTX130147

- XenServer -- Follow knowledgebase article CTX125372

- NetScaler (and you might as well call this XenScaler) -- Follow knowledgebase article CTX127900
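If you script any part of your support workflow, the list above boils down to a small lookup table. The sketch below only restates the article numbers given in this post; the function name and the dictionary are my own illustration, not part of Auto Support or Scout.

```python
# Product -> knowledgebase article describing how to collect support data,
# exactly as listed above. XenApp and XenDesktop both use Scout.
SUPPORT_DATA_ARTICLES = {
    "XenApp": "CTX130147",
    "XenDesktop": "CTX130147",
    "XenServer": "CTX125372",
    "NetScaler": "CTX127900",
}


def article_for(product):
    """Return the knowledgebase article ID for a product, or None if unlisted."""
    return SUPPORT_DATA_ARTICLES.get(product)
```

Product names are matched exactly, so a product that is not on the list above simply returns `None`.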

If you are thinking that Citrix might incorporate this in all its products moving forward as a way of immediately providing troubleshooting and healthcheck capabilities and boosting the availability of their products, then I am agreeing and nodding my head with you, as it makes perfect sense.

Now what remains to be seen is how accurate and useful this tool really is in real-world implementations. I am very interested in getting some feedback from those who have put it to good use. Please share in the comments section here.

Posted by Elias Khnaser on 11/13/2012 at 3:03 PM | 0 comments

Apparently the blog I wrote about giving the Windows Server 2012 user a rational user experience is stuck in the Most Popular articles section on the VirtualizationReview.com site, so I am guessing you folks like that article enough to search for it quite frequently.

Let's sweeten the deal even more. While I tell you in that article how to give that familiar look to the new server OS, I don't explain how to restore the Start menu. The reason for that is that Microsoft has quite literally removed the components and features that would allow that UI to show up. In other words, Microsoft did not disable the Start Menu -- the developers removed it completely from the source code so there is no registry and no hack that you could use to enable it.

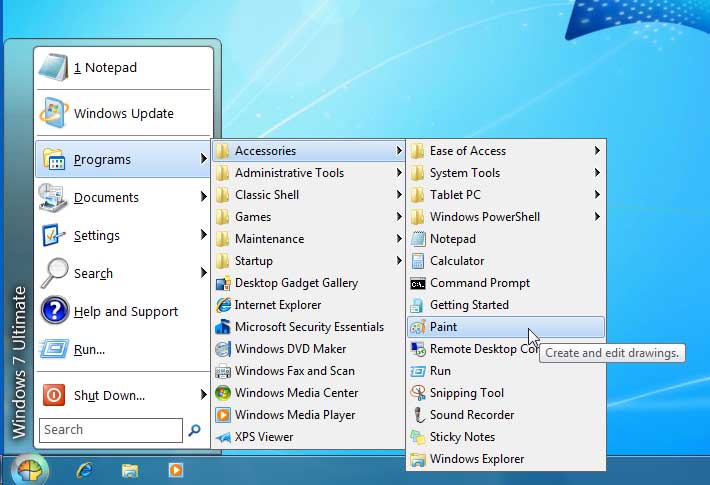

So, there's always someone out there with an answer and that answer is Classic Shell, an open source project that exists on SourceForge. It's a simple application with a very straightforward installation that enables you to restore the Explorer functionality that Microsoft removed. These Explorer functions include the classic Start Menu (Fig. 1) and a classic Explorer (Fig. 2) for browsing your files in addition to a classic Copy and much more. I really enjoyed installing this app and it worked just fine.

Figure 1. Classic Start for Windows 8.

Figure 2. Enabling classic toolbars.

I would love to get some feedback on how you're doing with Windows 8 and if you plan on using workarounds like Classic Shell in your deployments.

Posted by Elias Khnaser on 11/07/2012 at 3:04 PM | 4 comments

A question that seems to be on the minds of many today is whether to convert to Microsoft's Hyper-V or VMware's vSphere. The first thing I hear after that is a price comparison, followed by an argument in favor of one or the other. My answer, as usual, is, "It depends." IT should not be looking at everything from a cost perspective all the time -- it's not the sole function of IT. I understand that the great recession molded us in a way where all we think about is cost savings.

Today more than ever, IT is an essential part of any business's success. So, IT has to grow up and understand that its role has evolved. We are no longer able to just rack, stack, cable and install operating systems and applications and expect to rule the world. Instead of IT focusing on how to justify every penny it needs and instead of being viewed as a cost center, it is time for IT to start thinking in business terms. I live and die by one rule, and so should you: "There are no IT projects, only business projects."

With that rule in mind, instead of comparing Hyper-V and vSphere from a cost and features standpoint, consider the business implications. Have you considered the implications of your decision not just on today's requirements but also on your one-, three- and five-year IT strategy plans? I will stop with the rhetoric and get more specific. Here is a list of questions that will help you frame which virtual infrastructure is good for you (and in some cases, it might be both):

- Do you have a one-, three- and five-year IT strategic plan?

- Do you have a cloud computing strategy? Private and public?

- Have you evaluated your current applications and their supportability and performance on the virtual infrastructure of choice?

- Have you considered applications that the business could be looking at that you have not been informed of yet? Have you communicated with the business?

- Do you have a backup and recovery plan and, if so, have you considered its implications and requirements?

- Are you considering converged infrastructures?

- Are you considering automation and orchestration at both the virtual and physical layers?

- Are you considering advanced virtual networking, security and data mobility across geographies?

These are just sample questions and one could probably make the argument for both Hyper-V and vSphere, but where your role comes into play is to evaluate where both these infrastructures are at from a capabilities perspective as it aligns with your current and your short- and long-term objectives. Which company's private cloud strategy is more mature and addresses your requirements? Which company's technology is more aligned with other players in your datacenter that could help you implement your strategy? And, more important, which company's vision is more closely aligned with your needs?

You must build a business case and judge the technologies based on what they can deliver today as well as look at the potential for technology delivery in a timely manner tomorrow.

If you are reading this and thinking to yourself, "I am an SMB company and this seems complicated and applies to enterprises only," then I disagree with you. I think SMBs also have a strong ambition to leverage the cloud and limit or eliminate a managed physical infrastructure. As a result, your technology selection will affect your company's ability to deliver services.

If you choose a hypervisor and then realize that your company needs one that can migrate from a private to public cloud, for example, or if you choose a virtual infrastructure that does not have the right level or maturity of integration with a converged infrastructure (again, to deliver services), you should re-evaluate your decision-making process. It's not just about "good enough," it's about "what we are doing next." Once you've decided on one virtual infrastructure over another, both Microsoft and VMware offer free tools to help you migrate to their platforms.

Microsoft just released its Microsoft Virtual Machine Converter, which allows you to convert VMDKs created with vSphere 4.0 or newer into VHDX format to run on Windows Server 2008 R2 SP1 with Hyper-V or newer. Conversely, VMware has a free tool called VMware vCenter Converter, which helps you convert virtual machines in Microsoft's Virtual Hard Disk file format to run on vSphere.

The point I am trying to make here is that there is no lack of tools to convert you from one platform to another. My advice to you is to put the marketing and vendor pressure aside and make a decision not solely on cost today, but also on alignment and value that you can realistically deliver to your organization.

Posted by Elias Khnaser on 10/31/2012 at 3:04 PM | 1 comment

We're facing what is being called the biggest hurricane of our generation, one that is crippling the entire East Coast of the U.S. This usually turns the conversation to the backup and recovery issues that challenge any organization reliant on physical desktops in the event of a disaster. So, how about a few questions to start off this blog?

- Do you have a DR/BC plan?

- Does that plan account for desktops or just servers and data?

- Do you maintain a physical workspace recovery site?

- Do your plans call for rebuilding desktops?

- How long do you estimate to recover?

I am sure someone will make the case for having laptops and a VPN and my immediate rebuttal is, I sure hope you have enough VPN capacity for all the workers that are about to connect and I sure hope they did not forget their laptops on their desks. I also would add that I hope you have tested all these applications running over the VPN with the capacity of users expected.

With a VPN, we are opening applications locally and communicating over the link, and I hope those apps work for them. I also hope that you don't have to upgrade any applications, as someone is likely to forget to get the latest updates required for them to work. Finally, let's hope you have enough help desk personnel to address the calls that are about to hit the call center.

Let's keep going with this ... I hope that the users understand they should save the files newly created in the datacenter instead of locally on their laptops because you will be constantly figuring out how to back up that data or prevent those laptops with all this valuable information from getting lost. Remember, this is a disaster and things are more prone to happen than not, including theft, destruction, etc. It's another disaster waiting to happen when you have thousands of users running around with their laptops and corporate data on them.

Here is another scenario for you: Power is out, so folks will have to leave their homes and get to Starbucks or some other business that could provide battery and WiFi. So, hopefully those laptops are well secured so that a geeky little fella at the Starbucks who is bored and there to sniff WiFi connections isn't stealing all those files or connecting to your corporate network.

Now I am not suggesting that desktop virtualization, VDI and remote desktop session hosts will address all these issues, but they certainly will make life easier and give somewhat of a consistent user experience to the user had this technology been adopted before the disaster. It would have certainly tested the capacity capabilities of your system and put you in a position to be better prepared. The new files and data you create will definitely remain in the datacenter, your helpdesk would not be as overwhelmed and best of all you can connect from any device, so if you lose power on the main laptop, maybe that iPad has more juice and so on.

For those of you that are affected by Hurricane Sandy, I am very interested in your lessons learned. I am interested in understanding the challenges you faced and the unexpected factors like in my Starbucks example. Please share in the comments here.

Posted by Elias Khnaser on 10/29/2012 at 12:49 PM | 3 comments

This is not a joke: A CRN report claims that Apple and VMware are working together on delivering the iWork suite of productivity tools using VMware View. While it could be good news for VMware View and VMware in general, as they can ride the rails of the Apple crazy train, if this rumor is ever confirmed, it could be catastrophic for Apple. Brian Madden blogs about it with a bunch of bullet points, all of which I agree with. Still, I can't resist the urge to offer you my thoughts.

First thought is, why do you need VMware View? If you are trying to deliver iWork to Windows devices (I assume that's the plan; otherwise, what's the point?), then why do you need View? Just for the broker piece? For Horizon? For the client side? Apple could dedicate a group of developers to this and have it up and running in no time. Heck, build Horizon-type functionality into your App Store. ThinApp is of no use to you, Profile Manager does not apply -- so, what do you need View for? If anything, Apple should be working with Teradici to OEM PCoIP if they deem that the remote protocol of choice. Build that into your Mac OS X infrastructure and you can deliver a remote user experience.

The other concern is that Apple has built its reputation on providing users with the best user experience, on a sexier, flashier, trendier product that you want to carry. Those Apple devices are a fashion statement. Is Apple convinced that using a remote protocol to deliver iWork into the enterprise is the right way to combat Microsoft in the enterprise?

Apple may own the consumer market (for now), but let there be no doubt: Microsoft owns the enterprise. Let's compare the strategies. Microsoft is building an Office version for iOS to improve the user experience natively on the most popular consumer devices in the world. In essence, Microsoft is taking a page out of the Apple book. So, why is Apple trying to combat Microsoft in the enterprise by not building native versions of its products to run on Windows?

Now, let's assume the future is SaaS and mobile applications. Even then, why is Apple not building iWorks as a SaaS offering or a mobile application suite for Windows? Apple, if you do build it as a SaaS offering, please try to make it better than the Google offering, which hasn't really even moved the needle when it comes to enterprise productivity. Sure, Google had some successes with educational institutions, non-profits and some SMBs, but overall, how much did it carve out of Microsoft's enterprise market share?

And one other thing: Why is Apple building iWorks on a platform that essentially exists for legacy applications/legacy IT? If we are assuming the world is moving towards mobile apps and SaaS, then VDI is a technology for managing legacy desktops and applications and delivering them more effectively. I can define it differently by saying VDI is a bridge between what we need today and what we will be using tomorrow. At some point, the need for VDI will cease -- albeit not any time soon -- so why is Apple building a new enterprise suite for a new platform and delivering it using the wrong mechanism?

If Apple thinks that people have been "wowed" by seeing a Windows desktop on an iPad and concludes that delivering a Mac OS X desktop to a Windows device will "wow" users just as much, Apple is being shortsighted. The Windows desktop on an iPad is cool for a few minutes, but that's it. Besides, given how ugly PCs are, seeing a Windows desktop on a gorgeous form factor offers lots of wow, so I just want my PC to look cool. I like Windows, but Apple computers look awesome. If Apple thinks that this could be a way for it to deliver its software to an open x86 platform without needing to change anything, then that is also a bad strategy with a bad user experience.

I have long maintained that Apple makes innovative and sexy fashion statement products. I own practically all of them: my laptop, phone, MP3 player, Apple TV... you name it, I've got it. But when I want to do real work and not show off the product, I jump into the Office suite. So, if Apple wants to win me over in the enterprise space, it should give me an Office alternative that is easier to use, that is not so bulky with features, and that is format-compatible and fun to use. I will consider it as long as the user experience is better.

If Apple is serious about getting into the enterprise, it needs to come up with a better strategy. My recommendation to Apple for now is to stick with the consumer. I do wonder how you will continue to maintain your stock price without constantly innovating at the hardware level and, frankly, after viewing this week's keynote introducing the iPad Mini, all I have to say is that creating different sizes of the same product is not innovation. Also, the new iMac is impressive from a technology perspective, but it looks like a giant iPad (it's still impressive that it packs all these components into that form factor).

So, my million-dollar question: What are you going to do with the iMac in three years? You can't go any thinner, so unless you are working on a holographic computer, making the display bigger won't cut it.

Posted by Elias Khnaser on 10/24/2012 at 12:49 PM

What happens when you take the most powerful application delivery platform and converge that with the most powerful desktop virtualization platform on the market? Well, Citrix seems to think that magic will happen, and I tend to agree.

Last week at Citrix Synergy Barcelona, Citrix showed off Excalibur, which is supposed to finally integrate XenDesktop and XenApp into a single product with one installation that has a unified back-end infrastructure based on XenDesktop's FlexCast Management Architecture. Those of you who are emotionally attached to Independent Management Architecture, which today is the underlying architecture that runs XenApp, should probably begin to say your goodbyes.

Excalibur also promises to converge Citrix Profile Manager, Citrix EdgeSight (hopefully by then, it is either redesigned or Citrix acquires a new company to replace its technology), and StoreFront, which is the successor to the legendary Web Interface. Citrix, do you expect me to believe that after all these years, all these GB downloads, I can finally have one install package, unified architecture, two management consoles to do all this? Tears of joy and contentment are raining down my face, so please get this right.

How is Citrix unifying XenApp and XenDesktop? Well, they are moving away from the traditional component installation of XenApp atop Microsoft Remote Desktop Session Host to use the current XenDesktop Virtual Desktop Agent. So, in essence, you install the VDA on your Windows Terminal Server and that's it.

Now, granted, the VDA for XenApp will install many more components and make significantly more changes to the underlying operating system, but the idea here is a better, more streamlined admin experience installing XenApp than what's available now.

Two things to note here: First, as of this writing, Excalibur will not have a VDA package for XenApp running on 32-bit operating systems. But given Citrix's history, it will bow to popular demand. With enough customer pushback, I am sure this will happen.

Second, as my fellow CTP Shawn Bass pointed out, FMA does not have the concept of Zones, which are an important design criterion in XenApp environments because they allow us to isolate interserver communications and limit them to a particular zone. Given the importance of this feature, I would be surprised if Citrix does not address it in one way or another, as it could very well be a barrier to scalability and there are some really large XenApp environments out there. If you want them to upgrade, Citrix, you'd better get this one right.

Excalibur goes into Tech Preview November 1 and you can be assured we will cover this extensively. For now, I would love to get your reactions, thoughts, and concerns on this convergence.

Posted by Elias Khnaser on 10/22/2012 at 3:08 PM

In part one, I offered a high-level overview of a suggested end-user computing strategy. Let's break down the topics, starting with the desktop strategy.

Desktop Strategy

While we may be in the post-PC era, it doesn't mean that physical desktops and laptops are going to disappear. We need to continue to fine-tune and deploy desktop management tools like Microsoft SCCM and others. On the other hand, ignoring desktop virtualization and VDI is also not acceptable anymore and continuing the rhetoric and debate about CAPEX vs. OPEX costs and the exaggerated costs of VDI is just a bunch of "malarkey" (sorry, I had to find a use for this word).

A well-planned and well-designed desktop virtualization infrastructure can be very cost-effective and cheaper than a physical implementation. It is also about time to position the benefits of desktop virtualization from a business perspective (BC/DR, flexibility and more) and to ask not just how much it is going to cost, but what we gain. Anyone can lie with numbers and you can make them look the way you want, so let's agree to just get past the TCO of desktop virtualization -- it has a place and it is an integral part of the strategy.

MDM/MAM/MIM

Mobile Device Management, Mobile Application Management and Mobile Information Management -- they're all new terms, all colorful terms. And so, with the mobile device explosion we need to evolve our mindset from one that has traditionally always been about controlling the device to one that governs the device. Better yet, we should govern enterprise resources on these devices. MDM will aid in enforcing device passwords, remote selective wipe of the enterprise resources on the device, encryption, reporting, etc.

Mobile Application Management is about mobile applications: sandboxing and encapsulating them so that we can apply policies against them. Without sandboxing or application wrapping, it will be very difficult for enterprises to control what applications can and cannot do. This is especially apparent with native e-mail clients. You see, without sandboxing the e-mail client, mobile applications that get installed on the device could gain access to corporate contacts and information that they would otherwise not be allowed to access. Native e-mail clients are also so embedded into the mobile OS that it is difficult to sandbox them. It's why organizations like Citrix, VMware and others now provide their own version of a sandboxed e-mail as a complimentary alternative.

MAM can also serve as a consolidated application store for the enterprise where Windows, SaaS, mobile and other applications can be consumed. This is, again, a technology where there might be overlap between MDM vendors and enterprises like Citrix and VMware. As you are making your technology selection, choose a MAM solution that could integrate best with your desktop strategy and technology partner selection.

Mobile Information Management, also known as Mobile Data Management, essentially provides Dropbox-like functionality for the enterprise. The idea here is to enforce policy-driven security that would allow or deny file syncing to certain devices in certain locations. More granularly, it would allow or disallow certain file types on certain devices, etc.
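To make the policy idea a bit more concrete, here is a minimal sketch in Python of how such a sync decision might be evaluated. Everything in it is an assumption made for illustration: the names `SyncRequest`, `SyncRule` and `is_sync_allowed`, the device and location labels, and the deny-by-default behavior are all hypothetical and do not reflect any particular vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SyncRequest:
    """A hypothetical request to sync one file to one device."""
    device_type: str      # e.g. "laptop", "tablet", "phone"
    location: str         # e.g. "office", "home", "public"
    file_extension: str   # e.g. ".xlsx"


@dataclass(frozen=True)
class SyncRule:
    """A hypothetical policy rule; "*" acts as a wildcard."""
    device_type: str
    location: str
    blocked_extensions: frozenset

    def matches(self, request: SyncRequest) -> bool:
        return (self.device_type in ("*", request.device_type)
                and self.location in ("*", request.location))


def is_sync_allowed(request: SyncRequest, rules: list) -> bool:
    """The first matching rule decides; with no match, syncing is denied."""
    for rule in rules:
        if rule.matches(request):
            return request.file_extension.lower() not in rule.blocked_extensions
    return False


# Illustrative policy only: laptops sync everything anywhere, other
# devices are restricted by location, and unknown locations are denied.
RULES = [
    SyncRule("laptop", "*", frozenset()),
    SyncRule("*", "office", frozenset({".pst"})),
    SyncRule("*", "home", frozenset({".pst", ".xlsx"})),
]

if __name__ == "__main__":
    print(is_sync_allowed(SyncRequest("tablet", "home", ".xlsx"), RULES))
```

The point of the sketch is the shape of the decision: device, location and file type go in, and an allow or deny comes out, which is what separates a governed file-sync service from a consumer one.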

Social Enterprise / Collaboration

Do you really enjoy sending one-word e-mails, e-mails that say "Thank you" or "Yes"? Do you enjoy searching through thousands of e-mails to locate the conversation you were having, or to find a file attachment? If you are like me, you probably despise e-mail. I truly hate e-mail, and in my consulting world, when working on a customer's statement of work, we start versioning the SOW and sending it back and forth. There has got to be an easier way. What if we had a Facebook-like enterprise where we can collaborate with colleagues? Better yet, what if this social enterprise can be linked to our MIM solution so that we can drag files in and collaborate on them while they are in a centralized, secure location?

Of course social enterprise platforms still need to mature somewhat for the enterprise and you have to be able to answer questions like:

- What level of use of social networking will you allow?

- Are any social networking services more enterprise friendly than others?

- How are they used for work purposes? (crucial question)

- How do you see social enterprise changing communication and collaboration behavior at your company?

I will take one step further and say that I believe social enterprise platforms like SocialCast and Podio and others have the potential to become the next desktop and I have blogged about them here several times.

Wireless

Every customer tells me they have a wireless infrastructure, and I recognize that a wireless infrastructure is part of the DNA of every enterprise. What many dismiss or disregard, however, is that these wireless infrastructures were not built to handle the number of devices that are connecting or will be connecting to them. More important still, the types of services delivered over these wireless infrastructures are significantly different.

Remember, in an end-user computing strategy, you have to take into account remoting protocols like PCoIP, HDX, RDP and others. You also have to take into account the new and updated technologies that could make other services better. So, please don't ignore the wireless infrastructure.

We are also looking for a secure and scalable infrastructure with pervasive coverage to detect and mitigate sources of interference. A wireless infrastructure capable of location tracking -- why? Because that will tie very nicely with your MDM tools to enable or disable certain functionality depending on your geographic location.

Security

There is no way you are thinking about an end-user computing strategy and BYOD in particular without taking into account security, and network access control in particular. You should be investigating and planning to control wired and wireless access and dynamic differentiated access policies, enforcing context-based security, and providing self-service access and guest life cycle management via agent or agentless approaches.
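As a rough illustration of what "dynamic differentiated access" can mean in practice, here is a minimal Python sketch that maps a device's context to an access tier. The tiers, the ownership labels and the specific decisions (for example, denying guests on wired ports) are assumptions chosen for the example, not the behavior of any real network access control product.

```python
from enum import Enum


class Access(Enum):
    """Hypothetical access tiers a NAC policy might hand out."""
    FULL = "full"
    LIMITED = "limited"
    GUEST = "guest"
    DENY = "deny"


def decide_access(ownership: str, compliant: bool, connection: str) -> Access:
    """Map device context to an access tier (illustrative rules only).

    ownership:  "corporate", "byod" or "guest"
    compliant:  whether the device passed a posture check
    connection: "wired" or "wireless"
    """
    if ownership == "guest":
        # Assumed rule: guests get an isolated network, wireless only.
        return Access.GUEST if connection == "wireless" else Access.DENY
    if not compliant:
        # Assumed rule: non-compliant employee devices are kept off.
        return Access.DENY
    if ownership == "corporate":
        return Access.FULL
    if ownership == "byod":
        return Access.LIMITED
    return Access.DENY  # unknown ownership: deny by default


if __name__ == "__main__":
    print(decide_access("byod", True, "wireless"))
```

The same user can land in a different tier depending on the device and connection in use, which is the essence of context-based security in a BYOD world.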

Now, it's your turn. Do you agree that an end-user computing strategy is needed? And if so, how can we refine and fine-tune the strategy I laid out here? Comment away!

Posted by Elias Khnaser on 10/16/2012 at 12:49 PM

End-user computing has expanded so much and gotten even more complex. In this two-part series, we will explore the strategies that could be used in enterprises to address all the current issues: from consumerization and BYOD, to desktop virtualization and physical desktop management.

It used to be fairly simple and straightforward: End users either got a desktop or a laptop and those who needed a bit more accessibility got a BlackBerry for mobile e-mail, and that was it. Sophisticated enterprises managed those desktops with either Microsoft SCCM, Symantec Altiris, LANDesk or similar technologies.

Those days are gone and the situation has radically changed, with the needs and requirements of end users having evolved to the point that they have, on average, two or three devices -- a PC and smartphone and/or tablet.

Access to resources has also changed. We used to just load everything on the laptop, but now, end users want and need selective access to resources on their preferred device from anywhere at any time over any connection.

That means it's time to rethink the end-user computing strategy.

For many years, IT treated the end-user space as a second-class citizen, with no real IT talent devoted to it or any serious or major planning or strategy. The attitude was to just get it done no matter how sloppy the method. Most of our time and effort was focused on the datacenter, the crown jewel of every IT engineer's resume. We wanted to go through the ranks, through the helpdesk and get to the datacenter -- where real computing happens.

Well, today, enterprises are demanding that the same level of seriousness we dedicated to the datacenter now gets focused on the end-user computing side.

Where do we start? Let's begin by identifying the components of this new strategy:

- Desktop Strategy -- this means a strategy for physical and virtual desktops and applications

- MDM/MAM/MIM -- necessary to govern the mobile devices, applications and data

- Collaboration -- a modern way of collaborating between end users that goes beyond the traditional tools to reach the social enterprise

- Wireless infrastructure -- a robust, dynamic and scalable wireless infrastructure to support the influx of devices and services

- Security -- at the heart of any strategy is security, and end-user computing security in the age of BYOD is crucial

Now, the challenge is the ability to weave all these technologies together and avoid overlap, as some of the vendors in question provide similar technologies. For instance, most MDM vendors now have some sort of Dropbox-like functionality, but so do desktop virtualization vendors like VMware and Citrix.

Next time, we'll break down these components and discuss the strategy in more detail. In the meantime, please share your feedback with me in the comments section, especially if I have missed any high-level topics.

Posted by Elias Khnaser on 10/15/2012 at 12:49 PM