KubeCon 2026 EU Day 1 Recap -- The World's Largest Open-Source Meetup

KubeCon 2026 EU officially kicked off at 9 a.m. on Tuesday with a series of keynotes and announcements focused on how containers and Kubernetes are enabling AI. After spending a day at a pre-event, I was eager for the main conference to begin. Rather than dissecting each speaker's keynote individually, I will present my big-picture takeaways from the keynote sessions.

The event opened with a look at how much the conference has grown. The CNCF leadership team reported over 13,500 attendees, making it the world's largest open-source software meetup! That is a 10% increase over the previous year and orders of magnitude larger than the first KubeCon I attended, a scant 10 years ago in Seattle, which had maybe a couple of hundred attendees. Participants from 100 countries and more than 3,000 organizations filled the halls of the RAI Amsterdam convention center.

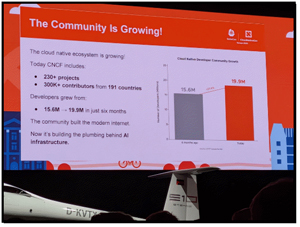

One of the most telling measures of CNCF's success was the size of its community: more than 230 projects and over 300,000 contributors worldwide.

According to the keynote speakers, at the heart of this expansion is a milestone that signals a lasting shift in the technology landscape: by their count, the world now has nearly 20 million cloud developers. This massive pool of talent is the engine behind one of the hottest trends in IT, the agentic AI era.

As developers pivot toward AI, the KubeCon community is doing more than helping build this new category of software; K8s and containers are the infrastructure powering the AI revolution.

Underscoring the AI boom and the CNCF's evolution, AI behemoth NVIDIA announced it was becoming a Platinum CNCF member.

This partnership is a turning point for the industry because it moves the cloud-native ecosystem beyond symbolic support for AI hardware accelerators toward real engineering alignment for AI workloads. A central part of the announcement was NVIDIA's donation of its GPU driver for Kubernetes Dynamic Resource Allocation (DRA) to CNCF. The driver will serve as a reference implementation for the vendor-neutral DRA API, which standardizes how AI accelerators interact with the orchestration layer. By providing this foundation, NVIDIA and CNCF aim to make AI infrastructure a common, standardized utility rather than a fragmented collection of proprietary silos.

Backing its membership with resources, NVIDIA committed $4 million over the next three years to give CNCF projects access to high-end GPU compute. This investment reflects the reality that Kubernetes has become the de facto control plane for modern AI workloads.

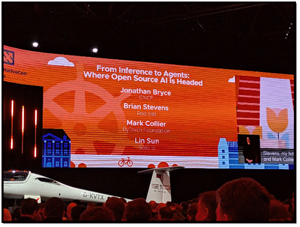

Later in the keynotes, the speakers emphasized that the industry is now navigating the "industrialization" phase of artificial intelligence, characterized by a transition from model training to model inference. While training builds intelligence, inference puts that intelligence to work. This shift is occurring with unprecedented speed. In 2023, only one-third of AI compute was dedicated to inference, while two-thirds went to model training. This year, that ratio is projected to flip, with two-thirds of all AI compute used for inference. This transition marks the point at which AI moves from a "science project" to a commercial product.

The long-term infrastructure requirements for this shift are staggering: projections suggest that inference will require 93.3 gigawatts of power by the end of the decade, exceeding the combined power consumption of all other compute workloads. In the speakers' words, inference will become the biggest workload in human history. To meet this challenge, the community needs to look beyond single-node optimizations and embrace distributed inference clusters, because handling such a massive volume of tokens demands a new level of sophistication in how resources are scheduled and managed.

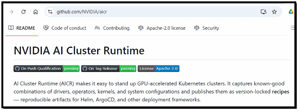

Managing this complexity by hand is impractical, so specialized tools are needed to simplify the management of the hardware on which these AI workloads run. Today, optimizing an AI cluster can require navigating more than 250 different configuration values, a hurdle that the new AI Cluster Runtime (AICR) project seeks to eliminate.

AICR provides machine-readable recipes that automate the configuration of AI accelerators, pushing the complexity down into the platform and making the infrastructure effectively invisible to developers.

Further innovations include LLM-D (Large Language Model Distributed), which was recently accepted into the CNCF sandbox.

Unlike single-node optimizers, LLM-D focuses on cluster-wide management of LLMs, tackling problems such as KV cache management and the disaggregation of the prefill and decode phases. Disaggregation matters because the two phases stress hardware differently: prefill processes the entire input prompt in one parallel pass and is compute-intensive, while decode generates output tokens one at a time and is limited by memory bandwidth. By running these phases separately, LLM-D allows for higher throughput and lower latency, optimizing hardware utilization across the entire cluster.
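To make the prefill/decode split concrete, here is a minimal Python sketch of the idea. It is not LLM-D's code or API. `Request`, `KVCacheHandle`, `prefill`, `decode`, and `serve` are hypothetical names, the "tokens" are placeholder strings, and the two pools run one after the other in a single process. In a real cluster they would be separate groups of accelerators running concurrently, with the KV cache transferred over the network between them.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    request_id: str
    prompt_tokens: int   # length of the input prompt
    max_new_tokens: int  # tokens to generate during decode


@dataclass
class KVCacheHandle:
    # Hypothetical stand-in for the KV cache that prefill produces and decode consumes.
    request_id: str
    cached_tokens: int


def prefill(req: Request) -> KVCacheHandle:
    # Prefill handles the whole prompt in one parallel pass (compute-bound)
    # and produces a KV cache entry for every prompt token.
    return KVCacheHandle(req.request_id, req.prompt_tokens)


def decode(handle: KVCacheHandle, max_new_tokens: int) -> list[str]:
    # Decode emits one token per step. Each step rereads the growing KV cache,
    # which is why this phase is bound by memory bandwidth rather than compute.
    tokens = []
    for step in range(max_new_tokens):
        handle.cached_tokens += 1
        tokens.append(f"{handle.request_id}-tok{step}")
    return tokens


def serve(requests: list[Request]) -> dict[str, list[str]]:
    prefill_queue: deque = deque(requests)  # work for the prefill pool
    decode_queue: deque = deque()           # KV caches handed off to the decode pool

    # Stage 1: the prefill pool drains its queue and hands each KV cache off.
    while prefill_queue:
        req = prefill_queue.popleft()
        decode_queue.append((prefill(req), req.max_new_tokens))

    # Stage 2: the decode pool generates output from the transferred caches.
    outputs: dict[str, list[str]] = {}
    while decode_queue:
        handle, n = decode_queue.popleft()
        outputs[handle.request_id] = decode(handle, n)
    return outputs


if __name__ == "__main__":
    print(serve([Request("a", 512, 3), Request("b", 128, 2)]))
```

Because the handoff point is the KV cache, each pool can be sized and placed on hardware that suits its bottleneck. That separation is the property the disaggregation approach described above relies on.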

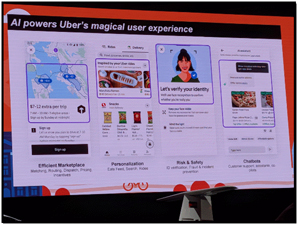

The validity of the cloud-native approach was demonstrated by organizations operating at a scale that tests the limits of modern engineering. To emphasize this, the CNCF team brought Uber on stage to discuss the maturity of Michelangelo, its machine learning platform, which serves 30 million predictions per second and trains 20,000 models each month.

Uber sees Kubernetes as the control plane for its "massive forest" of thousands of specialized, discrete AI models that power everything from marketplace pricing to safety checks.

CNCF later brought the autonomous driving company Wayve on stage to show how the same primitives support a "one giant brain" approach.

By leveraging the Kueue project for advanced job queuing, Wayve increased GPU utilization in its clusters from 85% to 97%. While Uber manages a vast array of specialized models, Wayve focuses on a single, massive end-to-end model that requires intense, focused compute resources across thousands of nodes. Despite these different architectural needs, both companies chose to use K8s as their foundation. This demonstrates that the CNCF ecosystem provides the necessary flexibility for both high-volume specialized intelligence and large-scale monolithic model training and inference.
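Job queuing raises GPU utilization by admitting work against a shared quota instead of letting each team hold GPUs idle. The Python sketch below is a toy model of that idea, loosely inspired by the ClusterQueue quota concept and the "BestEffortFIFO" queueing strategy in Kueue. It is not Kueue's API, and the `GpuQueue` and `Job` names are hypothetical. Jobs are admitted in submission order whenever they fit the remaining GPU quota. A job that does not fit stays queued but does not block smaller jobs behind it.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    gpus: int


class GpuQueue:
    """Toy quota-based admission queue (hypothetical; not Kueue's API)."""

    def __init__(self, gpu_quota: int):
        self.gpu_quota = gpu_quota
        self.pending: list[Job] = []
        self.running: dict[str, Job] = {}

    def used(self) -> int:
        return sum(job.gpus for job in self.running.values())

    def submit(self, job: Job) -> None:
        self.pending.append(job)
        self.admit()

    def finish(self, name: str) -> None:
        # Releasing quota may allow queued jobs to start.
        del self.running[name]
        self.admit()

    def admit(self) -> None:
        # Best-effort FIFO: walk pending jobs in submission order and admit each
        # one that fits the remaining quota. Jobs that don't fit stay queued
        # without blocking smaller jobs behind them.
        still_pending = []
        for job in self.pending:
            if self.used() + job.gpus <= self.gpu_quota:
                self.running[job.name] = job
            else:
                still_pending.append(job)
        self.pending = still_pending

    def utilization(self) -> float:
        return self.used() / self.gpu_quota


if __name__ == "__main__":
    q = GpuQueue(gpu_quota=8)
    q.submit(Job("train-a", 4))
    q.submit(Job("train-b", 6))  # does not fit yet, so it waits
    q.submit(Job("eval-c", 2))   # fits, so it runs ahead of train-b
    print(q.utilization())       # 6 of 8 GPUs busy
    q.finish("train-a")          # train-b can now be admitted
    print(q.utilization())       # all 8 GPUs busy
```

The trade-off in this design is that backfilling small jobs keeps GPUs busy but can delay large jobs indefinitely. Kueue also offers a strict FIFO strategy for workloads where ordering matters more than utilization.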

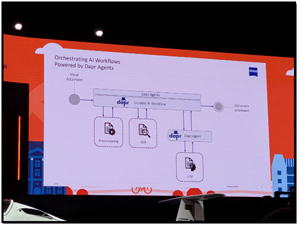

The speakers emphasized that the next frontier of this evolution is the rise of agentic AI, where autonomous systems perform complex tasks with minimal human intervention. The announcement of Dapr Agents 1.0 showcased how record-setting gliders use AI agents to monitor physical systems, and how such agents may eventually govern them.

It was cool to see a demonstration of a glider carrying an edge-computing node running KubeEdge. During its flights, the node collected data on cosmic rays and microplastics at temperatures as low as -50 degrees Celsius, with only intermittent satellite connectivity.

The success of the glider project illustrates that the cloud-native control plane is resilient enough to handle some of the most hostile conditions on and above Earth, and that it can bridge the gap between massive data centers and autonomous edge nodes with a single, unified fabric.

Vendors

KubeCon is more than the CNCF; it is also about the ecosystem surrounding Kubernetes, containers, and other cloud-native technologies. One of the most enjoyable parts of KubeCon is talking with the people and companies on the show floor. Below is a recap of discussions I had with a few of the vendors.

Solo.io

I had spoken with Christian Posta, Global Field CTO of Solo.io, virtually a few times, so it was good to sit down with him in person and see his product in action. That was no easy feat: Solo.io's booth was front and center on the show floor and packed with people.

The big announcement from Solo.io was agentevals, an open-source framework for evaluating agentic AI systems. It is designed to test how effectively AI agents perform in real-world workflows, including infrastructure automation, API orchestration, service management, and other AI-enhanced workflows.

Solo.io also announced at KubeCon that it is donating the Agent Registry project to the CNCF. The registry provides a central catalog and the visibility needed to manage approved AI artifacts and tools. You can read more about Solo.io in articles I have written about them here and here, or visit their homepage.

Edera

I had a chance to catch up with Edera's CEO, Emily Long, and its Founder and CTO, Alex Zenla. Since I have covered Edera in another article, I will focus here on the announcement they made at KubeCon: support for the KVM hypervisor alongside Krata, their bespoke, ultra-secure Xen-based hypervisor. Although they don't consider KVM as secure as Krata, they feel it is important to support the entire Kubernetes ecosystem so that users can benefit from their products without changing their infrastructure.

A few weeks before KubeCon, Edera also made a major announcement, rolling out a production-grade control plane for GPU infrastructure that enables secure multi-tenancy. It provides hardware-enforced GPU isolation and ~100 ms spin-up times, and it is vendor-agnostic. You can read more about Edera here.

Final Thoughts on Day 1

The first day of KubeCon highlighted two main themes: the importance of maintaining open standards and the role of Kubernetes (K8s) in powering AI. Together, these form the foundation of modern infrastructure, which must remain transparent and accessible.

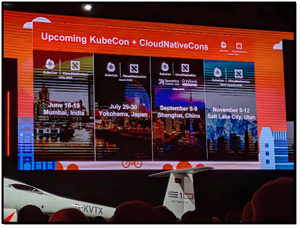

The CNCF's global reach was confirmed by KubeCon EU's attendance, which surpassed that of its US event and made it the largest open-source meetup ever. CNCF will build on this worldwide success with upcoming events in India, Japan, China, and Salt Lake City, helping the movement continue to attract and develop an international talent pool.

The first day of KubeCon reinforced what was already apparent to me: the transition to an AI world is built on foundations the cloud-native community laid years ago and has matured over the last decade. By standardizing hardware access, optimizing distributed inference, and provisioning systems at the scale required, the open-source ecosystem has positioned K8s as a path forward for the future of AI. The speakers reiterated that, for it to remain so, open source must win, and what I saw in Amsterdam suggests it is doing so.

The CNCF keynote speeches were recorded and will be available on the CNCF's YouTube channel within two weeks of the event.