
Prime Video Sparks Serverless Debate by Switching to Monolith

Amazon's Prime Video tech team sparked a vigorous debate about serverless computing after switching its audio/video monitoring service to a monolithic architecture. Bucking the serverless/distributed/microservices trend, the team reported several advantages, including a reduction in infrastructure costs of more than 90 percent.

For many years now, the prevailing architectural winds have been blowing away from monoliths and toward serverless computing, a "cloud native" technology that grew up alongside other cloud native technologies as cloud giants AWS, Microsoft and Google transformed IT.

Serverless, of course, doesn't mean that computing is done without servers; it just means the cloud platforms handle the servers for customers. Scalability is a big selling point of serverless computing, as the cloud platforms can allocate hardware and software resources according to demand, letting users pay for only what they use. Along with automatic scalability and obviating the need for server management, other advantages of the serverless approach typically include quick deployment of apps and updates and reduced latency.
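The pay-for-what-you-use trade-off can be illustrated with some simple arithmetic. The sketch below compares a usage-billed model with always-on capacity; every price and request count in it is a made-up assumption chosen for round numbers, not a real cloud rate or a Prime Video figure, and it assumes a fixed number of instances can absorb the whole load.

```python
# Illustrative only: all prices and workload sizes are hypothetical
# assumptions, not real AWS rates or Prime Video numbers.

def pay_per_use_cost(requests: int, price_per_million: float) -> float:
    """Monthly cost when billed purely by request volume."""
    return requests / 1_000_000 * price_per_million


def provisioned_cost(instances: int, price_per_instance: float) -> float:
    """Monthly cost for always-on capacity, regardless of traffic."""
    return instances * price_per_instance


def break_even_requests(instances: int, price_per_instance: float,
                        price_per_million: float) -> float:
    """Request volume at which both billing models cost the same."""
    fixed = provisioned_cost(instances, price_per_instance)
    return fixed / price_per_million * 1_000_000


if __name__ == "__main__":
    PRICE_PER_MILLION = 5.0     # hypothetical pay-per-use rate
    PRICE_PER_INSTANCE = 100.0  # hypothetical always-on instance rate
    INSTANCES = 2               # hypothetical fixed fleet size

    for monthly_requests in (1_000_000, 20_000_000, 400_000_000):
        usage = pay_per_use_cost(monthly_requests, PRICE_PER_MILLION)
        fixed = provisioned_cost(INSTANCES, PRICE_PER_INSTANCE)
        cheaper = "pay-per-use" if usage < fixed else "provisioned"
        print(f"{monthly_requests:,} requests: "
              f"usage=${usage:,.2f} fixed=${fixed:,.2f} -> {cheaper}")
```

Under these assumed numbers, usage billing wins for small or intermittent traffic, while sustained high volume favors fixed capacity. Real pricing has more dimensions (duration, memory, data transfer, orchestration steps), so this is only a starting point for a comparison.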

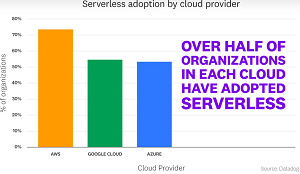

So the serverless movement took hold and, as we reported last year, "Serverless Has Gone Mainstream Across Big 3 Clouds," based on a report from Datadog.

Serverless Adoption by Cloud Provider (source: Datadog).

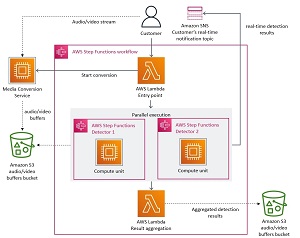

In March, however, Prime Video's tech team stirred things up in the post "Scaling up the Prime Video audio/video monitoring service and reducing costs by 90%," which explained how the move from a distributed microservices architecture to a monolithic approach helped achieve higher scale and resilience while lowering costs -- basically what serverless was supposed to do when replacing monoliths.

The Old Prime Video Serverless Architecture (source: Amazon Prime Video).

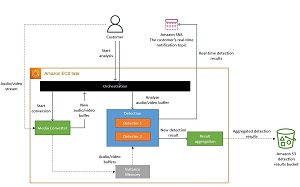

The Updated Prime Video Monolithic Architecture (source: Amazon Prime Video).

"Moving our service to a monolith reduced our infrastructure cost by over 90 percent," the Prime Video post said. "It also increased our scaling capabilities. Today, we're able to handle thousands of streams and we still have capacity to scale the service even further. Moving the solution to Amazon EC2 and Amazon ECS also allowed us to use the Amazon EC2 compute saving plans that will help drive costs down even further. Some decisions we've taken are not obvious but they resulted in significant improvements."

That article kicked off the vigorous debate, which continues this week. In fact, three new posts on the topic have appeared in the past three days, about seven weeks after the Prime Video post. Here are brief summaries of each:

Monoliths are not dinosaurs -- May 5

This was authored on the All Things Distributed site by Dr. Werner Vogels, CTO at Amazon. "Building evolvable software systems is a strategy, not a religion. And revisiting your architectures with an open mind is a must," he said. Other quotes include:

- There is no one-size-fits-all. We always urge our engineers to find the best solution, and no particular architectural style is mandated. If you hire the best engineers, you should trust them to make the best decisions.

- There is not one architectural pattern to rule them all. How you choose to develop, deploy, and manage services will always be driven by the product you're designing, the skillset of the team building it, and the experience you want to deliver to customers (and of course things like cost, speed, and resiliency).

- So, monoliths aren't dead (quite the contrary), but evolvable architectures are playing an increasingly important role in a changing technology landscape, and it's possible because of cloud technologies.

So many bad takes -- What is there to learn from the Prime Video microservices to monolith story -- May 6

This is a Medium post authored by Adrian Cockcroft, a former AWS exec who also served as a cloud architect at Netflix, giving him a unique perspective on the Prime Video situation, which is primarily about video streaming efficiency.

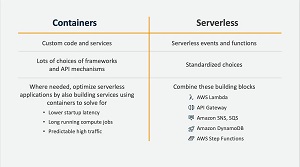

Containers vs. Serverless (source: Adrian Cockcroft, excerpt from Serverless First deck published in 2019).

"What the team did follows the advice I've been giving for years (here's a video from 2019):

> Where needed, optimize serverless applications by also building services using containers to solve for lower startup latency, long running compute jobs, and predictable high traffic

"In contrast to commentary along the lines that Amazon got it wrong, the team followed what I consider to be the best practice," Cockcroft said. "The result isn't a monolith, but there seems to be a popular trigger meme nowadays about microservices being over-sold, and a return to monoliths. There is some truth to that, as I do think microservices were over sold as the answer to everything, and I think this may have arisen from vendors who wanted to sell Kubernetes with a simple marketing message that enterprises needed to modernize by using Kubernetes to do cloud native microservices for everything."
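The advice Cockcroft cites (start serverless, then move specific pieces to containers for low startup latency, long-running jobs or predictable high traffic) can be read as a simple decision rule. The following is a minimal sketch of that reading, not anything from his deck: the `Workload` fields, the `suggest_compute` function and the 15-minute default are hypothetical names and a placeholder threshold chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Hypothetical fields, invented here to mirror the three cases in the quote.
    needs_low_startup_latency: bool
    typical_job_minutes: float
    traffic_is_predictable_and_high: bool


def suggest_compute(w: Workload, long_job_minutes: float = 15.0) -> str:
    """Default to serverless; suggest containers only for the quoted cases.

    long_job_minutes is an assumed placeholder threshold, not a figure
    from the source.
    """
    if (w.needs_low_startup_latency
            or w.typical_job_minutes > long_job_minutes
            or w.traffic_is_predictable_and_high):
        return "containers"
    return "serverless"


if __name__ == "__main__":
    # An intermittent, short-running job stays serverless.
    print(suggest_compute(Workload(False, 1.0, False)))
    # A steady, high-volume stream processor is a container candidate.
    print(suggest_compute(Workload(False, 1.0, True)))
```

A real decision would weigh cost, team skills and operational overhead as well; the point of the sketch is only that the rule starts from serverless and treats containers as a targeted optimization.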

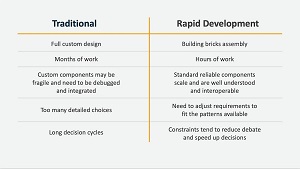

Traditional vs. Rapid Development (source: Adrian Cockcroft, excerpt from Serverless First deck published in 2019).

There's now a backlash against that messaging, maintains Cockcroft, who noted that Kubernetes comes with a cost, one worth bearing only in large-scale, large-team implementations.

"Ironically, many enterprise workloads are intermittent and small scale and very good candidates for a serverless first approach using Step Functions and Lambda," he continued. "See The Value Flywheel Effect book for more on serverless first, and read Sam Newman's Building Microservices: Designing Fine-Grained Systems book to get the best practices on when and how to use the techniques to effectively build, manage and operate this way."

AI in the Cloud: The Epic Clash of Monolithic vs. Serverless Architectures -- May 7

Published on LinkedIn, this article was authored by Reuven Cohen, reportedly a tester for OpenAI and co-founder and CEO of AwardPool. Thus his take on the Prime Video-provoked serverless vs. monolith debate comes with a heavy AI angle.

"In this ever-changing technological realm, where innovation and progress reign supreme, the cyclical nature of trends often brings forth surprising twists," he said. "The resurgence of monolithic architecture now stands as a potent challenger, raising fundamental questions that challenge the prevailing dominance of microservices and serverless approaches in the context of AI/LLM/Autonomous systems."

He listed disadvantages of monolithic architectures for AI-centric systems:

- Fluidity: AI-centric architectures require flexibility and adaptability as algorithms and models evolve. Monolithic architectures, with their tightly coupled components, hinder the agility needed for AI development and experimentation.

- Autonomy: Monolithic architectures often rely on command and centralized control, limiting the autonomy of AI agents and resulting in performance bottlenecks and reduced efficiency. Moreover most monolithic architectures are focused on large human workforce to implement and maintain them.

- Distribution: Monolithic architectures struggle with scalability and fail to leverage distributed computing resources effectively, which is crucial for large-scale data processing & decentralized command and control within AI agents.

He also listed what he called compelling advantages of serverless architectures:

- Scalability: Serverless architectures excel at scaling individual microservices based on demand, making them ideal for computationally intensive AI tasks and extensive datasets.

- Agility: The modular design of serverless architectures enables rapid development, testing, and deployment of components, enhancing overall agility in the AI development process.

- Code and Infrastructure Management: Serverless architectures streamline automated code deployment and infrastructure management, facilitating seamless updates for evolving AI systems.

"In the ongoing debate between monolithic and serverless architectures, it is important to acknowledge that both approaches have their merits and considerations," Cohen continued. "Monolithic architectures have been prevalent in large human-centric organizations due to their management advantages and historical use cases. However, as the world continues its rapid progression towards a more AI-driven future, the landscape is shifting towards architectures that embrace fluidity, autonomy, and distribution."

Ultimately, the Same Old Answer

While the Prime Video post is heavy on technical details specific to video streaming -- and the ensuing debate incorporates all kinds of attitudes and contentions -- the consensus seems to be the usual one reached in such tech trade-off discussions. That is, commentators mostly offer the same old bromides: every situation is different; use the right tool for the job; stay flexible and don't get locked in to one approach; and so on ... and on.

At any rate, the debate can serve as a starting point for organizations that might want to take another look at their own systems with a fresh perspective to see where savings can be made by bucking trends.